Uploaded by Rishabh Sharma

ANTNET: A Mobile Agent Approach To Adaptive Routing

CHAPTER 1 INTRODUCTION

Routing protocols for ad hoc networks can be classified into two major types: proactive and on-demand. Proactive protocols attempt to maintain up-to-date routing information to all nodes by periodically disseminating topology updates throughout the network. In contrast, on-demand protocols attempt to discover a route only when a route is needed. To reduce the overhead and the latency of initiating a route discovery for each packet, on-demand routing protocols use route caches. Due to mobility, cached routes easily become stale. Using stale routes causes packet losses and increases latency and overhead. In this project, we investigate how to make on-demand routing protocols adapt quickly to topology changes. This problem is important because such protocols use route caches to make routing decisions; it is challenging because topology changes are frequent.

To address the cache staleness issue in DSR (the Dynamic Source Routing protocol), prior work used adaptive timeout mechanisms. Such mechanisms use heuristics with ad hoc parameters to predict the lifetime of a link or a route. However, a predetermined choice of ad hoc parameters for certain scenarios may not work well for others, and scenarios in the real world are different from those used in simulations. Moreover, heuristics cannot accurately estimate timeouts because topology changes are unpredictable. As a result, either valid routes will be removed or stale routes will be kept in caches.

In our project, we propose proactively disseminating the broken link information to the nodes that have that link in their caches. Proactive cache updating is key to making route caches adapt quickly to topology changes. It is also important to inform only the nodes that have cached a broken link, to avoid unnecessary overhead. Thus, when a link failure is detected, our goal is to notify all reachable nodes that have cached the link about the link failure.

We define a new cache structure called a cache table to maintain the information necessary for cache updates. A cache table has no capacity limit; its size increases as new routes are discovered and decreases as stale routes are removed.

Dept Of ISE VKIT, Blore-74

2010-2011


Each node maintains in its cache table two types of information for each route. The first type of information is how well routing information is synchronized among nodes on a route: whether a link has been cached in only upstream nodes, or in both upstream and downstream nodes, or neither. The second type of information is which neighbor has learned which links through a ROUTE REPLY. We design a distributed algorithm that uses the information kept by each node to achieve distributed cache updating. When a link failure is detected, the algorithm notifies selected neighborhood nodes about the broken link: the closest upstream and/or downstream nodes on each route containing the broken link, and the neighbors that learned the link through ROUTE REPLIES. When a node receives a notification, the algorithm notifies selected neighbors. Thus, the broken link information will be quickly propagated to all reachable nodes that need to be notified.
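The cache table and the notification step described above can be illustrated with a small sketch. It is only one simplified reading of the description, not the project's implementation: the class name `CacheTableSketch`, the `Sync` enum, the string encoding of a link, and the rule used to choose upstream and downstream nodes are all assumptions made for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.Iterator;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** Hypothetical, simplified cache table kept by one node. */
class CacheTableSketch {
    /** Assumed encoding of how far a route has been synchronized along its nodes. */
    enum Sync { NONE, UPSTREAM_ONLY, UPSTREAM_AND_DOWNSTREAM }

    /** One cached source route plus its synchronization state. */
    static final class Entry {
        final List<String> route;
        final Sync sync;
        Entry(List<String> route, Sync sync) {
            this.route = new ArrayList<>(route);
            this.sync = sync;
        }
    }

    private final String self;
    private final List<Entry> entries = new ArrayList<>();
    /** Link ("A->B") mapped to the neighbors that learned it through a ROUTE REPLY. */
    private final Map<String, Set<String>> replyRecord = new HashMap<>();

    CacheTableSketch(String self) { this.self = self; }

    static String link(String from, String to) { return from + "->" + to; }

    void addRoute(List<String> route, Sync sync) { entries.add(new Entry(route, sync)); }

    /** Remembers that the neighbor learned every link of the route via a ROUTE REPLY. */
    void recordReply(String neighbor, List<String> route) {
        for (int i = 0; i + 1 < route.size(); i++) {
            replyRecord.computeIfAbsent(link(route.get(i), route.get(i + 1)),
                    k -> new LinkedHashSet<>()).add(neighbor);
        }
    }

    int size() { return entries.size(); }

    /** Drops every route that uses the broken link and returns the nodes to notify. */
    Set<String> onLinkFailure(String from, String to) {
        Set<String> notify = new LinkedHashSet<>();
        for (Iterator<Entry> it = entries.iterator(); it.hasNext();) {
            Entry e = it.next();
            int broken = indexOfLink(e.route, from, to);
            if (broken < 0) continue;              // this route does not use the link
            int me = e.route.indexOf(self);
            // Closest upstream node, unless the broken link lies between us and it.
            if (e.sync != Sync.NONE && me > 0 && me - 1 != broken) {
                notify.add(e.route.get(me - 1));
            }
            // Closest downstream node, unless the broken link lies between us and it.
            if (e.sync == Sync.UPSTREAM_AND_DOWNSTREAM
                    && me >= 0 && me + 1 < e.route.size() && me != broken) {
                notify.add(e.route.get(me + 1));
            }
            it.remove();                           // the stale route leaves the cache
        }
        Set<String> learners = replyRecord.remove(link(from, to));
        if (learners != null) notify.addAll(learners);
        notify.remove(self);
        return notify;
    }

    private static int indexOfLink(List<String> route, String from, String to) {
        for (int i = 0; i + 1 < route.size(); i++) {
            if (route.get(i).equals(from) && route.get(i + 1).equals(to)) return i;
        }
        return -1;
    }

    public static void main(String[] args) {
        CacheTableSketch c = new CacheTableSketch("C");
        c.addRoute(Arrays.asList("A", "B", "C", "D", "E"), Sync.UPSTREAM_AND_DOWNSTREAM);
        c.addRoute(Arrays.asList("C", "F"), Sync.NONE);
        c.recordReply("G", Arrays.asList("C", "D", "E"));
        System.out.println(c.onLinkFailure("D", "E")); // [B, D, G]
        System.out.println(c.size());                  // 1
    }
}
```

Because the table has no capacity limit, routes leave it only when a link they contain is reported broken, which is why the sketch removes entries inside `onLinkFailure` rather than on a timer.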

1.1 System Requirements Specification

A Software Requirements Specification (SRS) - a requirements specification for a software system - is a complete description of the behavior of a system to be developed. It includes a set of use cases that describe all the interactions the users will have with the software. Use cases are also known as functional requirements. In addition to use cases, the SRS also contains non-functional (or supplementary) requirements. Non-functional requirements are requirements which impose constraints on the design or implementation (such as performance engineering requirements, quality standards, or design constraints).

1.2 Functional Requirements:

The functional requirements are as follows:

* At regular intervals, every node launches a mobile agent (a forward ant) toward a randomly selected destination node.
* The mobile agent carries a dictionary and a stack that hold information about the nodes it visits.
* Each mobile agent selects its next hop using the information stored in the routing table.
* When the destination is reached, the agent generates a backward agent (a backward ant) and transfers its memory to it.
* The backward ant follows the same path as the forward ant, but in the opposite direction. At each node it pops the stack to learn the next hop.
* After arriving at a neighbor, the backward ant updates that node's routing table with the cost reported from the previous node.
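The forward and backward agents described above can be sketched as follows. This is a minimal illustration under stated assumptions: the class `AntSketch`, its method names, the integer link costs, and the deterministic next-hop table are hypothetical stand-ins, and the way the learned cost is accumulated is a simplification of the update the requirements describe.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical sketch of one forward ant and its backward ant. */
class AntSketch {
    /** nextHop[node][dest]: neighbor used to reach dest (assumed deterministic here). */
    private final Map<String, Map<String, String>> nextHop = new HashMap<>();
    /** Cost of each directed link, keyed as "A->B" (made-up units). */
    private final Map<String, Integer> linkCost = new HashMap<>();
    /** learned[node][dest]: cost to dest last written by a backward ant. */
    private final Map<String, Map<String, Integer>> learned = new HashMap<>();

    void addLink(String from, String to, int cost) { linkCost.put(from + "->" + to, cost); }

    void setNextHop(String node, String dest, String hop) {
        nextHop.computeIfAbsent(node, k -> new HashMap<>()).put(dest, hop);
    }

    Integer learnedCost(String node, String dest) {
        Map<String, Integer> row = learned.get(node);
        return row == null ? null : row.get(dest);
    }

    /** Sends a forward ant from source to dest, then replays its path backward. */
    List<String> launch(String source, String dest) {
        Deque<String> stack = new ArrayDeque<>();          // visited nodes, newest on top
        Map<String, Integer> dictionary = new HashMap<>(); // node -> cost of the link it took
        List<String> path = new ArrayList<>();
        String at = source;
        while (!at.equals(dest)) {                         // forward phase
            Map<String, String> row = nextHop.get(at);
            String hop = row == null ? null : row.get(dest);
            Integer cost = linkCost.get(at + "->" + hop);
            if (hop == null || cost == null || dictionary.containsKey(at)) {
                throw new IllegalStateException("no loop-free route from " + at + " to " + dest);
            }
            stack.push(at);
            dictionary.put(at, cost);
            path.add(at);
            at = hop;
        }
        path.add(dest);

        int costToDest = 0;
        while (!stack.isEmpty()) {                         // backward phase
            String node = stack.pop();                     // next hop of the backward ant
            costToDest += dictionary.get(node);
            learned.computeIfAbsent(node, k -> new HashMap<>()).put(dest, costToDest);
        }
        return path;
    }

    public static void main(String[] args) {
        AntSketch net = new AntSketch();
        net.addLink("A", "B", 2);
        net.addLink("B", "C", 3);
        net.setNextHop("A", "C", "B");
        net.setNextHop("B", "C", "C");
        System.out.println(net.launch("A", "C"));      // [A, B, C]
        System.out.println(net.learnedCost("A", "C")); // 5
        System.out.println(net.learnedCost("B", "C")); // 3
    }
}
```

The stack gives the backward ant its return path for free: popping yields the nodes in reverse visiting order, so no separate route lookup is needed on the way back.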

1.3 Non Functional Requirements:

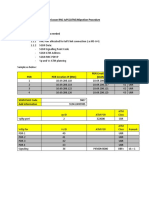

Hardware Requirements: System Hard Disk Floppy Drive Monitor Mouse Ram : Pentium IV 2.4 GHz. : 40 GB. : 1.44 Mb. : 15 VGA Colour. : Logitech. : 256 Mb.

Software Requirements: Operating system Technology Tool used : Windows XP Professional. : CORE JAVA, Swings, and Networking. : JDK 1.5, JCreator 3.5.


CHAPTER 2 LITERATURE SURVEY

A wireless ad hoc network is a decentralized type of wireless network. The network is ad hoc because it does not rely on a preexisting infrastructure, such as routers in wired networks or access points in managed (infrastructure) wireless networks. Instead, each node participates in routing by forwarding data for other nodes, and so the determination of which nodes forward data is made dynamically based on the network connectivity. In addition to the classic routing, ad hoc networks can use flooding for forwarding the data.

2.1 Mobile ad hoc network

A mobile ad hoc network (MANET), sometimes called a mobile mesh network, is a self-configuring network of mobile devices connected by wireless links. Each device in a MANET is free to move independently in any direction, and will therefore change its links to other devices frequently. Each must forward traffic unrelated to its own use, and therefore be a router. The primary challenge in building a MANET is equipping each device to continuously maintain the information required to properly route traffic. Such networks may operate by themselves or may be connected to the larger Internet. MANETs are a kind of wireless ad hoc network that usually has a routable networking environment on top of a link layer ad hoc network. They are also a type of mesh network, but many mesh networks are not mobile or not wireless. The growth of laptops and 802.11/Wi-Fi wireless networking has made MANETs a popular research topic since the mid- to late 1990s. Many academic papers evaluate protocols and abilities assuming varying degrees of mobility within a bounded space, usually with all nodes within a few hops of each other and usually with nodes sending data at a constant rate. Different protocols are then evaluated based on the packet drop rate, the overhead introduced by the routing protocol, and other measures.


Types of MANET

Vehicular Ad Hoc Networks (VANETs) are used for communication among vehicles and between vehicles and roadside equipment.

Intelligent vehicular ad hoc networks (InVANETs) apply artificial intelligence techniques to help vehicles behave intelligently in situations such as vehicle-to-vehicle collisions, accidents, and drunken driving.

Internet Based Mobile Ad-hoc Networks (iMANETs) are ad hoc networks that link mobile nodes and fixed Internet-gateway nodes. In such networks, normal ad hoc routing algorithms do not apply directly.

2.2 MANET routing protocols

Three proposed protocols have been accepted as experimental RFCs by the IETF. Two of these are presented here. Both are based on well-known algorithms from Internet routing: AODV uses the principles of distance vector routing (used in RIP), and OLSR uses the principles of link state routing (used in OSPF). A third approach, which combines the strengths of proactive and reactive schemes, is also presented. This is called a hybrid protocol.

2.2.1 Reactive protocols - AODV

Reactive protocols seek to set up routes on demand. If a node wants to initiate communication with a node to which it has no route, the routing protocol will try to establish such a route. The Ad-Hoc On-Demand Distance Vector (AODV) routing protocol is described in RFC 3561. The philosophy in AODV, like all reactive protocols, is that topology information is only transmitted by nodes on demand. When a node wishes to transmit traffic to a host to which it has no route, it generates a route request (RREQ) message that is flooded in a limited way to other nodes. This makes the control traffic overhead dynamic, and it results in an initial delay when initiating such communication.


A route is considered found when the RREQ message reaches either the destination itself or an intermediate node with a valid route entry for the destination. For as long as a route exists between two endpoints, AODV remains passive. When the route becomes invalid or lost, AODV will again issue a request.

AODV avoids the "counting to infinity" problem of the classical distance vector algorithm by using sequence numbers for every route. The counting to infinity problem is the situation where nodes update each other in a loop. Consider nodes A, B, C and D making up a MANET as illustrated in figure 2.2. A is not updated on the fact that its route to D via C is broken. This means that A has a registered route, with a metric of 2, to D. C has registered that the link to D is down, so once node B is updated on the link breakage between C and D, it will calculate the shortest path to D to be via A, using a metric of 3. C receives information that B can reach D in 3 hops and updates its metric to 4 hops. A then registers an update in hop count for its route to D via C and updates the metric to 5. And so they continue to increment the metric in a loop.

Figure: A scenario that can lead to the "counting to infinity" problem.

The way this is avoided in AODV, for the example described, is by B noticing that A's route to D is old based on a sequence number. B will then discard the route, and C will be the node with the most recent routing information by which B will update its routing table.

AODV defines three types of control messages for route maintenance:

RREQ - A route request message is transmitted by a node requiring a route to a node.


As an optimization, AODV uses an expanding ring technique when flooding these messages. Every RREQ carries a time to live (TTL) value that states for how many hops the message should be forwarded. This value is set to a predefined value at the first transmission and increased at retransmissions. Retransmissions occur if no replies are received. Data packets waiting to be transmitted (i.e. the packets that initiated the RREQ) should be buffered locally and transmitted on a FIFO principle once a route is set.

RREP - A route reply message is unicast back to the originator of a RREQ if the receiver is either the node using the requested address or has a valid route to the requested address. The message can be unicast back because every node forwarding a RREQ caches a route back to the originator.

RERR - Nodes monitor the link status of next hops in active routes. When a link breakage in an active route is detected, a RERR message is used to notify other nodes of the loss of the link. To enable this reporting mechanism, each node keeps a "precursor list" containing the IP address of each of its neighbors that are likely to use it as a next hop towards each destination.
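The expanding ring technique described for RREQ messages can be sketched as a hop-limited flood that is retried with a larger TTL. The sketch below is illustrative only: the class and method names are hypothetical, the flood is modeled as a breadth-first search over a static graph, and the constants are assumed values chosen to mirror the defaults suggested in RFC 3561. It also ignores replies from intermediate nodes that already hold a valid route.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** Hypothetical sketch of AODV-style expanding ring search on a static graph. */
class ExpandingRingSketch {
    // Assumed parameters for illustration.
    static final int TTL_START = 1;
    static final int TTL_INCREMENT = 2;
    static final int TTL_THRESHOLD = 7;
    static final int NET_DIAMETER = 35;

    /** Adds a bidirectional link to the adjacency map. */
    static void link(Map<String, List<String>> adj, String a, String b) {
        adj.computeIfAbsent(a, k -> new ArrayList<>()).add(b);
        adj.computeIfAbsent(b, k -> new ArrayList<>()).add(a);
    }

    /** True if a flood limited to ttl hops, started at src, reaches dst. */
    static boolean floodReaches(Map<String, List<String>> adj, String src, String dst, int ttl) {
        Set<String> seen = new HashSet<>();
        seen.add(src);
        List<String> frontier = new ArrayList<>();
        frontier.add(src);
        for (int hop = 0; hop < ttl && !frontier.isEmpty(); hop++) {
            List<String> next = new ArrayList<>();
            for (String node : frontier) {
                for (String neighbor : adj.getOrDefault(node, Collections.<String>emptyList())) {
                    if (seen.add(neighbor)) {      // each node forwards a given RREQ once
                        if (neighbor.equals(dst)) return true;
                        next.add(neighbor);
                    }
                }
            }
            frontier = next;
        }
        return false;
    }

    /** Returns the TTL of the first RREQ that reaches dst, or -1 if none does. */
    static int discover(Map<String, List<String>> adj, String src, String dst) {
        int ttl = TTL_START;
        while (true) {
            if (floodReaches(adj, src, dst, ttl)) return ttl;
            if (ttl >= NET_DIAMETER) return -1;    // even a network-wide flood failed
            // Grow the ring; past the threshold, fall back to a network-wide flood.
            ttl = (ttl + TTL_INCREMENT > TTL_THRESHOLD) ? NET_DIAMETER : ttl + TTL_INCREMENT;
        }
    }

    public static void main(String[] args) {
        Map<String, List<String>> adj = new HashMap<>();
        String[] chain = {"A", "B", "C", "D", "E"};
        for (int i = 0; i + 1 < chain.length; i++) link(adj, chain[i], chain[i + 1]);
        System.out.println(discover(adj, "A", "E")); // 5 (tries TTL 1, 3, then 5)
        System.out.println(discover(adj, "A", "Z")); // -1 (Z is not in the network)
    }
}
```

Starting with a small ring keeps the flood local when the destination is nearby, at the price of extra retransmissions when it is far away.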

Figure: A possible path for a route reply if A wishes to find a route to J.


2.2.2 Proactive protocols - OLSR

A proactive approach to MANET routing seeks to maintain a constantly updated understanding of the topology. The whole network should, in theory, be known to all nodes. This results in a constant overhead of routing traffic, but no initial delay in communication.

The Optimized Link State Routing protocol (OLSR) is described in RFC 3626. It is a table-driven proactive protocol. As the name suggests, it uses the link-state scheme in an optimized manner to diffuse topology information. In a classic link-state algorithm, link-state information is flooded throughout the network. OLSR uses this approach as well, but since the protocol runs in wireless multi-hop scenarios, the message flooding in OLSR is optimized to preserve bandwidth. The optimization is based on a technique called MultiPoint Relaying (MPR).

Being a table-driven protocol, OLSR operation mainly consists of updating and maintaining information in a variety of tables. The data in these tables is based on received control traffic, and control traffic is generated based on information retrieved from these tables. The route calculation itself is also driven by the tables.

OLSR defines three basic types of control messages:

HELLO - HELLO messages are transmitted to all neighbors. These messages are used for neighbor sensing and MPR calculation.

TC - Topology Control messages are the link state signaling done by OLSR. This messaging is optimized in several ways using MPRs.

MID - Multiple Interface Declaration messages are transmitted by nodes running OLSR on more than one interface. These messages list all IP addresses used by a node.


2.2.3 Benefits of Dynamic Source Routing Protocol

The Dynamic Source Routing protocol has the following advantages:

* Each node has information about the network.
* DSR reduces packet loss and latency.
* Each node maintains the route status and path for data transfer.
* Each node automatically handles the cache update process.
* It uses an on-demand, adaptive type of protocol for communication.


CHAPTER 3 SYSTEM ANALYSIS

3.1 Existing System

TCP performance degrades significantly in mobile ad hoc networks because of packet losses. Most of these losses result from route failures caused by node mobility. TCP assumes that such losses occur because of congestion and therefore invokes congestion control mechanisms, such as shrinking the congestion window and raising the retransmission timeout, which greatly reduces TCP throughput. Moreover, after a link failure is detected, several packets are dropped from the network interface queue; TCP then times out because of these packet losses, as well as because of acknowledgement losses caused by route failures. A node receives no notification about failed links from its neighboring nodes, so the source node cannot make sound routing decisions at the time of data transfer.

3.2 Limitation of Existing System

Stale routes cause packet losses if packets cannot be salvaged by intermediate nodes. Stale routes also increase packet delivery latency, since the MAC layer goes through multiple retransmissions before concluding that a link has failed. The existing system relies on adaptive timeout mechanisms, whose heuristics cannot accurately predict the lifetime of a link or a route.

If the cache size is set large, more stale routes will stay in caches because FIFO replacement becomes less effective.

3.3 Proposed System

Prior work in DSR used heuristics with ad hoc parameters to predict the lifetime of a link or a route. However, heuristics cannot accurately estimate timeouts because topology changes are unpredictable.


Prior research has proposed providing link failure feedback to TCP so that TCP can avoid responding to route failures as if congestion had occurred. We propose proactively disseminating the broken link information to the nodes that have that link in their caches. We define a new cache structure called a cache table and present a distributed cache update algorithm. Each node maintains in its cache table the information necessary for cache updates. The source node thus learns about link failures at the destination and at intermediate nodes, which helps avoid packet loss and reduces latency during data transfer across the network.

3.4 Advantages of Proposed System

Proactive cache updating also prevents stale routes from being propagated to other nodes. We defined a new cache structure called a cache table to maintain the information necessary for cache updates. We presented a distributed cache update algorithm that uses the local information kept by each node to notify all reachable nodes that have cached a broken link. The algorithm enables DSR to adapt quickly to topology changes. The algorithm quickly removes stale routes no matter how nodes move and which traffic model is used.


CHAPTER 4 PROBLEM FORMULATION

4.1 Objectives

The Dynamic Source Routing protocol has the following objectives:

* Each node has information about its neighboring nodes in the network.
* DSR reduces packet loss and latency.
* Each node maintains the route status and path information for data transfer and path requests.
* Each node automatically handles the cache update process if any link failure occurs in the network.
* It uses an on-demand, adaptive type of protocol for communication.

4.2 Software Requirement Specification

The software requirement specification is produced at the culmination of the analysis task. The function and performance allocated to software as part of system engineering are refined by establishing a complete information description, a functional representation, a representation of system behavior, an indication of performance requirements and design constraints, and appropriate validation criteria.

4.2.1 User Interface

* Swing - Swing is a set of classes that provides more powerful and flexible components than are possible with AWT. In addition to the familiar components, such as buttons, check boxes, and labels, Swing supplies several additions, including tabbed panes, scroll panes, trees, and tables.
* Applet - An applet is a dynamic and interactive program that can run inside a web page displayed by a Java-capable browser such as HotJava or Netscape.

4.2.2 Hardware Interface

Hard disk       : 40 GB
RAM             : 512 MB
Processor Speed : 3.00 GHz
Processor       : Pentium IV Processor


4.2.3 Software Interface

* JDK 1.5
* Java Swing
* MS-Access

4.3 Software Description

4.3.1 Java

Java was conceived by James Gosling, Patrick Naughton, Chris Warth, Ed Frank, and Mike Sheridan at Sun Microsystems. It is a platform-independent programming language whose features extend across the network. Java 2 introduced a new component called Swing, a set of classes that provides more powerful and flexible components than are possible with AWT.

- Swing components are lightweight, as they are not implemented by platform-specific code.
- Related classes are contained in javax.swing and its subpackages, such as javax.swing.tree.
- Swing components have more capabilities than those of AWT.

What Is Java?

Java is two things: a programming language and a platform.

The Java Programming Language

Java is a high-level programming language that is all of the following:

* Simple
* Object-oriented
* Distributed
* Interpreted
* Robust
* Secure
* Architecture-neutral
* Portable
* High-performance
* Multithreaded
* Dynamic

Java is also unusual in that each Java program is both compiled and interpreted. With a compiler, you translate a Java program into an intermediate language called Java byte codes--the platform-independent codes interpreted by the Java interpreter. With an interpreter, each Java byte code instruction is parsed and run on the computer. Compilation happens just once; interpretation occurs each time the program is executed. This figure illustrates how this works.

Java byte codes can be considered as the machine code instructions for the Java Virtual Machine (Java VM). Every Java interpreter, whether it's a Java development tool or a Web browser that can run Java applets, is an implementation of the Java VM. The Java VM can also be implemented in hardware. Java byte codes help make "write once, run anywhere" possible. The Java program can be compiled into byte codes on any platform that has a Java compiler. The byte codes can then be run on any implementation of the Java VM. For example, the same Java program can run on Windows NT, Solaris, and Macintosh.

The Java Platform

A platform is the hardware or software environment in which a program runs. The Java platform differs from most other platforms in that it's a software-only platform that runs on top of other, hardware-based platforms. Most other platforms are described as a combination of hardware and operating system. The Java platform has two components:

* The Java Virtual Machine (Java VM)
* The Java Application Programming Interface (Java API)

The Java API is a large collection of ready-made software components that provide many useful capabilities, such as graphical user interface (GUI) widgets. The Java API is grouped into libraries (packages) of related components. The following figure depicts a Java program, such as an application or applet, that's running on the Java platform. As the figure shows, the Java API and Virtual Machine insulate the Java program from hardware dependencies.

As a platform-independent environment, Java can be a bit slower than native code. However, smart compilers, well-tuned interpreters, and just-in-time byte code compilers can bring Java's performance close to that of native code without threatening portability.

What Can Java Do?

Probably the most well-known Java programs are Java applets. An applet is a Java program that adheres to certain conventions that allow it to run within a Java-enabled browser. However, Java is not just for writing cute, entertaining applets for the World Wide Web ("Web"). Java is a general-purpose, high-level programming language and a powerful software platform. Using the generous Java API, we can write many types of programs.

The most common types of programs are probably applets and applications, where a Java application is a standalone program that runs directly on the Java platform. How does the Java API support all of these kinds of programs? With packages of software components that provide a wide range of functionality. The core API is the API included in every full implementation of the Java platform. The core API gives you the following features:

* The Essentials: Objects, strings, threads, numbers, input and output, data structures, system properties, date and time, and so on.
* Applets: The set of conventions used by Java applets.
* Networking: URLs, TCP and UDP sockets, and IP addresses.
* Internationalization: Help for writing programs that can be localized for users worldwide. Programs can automatically adapt to specific locales and be displayed in the appropriate language.
* Security: Both low-level and high-level, including electronic signatures, public/private key management, access control, and certificates.
* Software components: Known as JavaBeans, these can plug into existing component architectures such as Microsoft's OLE/COM/Active-X architecture, OpenDoc, and Netscape's Live Connect.
* Object serialization: Allows lightweight persistence and communication via Remote Method Invocation (RMI).
* Java Database Connectivity (JDBC): Provides uniform access to a wide range of relational databases.

Java not only has a core API, but also standard extensions. The standard extensions define APIs for 3D, servers, collaboration, telephony, speech, animation, and more.

How Will Java Change My Life?

Java is likely to make your programs better while requiring less effort than other languages. We believe that Java will help you do the following:


Get started quickly: Although Java is a powerful object-oriented language, it's easy to learn, especially for programmers already familiar with C or C++.

Write less code: Comparisons of program metrics (class counts, method counts, and so on) suggest that a program written in Java can be four times smaller than the same program in C++.

Write better code: The Java language encourages good coding practices, and its garbage collection helps you avoid memory leaks. Java's object orientation, its JavaBeans component architecture, and its wide-ranging, easily extendible API let you reuse other people's tested code and introduce fewer bugs.

Develop programs faster: Your development time may be as much as twice as fast versus writing the same program in C++. Why? You write fewer lines of code with Java and Java is a simpler programming language than C++.

Avoid platform dependencies with 100% Pure Java: You can keep your program portable by following the purity tips mentioned throughout this book and avoiding the use of libraries written in other languages.

Write once, run anywhere: Because 100% Pure Java programs are compiled into machine-independent byte codes, they run consistently on any Java platform.

Distribute software more easily: You can upgrade applets easily from a central server. Applets take advantage of the Java feature of allowing new classes to be loaded "on the fly," without recompiling the entire program.

We now explore the java.net package, which provides support for networking. Its creators have called Java "programming for the Internet." These networking classes encapsulate the socket paradigm pioneered in the Berkeley Software Distribution (BSD) from the University of California at Berkeley.

4.3.2 Networking Basics

Ken Thompson and Dennis Ritchie developed UNIX in concert with the C language at Bell Telephone Laboratories, Murray Hill, New Jersey, in 1969. In 1978, Bill Joy was leading a project at Cal Berkeley to add many new features to UNIX, such as virtual memory and full-screen display capabilities. By early 1984, just as Bill was leaving to found Sun Microsystems, he shipped 4.2BSD, commonly known as Berkeley UNIX. 4.2BSD came with a fast file system, reliable signals, interprocess communication, and, most important, networking. The networking support first found in 4.2 eventually became the de facto standard for the Internet. Berkeley's implementation of TCP/IP remains the primary standard for communications with the Internet. The socket paradigm for interprocess and network communication has also been widely adopted outside of Berkeley.

4.3.3 Socket Overview

A network socket is a lot like an electrical socket. Various plugs around the network have a standard way of delivering their payload. Anything that understands the standard protocol can plug in to the socket and communicate. Internet Protocol (IP) is a low-level routing protocol that breaks data into small packets and sends them to an address across a network; it does not guarantee delivery of those packets to the destination. Transmission Control Protocol (TCP) is a higher-level protocol that manages to reliably transmit data. A third protocol, User Datagram Protocol (UDP), sits next to TCP and can be used directly to support fast, connectionless, unreliable transport of packets.

Client/Server

A server is anything that has some resource that can be shared. There are compute servers, which provide computing power; print servers, which manage a collection of printers; disk servers, which provide networked disk space; and web servers, which store web pages. A client is simply any other entity that wants to gain access to a particular server. In Berkeley sockets, the notion of a socket allows a single computer to serve many different clients at once, as well as serving many different types of information.
This feat is managed by the introduction of a port, which is a numbered socket on a particular machine. A server process is said to listen to a port until a client connects to it. A server is allowed to accept multiple clients connected to the same port number, although each session is unique. To manage multiple client connections, a server process must be multithreaded or have some other means of multiplexing the simultaneous I/O.

Reserved Sockets

Once connected, a higher-level protocol ensues, which is dependent on which port you are using. TCP/IP reserves the lower 1,024 ports for specific protocols. Port number 21 is for FTP, 23 is for Telnet, 25 is for e-mail, 79 is for finger, 80 is for HTTP, 119 is for Netnews, and the list goes on. It is up to each protocol to determine how a client should interact with the port.

Java and the Net

Java supports TCP/IP by extending the already established stream I/O interface. Java supports both the TCP and UDP protocol families. TCP is used for reliable stream-based I/O across the network. UDP supports a simpler, hence faster, point-to-point datagram-oriented model.

InetAddress

The InetAddress class is used to encapsulate both the numerical IP address and the domain name for that address. We interact with this class by using the name of an IP host, which is more convenient and understandable than its IP address. The InetAddress class hides the number inside. As of Java 2, version 1.4, InetAddress can handle both IPv4 and IPv6 addresses.

Factory Methods

The InetAddress class has no visible constructors. To create an InetAddress object, we use one of the available factory methods. Factory methods are merely a convention whereby static methods in a class return an instance of that class. This is done in lieu of overloading a constructor with various parameter lists when having unique method names makes the results much clearer. Three commonly used InetAddress factory methods are shown here.

static InetAddress getLocalHost( ) throws UnknownHostException
static InetAddress getByName(String hostName) throws UnknownHostException
static InetAddress[ ] getAllByName(String hostName) throws UnknownHostException


The getLocalHost( ) method simply returns the InetAddress object that represents the local host. The getByName( ) method returns an InetAddress for a host name passed to it. If these methods are unable to resolve the host name, they throw an UnknownHostException. On the Internet, it is common for a single name to be used to represent several machines. In the world of web servers, this is one way to provide some degree of scaling. The getAllByName( ) factory method returns an array of InetAddresses that represent all of the addresses that a particular name resolves to. It will also throw an UnknownHostException if it can't resolve the name to at least one address. Java 2, version 1.4 also includes the factory method getByAddress( ), which takes an IP address and returns an InetAddress object. Either an IPv4 or an IPv6 address can be used.

Instance Methods

The InetAddress class also has several other methods, which can be used on the objects returned by the methods just discussed. Here are some of the most commonly used.

boolean equals(Object other) - Returns true if this object has the same Internet address as other.
byte[ ] getAddress( ) - Returns a byte array that represents the object's Internet address in network byte order.
String getHostAddress( ) - Returns a string that represents the host address associated with the InetAddress object.
String getHostName( ) - Returns a string that represents the host name associated with the InetAddress object.
boolean isMulticastAddress( ) - Returns true if this Internet address is a multicast address. Otherwise, it returns false.
String toString( ) - Returns a string that lists the host name and the IP address for convenience.

Internet addresses are looked up in a series of hierarchically cached servers. That means that your local computer might know a particular name-to-IP-address mapping automatically, such as for itself and nearby servers. For other names, it may ask a local DNS server for IP address information.
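A short program can exercise the factory and instance methods listed above. This is a minimal sketch: the class `InetAddressSketch` and its helpers `parse` and `describe` are hypothetical names introduced for illustration, and only literal IP addresses are used so that the example does not depend on a DNS server being reachable.

```java
import java.net.InetAddress;
import java.net.UnknownHostException;

/** Hypothetical demo of InetAddress factory and instance methods. */
class InetAddressSketch {
    /** Parses a literal IP address; literals are checked locally, without a DNS lookup. */
    static InetAddress parse(String literal) {
        try {
            return InetAddress.getByName(literal);
        } catch (UnknownHostException e) {
            throw new IllegalArgumentException("not a valid address: " + literal, e);
        }
    }

    /** Summarizes an address using getHostAddress, isMulticastAddress and getAddress. */
    static String describe(InetAddress a) {
        return a.getHostAddress()
                + " multicast=" + a.isMulticastAddress()
                + " bytes=" + a.getAddress().length;
    }

    public static void main(String[] args) throws UnknownHostException {
        InetAddress loopback = parse("127.0.0.1");
        System.out.println(describe(loopback));           // 127.0.0.1 multicast=false bytes=4
        System.out.println(describe(parse("224.0.0.1"))); // 224.0.0.1 multicast=true bytes=4

        // getByAddress builds the same address from raw bytes in network byte order.
        InetAddress fromBytes = InetAddress.getByAddress(new byte[] {127, 0, 0, 1});
        System.out.println(loopback.equals(fromBytes));   // true
    }
}
```

Calls such as getLocalHost( ) or getByName( ) with a real host name work the same way, but their results depend on the machine's name resolution setup, so they are left out of the sketch.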
If that server doesnt have a particular address, it Dept Of ISE VKIT, Blore-74 2010-2011 Page 20

ANTNET: A Mobile Agent Approach To Adaptive Routing

can go to a remote site and ask for it. This can continue all the way up to the root server, called InterNIC (internic.net). TCP/IP Client Sockets TCP/IP sockets are used to implement reliable, bidirectional, persistent, point-topoint, stream-based connections between hosts on the Internet. A socket can be used to connect Javas I/O system to other programs that may reside either on the local machine or on any other machine on the Internet. There are two kinds of TCP sockets in Java. One is for servers, and the other is for clients. The ServerSocket class is designed to be a listener, which waits for clients to connect before doing anything. The Socket class is designed to connect to server sockets and initiate protocol exchanges. The creation of a Socket object implicitly establishes a connection between the client and server. There are no methods or constructors that explicitly expose the details of establishing that connection. Here are two constructors used to create client sockets: Socket(String hostName, int port) Creates a socket connecting the local host to

the named host and port; can throw an UnknownHostException or anIOException. Socket(InetAddress ipAddress, int port) Creates a socket using a preexisting

InetAddress object and a port; can throw an IOException. A socket can be examined at any time for the address and port information associated with it, by use of the following methods: InetAddress getInetAddress( )- Returns the InetAddress associated with the Socket object. int getPort( ) Returns the remote port to which this Socket object is connected. int getLocalPort( ) Returns the local port to which this Socket object is connected. Once the Socket object has been created, it can also be examined to gain access to the input and output streams associated with it. Each of these methods can throw an IOException if the sockets have been invalidated by a loss of connection on the Net. InputStream getInputStream( )Returns the InputStream associated with the invoking socket.


OutputStream getOutputStream( ) - Returns the OutputStream associated with the invoking socket.

TCP/IP Server Sockets

Java has a different socket class that must be used for creating server applications. The ServerSocket class is used to create servers that listen for either local or remote client programs to connect to them on published ports. ServerSockets are quite different from normal Sockets. When we create a ServerSocket, it registers itself with the system as having an interest in client connections.

The constructors for ServerSocket reflect the port number on which we wish to accept connections and, optionally, how long we want the queue for that port to be. The queue length tells the system how many client connections it can leave pending before it should simply refuse connections. The default is 50. The constructors might throw an IOException under adverse conditions. Here are the constructors:

ServerSocket(int port) - Creates a server socket on the specified port with a queue length of 50.
ServerSocket(int port, int maxQueue) - Creates a server socket on the specified port with a maximum queue length of maxQueue.
ServerSocket(int port, int maxQueue, InetAddress localAddress) - Creates a server socket on the specified port with a maximum queue length of maxQueue. On a multihomed host, localAddress specifies the IP address to which this socket binds.

ServerSocket has a method called accept( ), which is a blocking call that waits for a client to initiate communications and then returns a normal Socket that is used for communication with the client.
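The InetAddress, Socket and ServerSocket classes described above can be seen working together in a minimal sketch. It is only an illustration, not the project's code: the class name EchoDemo and its methods are hypothetical, and the choice of the loopback address with an operating-system-assigned port (port 0) is an assumption made so that the example runs on a single machine.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.io.PrintWriter;
import java.io.UncheckedIOException;
import java.net.InetAddress;
import java.net.ServerSocket;
import java.net.Socket;

public class EchoDemo {

    // Client side: connects to the given address and port, sends one line,
    // and returns the single line that the server sends back.
    static String sendAndReceive(InetAddress address, int port, String message) throws IOException {
        try (Socket socket = new Socket(address, port);
             PrintWriter out = new PrintWriter(socket.getOutputStream(), true);
             BufferedReader in = new BufferedReader(new InputStreamReader(socket.getInputStream()))) {
            out.println(message);
            return in.readLine();
        }
    }

    // Server side: accepts exactly one client and echoes one line back to it.
    static void serveOneClient(ServerSocket server) {
        try (Socket client = server.accept(); // blocks until a client connects
             BufferedReader in = new BufferedReader(new InputStreamReader(client.getInputStream()));
             PrintWriter out = new PrintWriter(client.getOutputStream(), true)) {
            out.println(in.readLine());
        } catch (IOException e) {
            System.err.println("Server error: " + e.getMessage());
        }
    }

    // Starts a one-shot echo server on a free loopback port and sends it one message.
    static String roundTrip(String message) {
        InetAddress loopback = InetAddress.getLoopbackAddress();
        // Port 0 asks the system for any free port; 50 is the queue length.
        try (ServerSocket server = new ServerSocket(0, 50, loopback)) {
            Thread serverThread = new Thread(() -> serveOneClient(server));
            serverThread.start();
            String reply = sendAndReceive(loopback, server.getLocalPort(), message);
            serverThread.join();
            return reply;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println("Server replied: " + roundTrip("hello"));
    }
}
```

The server thread blocks in accept( ) until the client's Socket constructor establishes the connection, which mirrors the division of roles between the two classes.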

4.3.4 URL

The Web is a loose collection of higher-level protocols and file formats, all unified in a web browser. One of the most important aspects of the Web is that Tim Berners-Lee devised a scalable way to locate all of the resources of the Net. The Uniform Resource Locator (URL) is used to name anything and everything reliably. The URL provides a reasonably intelligible form to uniquely identify or address information on the Internet. URLs are ubiquitous; every browser uses them to identify information on the Web. Within Java's network class library, the URL class provides a simple, concise API to access information across the Internet using URLs.

Format

Two examples of URLs are http://www.osborne.com/ and http://www.osborne.com:80/index.htm. A URL specification is based on four components. The first is the protocol to use, separated from the rest of the locator by a colon (:). Common protocols are http, ftp, gopher, and file, although these days almost everything is being done via HTTP. The second component is the host name or IP address of the host to use; this is delimited on the left by double slashes (//) and on the right by a slash (/) or optionally a colon (:). The third component, the port number, is optional; it is delimited on the left from the host name by a colon (:) and on the right by a slash (/). The fourth part is the actual file path. Most HTTP servers will append a file named index.html or index.htm to URLs that refer directly to a directory resource.

Java's URL class has several constructors, and each can throw a MalformedURLException. One commonly used form specifies the URL with a string that is identical to what is displayed in a browser:

URL(String urlSpecifier)

The next two forms of the constructor break up the URL into its component parts:

URL(String protocolName, String hostName, int port, String path)
URL(String protocolName, String hostName, String path)

Another frequently used constructor uses an existing URL as a reference context and then creates a new URL from that context:

URL(URL urlObj, String urlSpecifier)

The following method returns a URLConnection object associated with the invoking URL object; it may throw an IOException:

URLConnection openConnection( )

4.3.5 JDBC


In an effort to set an independent database standard API for Java, Sun Microsystems developed Java Database Connectivity, or JDBC. JDBC offers a generic SQL database access mechanism that provides a consistent interface to a variety of RDBMSs. This consistent interface is achieved through the use of plug-in database connectivity modules, or drivers. If a database vendor wishes to have JDBC support, he or she must provide the driver for each platform that the database and Java run on.

To gain a wider acceptance of JDBC, Sun based JDBC's framework on ODBC. As you discovered earlier in this chapter, ODBC has widespread support on a variety of platforms. Basing JDBC on ODBC will allow vendors to bring JDBC drivers to market much faster than developing a completely new connectivity solution.

JDBC Goals

Few software packages are designed without goals in mind. JDBC is one whose many goals drove the development of the API. These goals, in conjunction with early reviewer feedback, have finalized the JDBC class library into a solid framework for building database applications in Java. The goals that were set for JDBC are important. They will give you some insight as to why certain classes and functionalities behave the way they do. The design goals for JDBC are as follows:

1. SQL Level API
The designers felt that their main goal was to define a SQL interface for Java. Although not the lowest database interface level possible, it is at a low enough level for higher-level tools and APIs to be created. Conversely, it is at a high enough level for application programmers to use it confidently. Attaining this goal allows future tool vendors to generate JDBC code and to hide many of JDBC's complexities from the end user.

2. SQL Conformance
SQL syntax varies as you move from database vendor to database vendor. In an effort to support a wide variety of vendors, JDBC will allow any query statement to be passed through it to the underlying database driver. This allows the connectivity module to handle non-standard functionality in a manner that is suitable for its users.

3. JDBC must be implementable on top of common database interfaces
The JDBC SQL API must sit on top of other common SQL level APIs. This goal allows JDBC to use existing ODBC level drivers by the use of a software interface. This interface would translate JDBC calls to ODBC and vice versa.

4. Provide a Java interface that is consistent with the rest of the Java system
Because of Java's acceptance in the user community thus far, the designers feel that they should not stray from the current design of the core Java system.

5. Keep it simple
This goal probably appears in all software design goal listings. JDBC is no exception. Sun felt that the design of JDBC should be very simple, allowing for only one method of completing a task per mechanism. Allowing duplicate functionality only serves to confuse the users of the API.

6. Use strong, static typing wherever possible
Strong typing allows more error checking to be done at compile time, so fewer errors appear at runtime.

7. Keep the common cases simple
Because, more often than not, the usual SQL calls used by the programmer are simple SELECTs, INSERTs, DELETEs and UPDATEs, these queries should be simple to perform with JDBC. However, more complex SQL statements should also be possible.


CHAPTER 5 SYSTEM DESIGN

5.1 Design Overview

On-demand Route Maintenance results in delayed awareness of mobility, because a node is not notified when a cached route breaks until it uses the route to send packets. We classify a cached route into three types: pre-active, if a route has not been used; active, if a route is being used; and post-active, if a route was used before but now is not. It is not necessary to detect whether a route is active or post-active, but these terms help clarify the cache staleness issue. Stale pre-active and post-active routes will not be detected until they are used. Because nodes respond to ROUTE REQUESTS with cached routes, stale routes may be quickly propagated to the caches of other nodes. Thus, pre-active and post-active routes are important sources of cache staleness.

When a node detects a link failure, our goal is to notify all reachable nodes that have cached that link to update their caches. To achieve this goal, the node detecting a link failure needs to know which nodes have cached the broken link and needs to notify such nodes efficiently. This goal is very challenging because of mobility and the fast propagation of routing information.

Our solution is to keep track of topology propagation state in a distributed manner. Topology propagation state means which node has cached which link. In a cache table, a node not only stores routes but also maintains two types of information for each route: (1) how well routing information is synchronized among nodes on a route; (2) which neighbor has learned which links through a ROUTE REPLY. Each node gathers such information during route discoveries and data transmission. The two types of information are sufficient, because each node knows for each cached link which neighbors have that link in their caches.

Each entry in the cache table contains a field called DataPackets. This field records whether a node has forwarded 0, 1, or 2 data packets. A node knows how well routing information is synchronized through the first data packet. When forwarding a ROUTE REPLY, a node caches only the downstream links; thus, its downstream nodes did not cache the first downstream link through this ROUTE REPLY. When receiving the first data packet, the node knows that upstream nodes have cached all downstream links. The node adds the upstream links to the route consisting of the downstream links. Thus, when a downstream link is broken, the node knows which upstream node needs to be notified.

The node also sets DataPackets to 1 before it forwards the first data packet to the next hop. If the node can successfully deliver this packet, it is highly likely that the downstream nodes will cache the first downstream link; otherwise, they will not cache the link through forwarding packets with this route. Thus, if DataPackets in an entry is 1 and the route is the same as the source route in the packet encountering a link failure, downstream nodes did not cache the link. However, if DataPackets is 1 and the route is different from the source route in the packet, downstream nodes cached the link when the first data packet traversed the route. If DataPackets is 2, then downstream nodes also cached the link, whether or not the route is the same as the source route in the packet.

Each entry in the cache table contains a field called ReplyRecord. This field records which neighbor learned which links through a ROUTE REPLY. Before forwarding a ROUTE REPLY, a node records the neighbor to which the ROUTE REPLY is sent and the downstream links as an entry. Thus, when an entry contains a broken link, the node will know which neighbor needs to be notified.

The algorithm uses the information kept by each node to achieve distributed cache updating.
When a node detects a link failure while forwarding a packet, the algorithm checks the DataPackets field of the cache entries containing the broken link:
(1) If it is 0, indicating that the node has not forwarded any data packet using the route, then no downstream nodes need to be notified because they did not cache the broken link.
(2) If it is 1 and the route being examined is the same as the source route in the packet, indicating that the packet is the first data packet, then no downstream nodes need to be notified but all upstream nodes do.
(3) If it is 1 and the route being examined is different from the source route in the packet, then both upstream and downstream nodes need to be notified, because the first data packet has traversed the route.
(4) If it is 2, then both upstream and downstream nodes need to be notified, because at least one data packet has traversed the route.

The algorithm notifies the closest upstream and/or downstream nodes and the neighbors that learned the broken link through ROUTE REPLIES. When a node receives a notification, the algorithm notifies selected neighbors: upstream and/or downstream neighbors, and other neighbors that have cached the broken link through ROUTE REPLIES. Thus, the broken link information will be quickly propagated to all reachable nodes that have that link in their caches.

The Definition of a Cache Table

We design a cache table that has no capacity limit. Having no capacity limit allows DSR to store all discovered routes and thus reduces route discoveries. The cache size increases as new routes are discovered and decreases as stale routes are removed. There are four fields in a cache table entry:

Route: It stores the links starting from the current node to a destination or from a source to a destination.
SourceDestination: It is the source and destination pair.
DataPackets: It records whether the current node has forwarded 0, 1, or 2 data packets. It is 0 initially, incremented to 1 when the node forwards the first data packet, and incremented to 2 when it forwards the second data packet.
ReplyRecord: This field may contain multiple entries and has no capacity limit. A ReplyRecord entry has two fields: the neighbor to which a ROUTE REPLY is forwarded and the route starting from the current node to a destination.

A ReplyRecord entry is removed in two cases: when its second field contains a broken link, and when the concatenation of its two fields is a sub-route of the source route, taken from the previous node in the source route to the destination of the data packet.
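The four fields of a cache table entry and the four DataPackets cases can be sketched as a small data structure. This is a hedged illustration under stated assumptions, not the project's implementation: the names CacheTableSketch, CacheEntry, ReplyRecord, Notify, decide and neighborsToNotify are hypothetical, node addresses are modelled as strings, a link is treated as a directed pair of adjacent nodes, and "same route" is taken to mean equal node lists. For case (1) the text only rules out downstream notification; returning NONE there is an assumption of this sketch.

```java
import java.util.ArrayList;
import java.util.List;

public class CacheTableSketch {

    // Returns true if 'route' contains the directed link from -> to.
    static boolean hasLink(List<String> route, String from, String to) {
        for (int i = 0; i + 1 < route.size(); i++) {
            if (route.get(i).equals(from) && route.get(i + 1).equals(to)) {
                return true;
            }
        }
        return false;
    }

    // One ReplyRecord entry: the neighbor a ROUTE REPLY was forwarded to,
    // and the route from the current node to the destination.
    static final class ReplyRecord {
        final String neighbor;
        final List<String> route;

        ReplyRecord(String neighbor, List<String> route) {
            this.neighbor = neighbor;
            this.route = route;
        }
    }

    // One cache table entry holding the four fields named in the text.
    static final class CacheEntry {
        final List<String> route;      // Route
        final String source;           // SourceDestination (first half)
        final String destination;      // SourceDestination (second half)
        int dataPackets = 0;           // DataPackets: 0, 1, or 2
        final List<ReplyRecord> replyRecords = new ArrayList<>(); // ReplyRecord

        CacheEntry(List<String> route, String source, String destination) {
            this.route = route;
            this.source = source;
            this.destination = destination;
        }

        // Called when the node forwards a data packet on this route; stops at 2.
        void recordForwardedPacket() {
            if (dataPackets < 2) {
                dataPackets++;
            }
        }

        // Neighbors that learned the broken link through a ROUTE REPLY.
        List<String> neighborsToNotify(String from, String to) {
            List<String> result = new ArrayList<>();
            for (ReplyRecord record : replyRecords) {
                if (hasLink(record.route, from, to)) {
                    result.add(record.neighbor);
                }
            }
            return result;
        }
    }

    enum Notify { NONE, UPSTREAM_ONLY, UPSTREAM_AND_DOWNSTREAM }

    // Applies cases (1) to (4) to an entry that contains the broken link.
    static Notify decide(CacheEntry entry, List<String> packetSourceRoute) {
        if (entry.dataPackets == 0) {
            return Notify.NONE;                 // case (1); assumption, see lead-in
        }
        if (entry.dataPackets == 1 && entry.route.equals(packetSourceRoute)) {
            return Notify.UPSTREAM_ONLY;        // case (2)
        }
        return Notify.UPSTREAM_AND_DOWNSTREAM;  // cases (3) and (4)
    }

    public static void main(String[] args) {
        List<String> route = new ArrayList<>(List.of("A", "B", "C"));
        CacheEntry entry = new CacheEntry(route, "A", "C");
        entry.recordForwardedPacket();
        System.out.println(decide(entry, route));
    }
}
```

Neighbors recorded in ReplyRecord are looked up separately from the upstream/downstream decision, since the text notifies them regardless of the DataPackets value.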


Module 1: Route Request

When a source node wants to send packets to a destination to which it does not have a route, it initiates a Route Discovery by broadcasting a ROUTE REQUEST. The node receiving a ROUTE REQUEST checks whether it has a route to the destination in its cache. If it has, it sends a ROUTE REPLY to the source including a source route, which is the concatenation of the source route in the ROUTE REQUEST and the cached route. If the node does not have a cached route to the destination, it adds its address to the source route and rebroadcasts the ROUTE REQUEST. When the destination receives the ROUTE REQUEST, it sends a ROUTE REPLY containing the source route to the source. Each node forwarding a ROUTE REPLY stores the route starting from itself to the destination. When the source receives the ROUTE REPLY, it caches the source route.

Module 2: Message Transfer

Message transfer takes place when the sender node sends a message to the destination node after the path has been selected and the status of the destination node is true. The receiver node receives the complete message and then sends an acknowledgement to the sender node through the router nodes over which the message was received.

Module 3: Route Maintenance

In Route Maintenance, the node forwarding a packet is responsible for confirming that the packet has been successfully received by the next hop. If no acknowledgement is received after the maximum number of retransmissions, the forwarding node sends a ROUTE ERROR to the source, indicating the broken link. Each node forwarding the ROUTE ERROR removes from its cache the routes containing the broken link.

Module 4: Cache Updating

When a node detects a link failure, our goal is to notify all reachable nodes that have cached that link to update their caches. To achieve this goal, the node detecting a link failure needs to know which nodes have cached the broken link and needs to notify such nodes efficiently. Our solution is to keep track of topology propagation state in a distributed manner.


The algorithm starts either when a node detects a link failure or when it receives a notification. In a cache table, a node not only stores routes but also maintains two types of information for each route: (1) how well routing information is synchronized among nodes on a route; (2) which neighbor has learned which links through a ROUTE REPLY. Each node gathers such information during route discoveries and data transmission, without introducing additional overhead. The two types of information are sufficient, because each node knows for each cached link which neighbors have that link in their caches.
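The Route Request and Route Maintenance modules described above can be illustrated with a small in-memory simulation. It is a simplified sketch under stated assumptions rather than the project's code: the names RouteDiscoverySketch, discover, removeRoutesWithLink and demoTopology are hypothetical, the network is a static map of neighbors, the ROUTE REQUEST flood is modelled as a breadth-first search in which each node appends its own address to the source route, and replies from intermediate caches are left out.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.Deque;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

public class RouteDiscoverySketch {

    // Floods a ROUTE REQUEST from 'source'. Each pending request carries the
    // source route accumulated so far; a node rebroadcasts a request only the
    // first time it sees it. Returns the source route that reaches the
    // destination first, or an empty list if the destination is unreachable.
    static List<String> discover(Map<String, List<String>> neighbors,
                                 String source, String destination) {
        Deque<List<String>> pending = new ArrayDeque<>();
        Set<String> seen = new HashSet<>();
        pending.add(Collections.singletonList(source));
        seen.add(source);
        while (!pending.isEmpty()) {
            List<String> sourceRoute = pending.poll();
            String current = sourceRoute.get(sourceRoute.size() - 1);
            if (current.equals(destination)) {
                return sourceRoute; // the destination would send this back in a ROUTE REPLY
            }
            for (String next : neighbors.getOrDefault(current, Collections.emptyList())) {
                if (seen.add(next)) {
                    List<String> extended = new ArrayList<>(sourceRoute);
                    extended.add(next); // the receiving node appends its own address
                    pending.add(extended);
                }
            }
        }
        return Collections.emptyList();
    }

    // Route Maintenance: on a ROUTE ERROR for the link from -> to, a node
    // removes every cached route that contains that link.
    static void removeRoutesWithLink(List<List<String>> cache, String from, String to) {
        cache.removeIf(route -> {
            for (int i = 0; i + 1 < route.size(); i++) {
                if (route.get(i).equals(from) && route.get(i + 1).equals(to)) {
                    return true;
                }
            }
            return false;
        });
    }

    // A small example topology (hypothetical): A-B, B-C, A-D, D-E, E-C.
    static Map<String, List<String>> demoTopology() {
        Map<String, List<String>> neighbors = new HashMap<>();
        neighbors.put("A", Arrays.asList("B", "D"));
        neighbors.put("B", Arrays.asList("A", "C"));
        neighbors.put("C", Arrays.asList("B", "E"));
        neighbors.put("D", Arrays.asList("A", "E"));
        neighbors.put("E", Arrays.asList("D", "C"));
        return neighbors;
    }

    public static void main(String[] args) {
        System.out.println("Route from A to C: " + discover(demoTopology(), "A", "C"));
    }
}
```

In this simulation the cached routes are plain node lists; in the design above each such route would additionally carry the DataPackets and ReplyRecord fields of the cache table.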

5.2 Context Diagram

5.2.1 Route Request


5.2.2 Route Maintenance

5.2.3 Message Transfer


5.2.4 Cache Update

5.3 Data Flow Diagram

A data flow diagram (DFD) is a graphic representation of the "flow" of data through business functions or processes. More generally, a data flow diagram is used for the visualization of data processing. It illustrates the processes, data stores, external entities and data flows in a business or other system, and the relationships between them.


5.3.1 Route Request


5.3.2 Message Transfer


5.3.3 Route Maintenance


5.3.4 Cache Update


5.4 Architectural Design

Architectural design defines the overall logical structure of the project, which is divided into processing modules and conceptual data structures.


5.5 Use Case

Use case diagram (figure not reproduced). Actions shown: START AGENT, BROWSE, SEND, CLEAR, EXIT.

5.6 Sequence Diagram


5.7 Class diagram


CHAPTER 6 IMPLEMENTATION

Implementation is the stage of the project in which the theoretical design is turned into a working system and users gain confidence that the new system will work efficiently and effectively. It involves careful planning, investigation of the current system and its constraints on implementation, design of methods to achieve the changeover, and an evaluation of changeover methods. Apart from planning, the major tasks in preparing for implementation are the education and training of users. The more complex the system being implemented, the more involved the system analysis and design effort required just for implementation. An implementation coordination committee, based on the policies of the individual organization, has been appointed.

The implementation process begins with preparing a plan for the implementation of the system. According to this plan, the activities are carried out, discussions are held regarding the equipment and resources, and any additional equipment needed to implement the new system is acquired.

Implementation is the final and most critical phase in achieving a successful new system and in giving the users confidence that the new system will work and be effective. The system can be implemented only after thorough testing is done and it is found to work according to the specification. This method also offers the greatest security, since the old system can take over if errors are found or if the new system is unable to handle certain types of transactions.

6.1 User Training

After the system is implemented successfully, training of the users is one of the most important subtasks of the developer. For this purpose, user manuals are prepared and handed over to the users so that they can operate the developed system. Thus the users are trained to operate the developed system successfully in future. In order to put the new application system into use, the following activities were taken care of:

- Preparation of user and system documentation
- Conducting user training with demos and hands-on sessions
- Test run for some period to ensure a smooth switchover to the system

The users are trained to use the newly developed functions. User manuals describing the procedures for using the functions listed on the menu are circulated to all the users. It is confirmed that the system is implemented according to user needs and expectations.

6.2 Security

- The Administrator checks the path information and status information before the data transfer.
- Messages that are sent receive acknowledgements automatically, provided there is no link failure, after the message has been received by the Receiver Node.
- Failure of a link automatically triggers an update of the cache tables of the other nodes in the network.

We used two optimizations for our algorithm. First, to reduce duplicate notifications to a node, we attach a reference list to each notification. The node detecting a link failure is the root, initializing the list to be its notification list. Each child notifies only the nodes not in the list and updates the list by adding the nodes in its notification list. The resulting notification graph will be close to a tree. Second, we piggyback a notification on the data packet that encounters a broken link if that packet can be salvaged. When using the algorithm, we also use a small list of broken links, which is similar to the negative cache proposed in prior work, to prevent a node from being re-polluted by in-flight stale routes.
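The first optimization, the reference list attached to each notification, can be sketched in a few lines. The sketch is illustrative only and rests on assumptions: the names ReferenceListSketch, initialReferenceList and selectTargets are hypothetical, nodes are identified by strings, and the reference list is modelled as a set that travels with the notification.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;

public class ReferenceListSketch {

    // The root (the node that detected the link failure) initializes the
    // reference list to its own notification list and notifies all of it.
    static Set<String> initialReferenceList(List<String> rootNotificationList) {
        return new LinkedHashSet<>(rootNotificationList);
    }

    // A child node notifies only the nodes that are not yet in the reference
    // list, then adds its whole notification list to the reference list.
    static List<String> selectTargets(List<String> notificationList, Set<String> referenceList) {
        List<String> targets = new ArrayList<>();
        for (String node : notificationList) {
            if (!referenceList.contains(node)) {
                targets.add(node);
            }
        }
        referenceList.addAll(notificationList);
        return targets;
    }

    public static void main(String[] args) {
        // The root's notification list is B and C.
        Set<String> referenceList = initialReferenceList(Arrays.asList("B", "C"));
        // Child B would notify C and D, but C is already covered by the root.
        List<String> targets = selectTargets(Arrays.asList("C", "D"), referenceList);
        System.out.println("B notifies " + targets + ", reference list is now " + referenceList);
    }
}
```

Because every node filters its targets against the list it received, a node that several others would notify is normally reached only once, which is why the notification graph stays close to a tree.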


CHAPTER 7 SYSTEM TESTING

The purpose of testing is to discover errors. Testing is the process of trying to discover every conceivable fault or weakness in a work product. It provides a way to check the functionality of components, sub-assemblies, assemblies and/or a finished product. It is the process of exercising software with the intent of ensuring that the software system meets its requirements and user expectations and does not fail in an unacceptable manner. There are various types of tests. Each test type addresses a specific testing requirement.

7.1 Unit testing

Unit testing involves the design of test cases that validate that the internal program logic is functioning properly and that program inputs produce valid outputs. All decision branches and internal code flow should be validated. It is the testing of individual software units of the application. It is done after the completion of an individual unit, before integration. This is structural testing, which relies on knowledge of the unit's construction and is invasive. Unit tests perform basic tests at component level and test a specific business process, application, and/or system configuration. Unit tests ensure that each unique path of a business process performs accurately to the documented specifications and contains clearly defined inputs and expected results.

7.2 Functional test

Functional tests provide systematic demonstrations that functions tested are available as specified by the business and technical requirements, system documentation, and user manuals. Functional testing is centered on the following items:

Valid Input : identified classes of valid input must be accepted.
Invalid Input : identified classes of invalid input must be rejected.
Functions : identified functions must be exercised.
Output : identified classes of application outputs must be exercised.
Systems/Procedures : interfacing systems or procedures must be invoked.


7.3 System Test

System testing ensures that the entire integrated software system meets requirements. It tests a configuration to ensure known and predictable results. An example of system testing is the configuration oriented system integration test. System testing is based on process descriptions and flows, emphasizing pre-driven process links and integration points.

7.4 Performance Test

The performance test ensures that output is produced within the time limits. It covers the time the system takes to compile, to respond to users, and to process the requests sent to it to retrieve results.

7.5 Integration Testing

Software integration testing is the incremental integration testing of two or more integrated software components on a single platform, intended to expose failures caused by interface defects. The task of the integration test is to check that components or software applications (e.g. components in a software system or, one step up, software applications at the company level) interact without error.

Integration testing for Server Synchronization:

- Testing the IP address used to communicate with the other nodes
- Checking the route status in the cache table after the status information is received by the node
- Verifying that the messages are displayed through to the end of the application

7.6 Acceptance Testing

User Acceptance Testing is a critical phase of any project and requires significant participation by the end user. It also ensures that the system meets the functional requirements.


Acceptance testing for Data Synchronization:

- The acknowledgements are received by the Sender Node after the packets are received by the Destination Node.
- The route add operation is performed only when a route request requires it.
- The node status information is updated automatically in the cache update process.


CHAPTER 8 CONCLUSION

In this project, we presented the first work that proactively updates route caches in an adaptive manner. We defined a new cache structure called a cache table to maintain the information necessary for cache updates. We presented a distributed cache update algorithm that uses the local information kept by each node to notify all reachable nodes that have cached a broken link. The algorithm enables DSR to adapt quickly to topology changes. We show that, under non-promiscuous mode, the algorithm outperforms DSR with path caches by up to 19% and DSR with Link-MaxLife by up to 41% in packet delivery ratio. It reduces normalized routing overhead by up to 35% for DSR with path caches. Under promiscuous mode, the algorithm improves packet delivery ratio by up to 7% for both caching strategies, and reduces delivery latency by up to 27% for DSR with path caches and 49% for DSR with Link-MaxLife. The improvement demonstrates the benefits of the algorithm. Although the results were obtained under a certain type of mobility and traffic models, we believe that the results apply to other models, as the algorithm quickly removes stale routes no matter how nodes move and which traffic model is used. The central challenge to routing protocols is how to efficiently handle topology changes. Proactive protocols periodically exchange topology updates among all nodes, incurring significant overhead. On-demand protocols avoid such overhead but face the problem of cache updating. We show that proactive cache updating is more efficient than adaptive timeout mechanisms. Our work combines the advantages of proactive and on-demand protocols: on-demand link failure detection and proactive cache updating. Our solution is applicable to other on-demand routing protocols. We conclude that proactive cache updating is key to the adaptation of on-demand routing protocols to mobility.


CHAPTER 9 FUTURE ENHANCEMENTS

As with other applications, there is certainly scope for improvement in this application too. New modules are in the pipeline to increase the compatibility of the project. Once these improvements have been made, the majority of the features that make an application an excellent one will be in place, and its usage will become wider and more extensive. The following are some of the enhancements that would make our project more effective and efficient in the future:

- Implement Non-Adaptive Routing or Dynamic Routing during message transfer.
- Send the messages in encrypted format so that network hackers are not able to interfere during transmission.
- Establish a key agreement process between the source and the destination nodes.
- Implement bidirectional route information between the source and the destination nodes.



