Huffman Coding Trees

In partial fulfilment of the course ADVANCED DATA STRUCTURES in Master in Information Technology

Submitted by: FILIPINA F. ATABAY ULYSSES MARION G. PALOAY JEA PULMANO CRISTY BANGUG GREGORY BANGUG

Submitted to: MS. ZHELLA ANNE NISPEROS Professor


Definition

Huffman coding is an entropy encoding algorithm used for lossless data compression. The term refers to the use of a variable-length code table for encoding a source symbol (such as a character in a file), where the code table has been derived in a particular way from the estimated probability of occurrence of each possible value of the source symbol. It was developed by David A. Huffman while he was a Ph.D. student at MIT, and published in the 1952 paper "A Method for the Construction of Minimum-Redundancy Codes".

Discussion

We now introduce an algorithm that takes as input the frequencies (which are the probabilities of occurrences) of symbols in a string and produces as output a prefix code that encodes the string using the fewest possible bits, among all possible binary prefix codes for these symbols. This algorithm, known as Huffman coding, was developed by David Huffman in a term paper he wrote in 1951 while a graduate student at MIT. (Note that this algorithm assumes that we already know how many times each symbol occurs in the string, so we can compute the frequency of each symbol by dividing the number of times this symbol occurs by the length of the string.)

Huffman coding is a fundamental algorithm in data compression, the subject devoted to reducing the number of bits required to represent information. Huffman coding is extensively used to compress bit strings representing text, and it also plays an important role in compressing audio and image files.

Algorithm 1 presents the Huffman coding algorithm. Given symbols and their frequencies, our goal is to construct a rooted binary tree where the symbols are the labels of the leaves. The algorithm begins with a forest of trees each consisting of one vertex, where each vertex has a symbol as its label and where the weight of this vertex equals the frequency of the symbol that is its label.
At each step, we combine the two trees having the least total weight into a single tree by introducing a new root and placing the tree with larger weight as its left subtree and the tree with smaller weight as its right subtree. Furthermore, we assign the sum of the weights of the two subtrees of this tree as the total weight of the tree. (Although procedures for breaking ties by choosing between trees with equal weights can be specified, we will not specify such procedures here.) The algorithm is finished when it has constructed a tree, that is, when the forest is reduced to a single tree.

ALGORITHM 1 Huffman Coding.

procedure Huffman(C: symbols a_i with frequencies w_i, i = 1, . . . , n)
F := forest of n rooted trees, each consisting of the single vertex a_i and assigned weight w_i
while F is not a tree
    Replace the rooted trees T and T' of least weights from F, with w(T) >= w(T'), with a tree having a new root that has T as its left subtree and T' as its right subtree.
    Label the new edge to T with 0 and the new edge to T' with 1.
    Assign w(T) + w(T') as the weight of the new tree.
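As an illustration, Algorithm 1 might be sketched in Python roughly as follows. This is a minimal sketch, not a definitive implementation: the function name `huffman_codes` is hypothetical, symbols are assumed to be strings, and ties between equal weights are broken by insertion order, which is an assumption because the text deliberately leaves tie-breaking unspecified.

```python
def huffman_codes(freqs):
    """Return {symbol: bit string} built in the manner of Algorithm 1.

    freqs maps each symbol (assumed to be a string) to its weight.
    """
    if not freqs:
        return {}
    # Forest entries are (weight, order, tree). A tree is either a symbol
    # (a leaf) or a (left, right) pair. 'order' only breaks ties (assumption).
    forest = [(w, i, sym) for i, (sym, w) in enumerate(freqs.items())]
    order = len(forest)
    while len(forest) > 1:
        forest.sort(key=lambda entry: (entry[0], entry[1]))
        smaller, larger = forest[0], forest[1]
        # The tree with larger weight becomes the left subtree (edge 0),
        # the tree with smaller weight the right subtree (edge 1).
        merged = (larger[0] + smaller[0], order, (larger[2], smaller[2]))
        order += 1
        forest = forest[2:] + [merged]

    codes = {}

    def walk(tree, prefix):
        if isinstance(tree, tuple):
            walk(tree[0], prefix + "0")
            walk(tree[1], prefix + "1")
        else:
            # A lone symbol still needs at least one bit.
            codes[tree] = prefix or "0"

    walk(forest[0][2], "")
    return codes


if __name__ == "__main__":
    example = {"A": 0.08, "B": 0.10, "C": 0.12, "D": 0.15, "E": 0.20, "F": 0.35}
    for symbol, code in sorted(huffman_codes(example).items()):
        print(symbol, code)
```

Sorting the forest on every pass keeps the sketch close to the wording of the algorithm; a priority queue would do the same job more efficiently for large alphabets.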


Example 1 illustrates how Algorithm 1 is used to encode a set of six symbols.

Example 1 Use Huffman coding to encode the following symbols with the frequencies listed: A: 0.08, B: 0.10, C: 0.12, D: 0.15, E: 0.20, F: 0.35. What is the average number of bits used to encode a character?

Solution: Figure 1 displays the steps used to encode these symbols. The encoding produced encodes A by 111, B by 110, C by 011, D by 010, E by 10, and F by 00. The average number of bits used to encode a symbol using this encoding is

3 × 0.08 + 3 × 0.10 + 3 × 0.12 + 3 × 0.15 + 2 × 0.20 + 2 × 0.35 = 2.45.

Note that Huffman coding is a greedy algorithm. Replacing the two subtrees with the smallest weight at each step leads to an optimal code in the sense that no binary prefix code for these symbols can encode these symbols using fewer bits.
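The arithmetic in this solution can be checked with a few lines of Python. The sketch below takes the code table and frequencies from Example 1 as given; the helper names `average_bits` and `is_prefix_free` are hypothetical and exist only for this illustration.

```python
# Code table and frequencies as stated in Example 1.
CODES = {"A": "111", "B": "110", "C": "011", "D": "010", "E": "10", "F": "00"}
FREQS = {"A": 0.08, "B": 0.10, "C": 0.12, "D": 0.15, "E": 0.20, "F": 0.35}


def average_bits(codes, freqs):
    """Weighted average code length: sum of frequency times code length."""
    return sum(freqs[s] * len(codes[s]) for s in codes)


def is_prefix_free(codes):
    """True if no code word is a prefix of another code word."""
    words = sorted(codes.values())
    # After sorting, any prefix relation shows up between neighbours.
    return all(not b.startswith(a) for a, b in zip(words, words[1:]))


if __name__ == "__main__":
    print(round(average_bits(CODES, FREQS), 2))  # 2.45
    print(is_prefix_free(CODES))                 # True
```

For comparison, a fixed-length code for six symbols needs 3 bits per symbol, so this encoding saves a little over half a bit per symbol on average.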


Figure 1 Huffman Coding of Symbols in Example 1 (panels: Initial Forest, Step 1, Step 2, Step 3, Step 4, Step 5; the trees created in Steps 1 to 5 have weights 0.18, 0.27, 0.38, 0.62, and 1.00, respectively)


Example 2: Let's say you have a set of numbers and their frequency of use and want to create a Huffman encoding for them:

    FREQUENCY    VALUE
    ---------    -----
        5          1
        7          2
       10          3
       15          4
       20          5
       45          6

Creating a Huffman tree is simple. Sort this list by frequency and make the two lowest elements into leaves, creating a parent node with a frequency that is the sum of the two lower elements' frequencies:

        12:*
        /  \
      5:1   7:2

The two elements are removed from the list and the new parent node, with frequency 12, is inserted into the list by frequency. So now the list, sorted by frequency, is:

    10:3
    12:*
    15:4
    20:5
    45:6

You then repeat the loop, combining the two lowest elements. This results in:

        22:*
        /   \
     10:3   12:*
            /   \
          5:1   7:2

and the list is now:

    15:4
    20:5
    22:*
    45:6

You repeat until there is only one element left in the list.

        35:*
        /   \
     15:4   20:5

    22:*
    35:*
    45:6

              57:*
          ___/    \___
         /            \
       22:*          35:*
       /   \         /   \
    10:3   12:*   15:4   20:5
           /   \
         5:1   7:2

    45:6
    57:*

                         102:*
                 _______/     \__
                /                \
              57:*               45:6
          ___/    \___
         /            \
       22:*          35:*
       /   \         /   \
    10:3   12:*   15:4   20:5
           /   \
         5:1   7:2
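The sort-and-merge loop used in this example can be traced with a short Python sketch. It tracks only the frequencies in the list, not the tree shapes, and the function name `merge_trace` is hypothetical.

```python
def merge_trace(frequencies):
    """Return the sorted frequency list after each merge of the two lowest entries."""
    items = sorted(frequencies)
    states = [list(items)]
    while len(items) > 1:
        lowest, second = items[0], items[1]
        # Remove the two lowest elements and insert their sum, keeping the list sorted.
        items = sorted(items[2:] + [lowest + second])
        states.append(list(items))
    return states


if __name__ == "__main__":
    for state in merge_trace([5, 7, 10, 15, 20, 45]):
        print(state)
    # [5, 7, 10, 15, 20, 45]
    # [10, 12, 15, 20, 45]
    # [15, 20, 22, 45]
    # [22, 35, 45]
    # [45, 57]
    # [102]
```

Re-sorting after every merge mirrors the description in the text; a binary heap would avoid the repeated sorts when the list is long.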

Now that the list is just one element containing 102:*, you are done. This element becomes the root of your binary Huffman tree.

To generate a Huffman code you traverse the tree to the value you want, outputting a 0 every time you take a left-hand branch and a 1 every time you take a right-hand branch. (Normally you traverse the tree backwards from the value you want and build the binary Huffman encoding string backwards as well, since the first bit must start from the top.)

Example: The encoding for the value 4 (15:4) is 010. The encoding for the value 6 (45:6) is 1.

Decoding a Huffman encoding is just as easy: as you read bits in from your input stream you traverse the tree beginning at the root, taking the left-hand path if you read a 0 and the right-hand path if you read a 1. When you hit a leaf, you have found the value.

Generally, any Huffman compression scheme also requires the Huffman tree to be written out as part of the file; otherwise the reader cannot decode the data. For a static tree, you don't have to do this since the tree is known and fixed.

The easiest way to output the Huffman tree itself is to start at the root and dump first the left-hand side, then the right-hand side. For each internal node you output a 0; for each leaf you output a 1 followed by N bits representing the value.

For example, the partial tree in my last example above (the subtree rooted at 22:*), using 4 bits per value, can be represented as follows:


    000100   fixed 6-bit field indicating how many bits the value for each leaf is stored in. In this case, 4.
    0        root is a node
             left-hand side is
    10011    a leaf with value 3
             right-hand side is
    0        another node
             recurse down, left-hand side is
    10001    a leaf with value 1
             right-hand side is
    10010    a leaf with value 2
             recursion return

So the partial tree can be represented with 00010001001101000110010, or 23 bits. Not bad!
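The traversal, decoding, and tree-dump steps described for Example 2 can be sketched together in Python. This is a sketch under stated assumptions: the tree is written by hand as nested tuples (an internal node is a `(left, right)` pair, a leaf is an integer value), and the names `TREE`, `PARTIAL`, `codes_from_tree`, `decode`, and `serialize` are hypothetical.

```python
# Example 2 tree, written by hand: (left, right) pairs are nodes, ints are leaves.
PARTIAL = (3, (1, 2))            # the subtree rooted at 22:*
TREE = ((PARTIAL, (4, 5)), 6)    # the full tree rooted at 102:*


def codes_from_tree(tree, prefix=""):
    """Map each leaf value to its code: 0 for a left branch, 1 for a right branch."""
    if isinstance(tree, tuple):
        codes = codes_from_tree(tree[0], prefix + "0")
        codes.update(codes_from_tree(tree[1], prefix + "1"))
        return codes
    return {tree: prefix}


def decode(bits, tree):
    """Walk from the root for each bit; emit a value whenever a leaf is reached."""
    values, node = [], tree
    for bit in bits:
        node = node[0] if bit == "0" else node[1]
        if not isinstance(node, tuple):
            values.append(node)
            node = tree
    return values


def serialize(tree, value_bits):
    """6-bit header with the value width, then a left-first dump of the tree."""
    def dump(node):
        if isinstance(node, tuple):
            return "0" + dump(node[0]) + dump(node[1])   # internal node
        return "1" + format(node, f"0{value_bits}b")      # leaf plus its value
    return format(value_bits, "06b") + dump(tree)


if __name__ == "__main__":
    codes = codes_from_tree(TREE)
    print(codes[4], codes[6])            # 010 1
    print(decode("0101", TREE))          # [4, 6]
    print(serialize(PARTIAL, 4))         # 00010001001101000110010
```

The dump needs no end marker because every internal node has exactly two children, so a reader can rebuild the tree by recursing in the same left-first order.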


References:

http://en.wikipedia.org/wiki/Huffman_coding

http://www.siggraph.org/education/materials/HyperGraph/video/mpeg/mpegfaq/huffman_tutorial.html

http://michael.dipperstein.com/Huffman/

Rosen, Kenneth H. (2011). Discrete Mathematics and Its Applications (7th ed.). New York: McGraw-Hill.

You might also like

- Shoe Dog: A Memoir by the Creator of NikeFrom EverandShoe Dog: A Memoir by the Creator of NikeRating: 4.5 out of 5 stars4.5/5 (537)

- Computer Programming (Oracle Database) 11 - Q3 - PT1Document2 pagesComputer Programming (Oracle Database) 11 - Q3 - PT1razee_No ratings yet

- Grit: The Power of Passion and PerseveranceFrom EverandGrit: The Power of Passion and PerseveranceRating: 4 out of 5 stars4/5 (587)

- Criteria Exceeded Expectations Meets Expectations Missed Expectations Did Not Meet ExpectationsDocument1 pageCriteria Exceeded Expectations Meets Expectations Missed Expectations Did Not Meet Expectationsrazee_No ratings yet

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceFrom EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceRating: 4 out of 5 stars4/5 (890)

- Computer Programming (Oracle Database) 11 - Q3 - ST1Document2 pagesComputer Programming (Oracle Database) 11 - Q3 - ST1razee_No ratings yet

- The Yellow House: A Memoir (2019 National Book Award Winner)From EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Rating: 4 out of 5 stars4/5 (98)

- Computer Programming (Oracle Database) 11 - Q3 - PT3Document2 pagesComputer Programming (Oracle Database) 11 - Q3 - PT3razee_No ratings yet

- The Little Book of Hygge: Danish Secrets to Happy LivingFrom EverandThe Little Book of Hygge: Danish Secrets to Happy LivingRating: 3.5 out of 5 stars3.5/5 (399)

- Rubric 1Document1 pageRubric 1razee_No ratings yet

- On Fire: The (Burning) Case for a Green New DealFrom EverandOn Fire: The (Burning) Case for a Green New DealRating: 4 out of 5 stars4/5 (73)

- LoremDocument2 pagesLoremrazee_No ratings yet

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeFrom EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeRating: 4 out of 5 stars4/5 (5794)

- Computer Programming (Oracle Database) 11 - Q3 - PT3Document2 pagesComputer Programming (Oracle Database) 11 - Q3 - PT3razee_No ratings yet

- Never Split the Difference: Negotiating As If Your Life Depended On ItFrom EverandNever Split the Difference: Negotiating As If Your Life Depended On ItRating: 4.5 out of 5 stars4.5/5 (838)

- Computer Programming (Oracle Database) 11 - Q3 - PT4Document1 pageComputer Programming (Oracle Database) 11 - Q3 - PT4razee_No ratings yet

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureFrom EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureRating: 4.5 out of 5 stars4.5/5 (474)

- Computer Programming (Oracle Database) 11 - Q3 - PT3Document2 pagesComputer Programming (Oracle Database) 11 - Q3 - PT3razee_No ratings yet

- Powerpoint2016 Charts PracticeDocument4 pagesPowerpoint2016 Charts Practicerazee_No ratings yet

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryFrom EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryRating: 3.5 out of 5 stars3.5/5 (231)

- PL/SQL Activities Activity 1Document1 pagePL/SQL Activities Activity 1razee_No ratings yet

- Rubric 1Document1 pageRubric 1razee_No ratings yet

- The Emperor of All Maladies: A Biography of CancerFrom EverandThe Emperor of All Maladies: A Biography of CancerRating: 4.5 out of 5 stars4.5/5 (271)

- Department of Education: Republic of The PhilippinesDocument2 pagesDepartment of Education: Republic of The Philippinesrazee_No ratings yet

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreFrom EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreRating: 4 out of 5 stars4/5 (1090)

- Diagnostic Test - PrintDocument8 pagesDiagnostic Test - Printrazee_No ratings yet

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyFrom EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyRating: 3.5 out of 5 stars3.5/5 (2219)

- 1 DepED SHS Voucher ProgramDocument14 pages1 DepED SHS Voucher ProgramZenda Marie Facinal SabinayNo ratings yet

- Team of Rivals: The Political Genius of Abraham LincolnFrom EverandTeam of Rivals: The Political Genius of Abraham LincolnRating: 4.5 out of 5 stars4.5/5 (234)

- CTL Classroom Assessment Tools GuideDocument70 pagesCTL Classroom Assessment Tools GuidemartinamalditaNo ratings yet

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersFrom EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersRating: 4.5 out of 5 stars4.5/5 (344)

- DLL TemplateDocument3 pagesDLL Templaterazee_No ratings yet

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaFrom EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaRating: 4.5 out of 5 stars4.5/5 (265)

- Digital RubricDocument2 pagesDigital Rubricrazee_No ratings yet

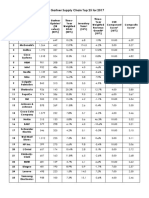

- The Gartner Supply Chain Top 25 For 2017Document1 pageThe Gartner Supply Chain Top 25 For 2017razee_No ratings yet

- The Gartner Supply Chain Top 25 For 2017Document1 pageThe Gartner Supply Chain Top 25 For 2017razee_No ratings yet

- Assessing student performanceDocument2 pagesAssessing student performancerazee_No ratings yet

- Criteria Exceeded Expectations Meets Expectations Missed Expectations Did Not Meet ExpectationsDocument1 pageCriteria Exceeded Expectations Meets Expectations Missed Expectations Did Not Meet Expectationsrazee_No ratings yet

- DBMS Project RubricDocument1 pageDBMS Project Rubricrazee_100% (1)

- The Unwinding: An Inner History of the New AmericaFrom EverandThe Unwinding: An Inner History of the New AmericaRating: 4 out of 5 stars4/5 (45)

- The Gartner Supply Chain Top 25 For 2017Document1 pageThe Gartner Supply Chain Top 25 For 2017razee_No ratings yet

- Set Theory 2Document3 pagesSet Theory 2razee_No ratings yet

- User Interface Design Guidelines - Nielsen & MolichDocument8 pagesUser Interface Design Guidelines - Nielsen & Molichrazee_No ratings yet

- User-Centered Design Process Map - UsabilityDocument4 pagesUser-Centered Design Process Map - Usabilityrazee_No ratings yet

- OpenECBCheck Quality Criteria 2012Document20 pagesOpenECBCheck Quality Criteria 2012razee_No ratings yet

- DSLR Camera Parts GuideDocument15 pagesDSLR Camera Parts Guiderazee_No ratings yet

- Basic Electronics Project Documentation TemplateDocument3 pagesBasic Electronics Project Documentation Templaterazee_No ratings yet

- Cambridge Assessment International Education: English Language 9093/12 March 2019Document4 pagesCambridge Assessment International Education: English Language 9093/12 March 2019Nadia ImranNo ratings yet

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)From EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Rating: 4.5 out of 5 stars4.5/5 (119)

- Onset and Rime PlannerDocument15 pagesOnset and Rime Plannerapi-284217383No ratings yet

- Pronoun choicesDocument4 pagesPronoun choicesLaura Cisterne MatallanaNo ratings yet

- Teaching Unit Project - Shane 1Document12 pagesTeaching Unit Project - Shane 1api-635141433No ratings yet

- Redfish Ref Guide 2.0Document30 pagesRedfish Ref Guide 2.0MontaneNo ratings yet

- 1A Unit2 Part2Document46 pages1A Unit2 Part2Krystal SorianoNo ratings yet

- Telefol DictionaryDocument395 pagesTelefol DictionaryAlef BetNo ratings yet

- WaymakerDocument3 pagesWaymakerMarilie Joy Aguipo-AlfaroNo ratings yet

- Thompson. Elliptical Integrals.Document21 pagesThompson. Elliptical Integrals.MarcomexicoNo ratings yet

- MacbethDocument16 pagesMacbethLisa RoyNo ratings yet

- Her Body and Other Parties: StoriesFrom EverandHer Body and Other Parties: StoriesRating: 4 out of 5 stars4/5 (821)

- UML Activity DiagramDocument13 pagesUML Activity DiagramMuqaddas RasheedNo ratings yet

- Esperanza CH 1-5 TestDocument4 pagesEsperanza CH 1-5 TestAnonymous Zue9MP100% (2)

- KUKA OfficeLite 87 enDocument48 pagesKUKA OfficeLite 87 enMatyas TakacsNo ratings yet

- Voice Based Web BrowserDocument3 pagesVoice Based Web BrowserSrini VasNo ratings yet

- Course: CCNA-Cisco Certified Network Associate: Exam Code: CCNA 640-802 Duration: 70 HoursDocument7 pagesCourse: CCNA-Cisco Certified Network Associate: Exam Code: CCNA 640-802 Duration: 70 HoursVasanthbabu Natarajan NNo ratings yet

- Coherence Cohesion 1 1Document36 pagesCoherence Cohesion 1 1Shaine MalunjaoNo ratings yet

- Grammar Test 8 – Parts of speech determiners article possessive adjective demonstrative adjective quantifiersDocument2 pagesGrammar Test 8 – Parts of speech determiners article possessive adjective demonstrative adjective quantifiersThế Trung PhanNo ratings yet

- Manage inbound and outbound routesDocument25 pagesManage inbound and outbound routesJavier FreiriaNo ratings yet

- Purposive Communication Exam PrelimDocument3 pagesPurposive Communication Exam PrelimJann Gulariza90% (10)

- Alinco DJ g5 Service ManualDocument66 pagesAlinco DJ g5 Service ManualMatthewBehnkeNo ratings yet

- RossiniDocument158 pagesRossiniJuan Manuel Escoto LopezNo ratings yet

- Hiebert 1peter2 Pt1 BsDocument14 pagesHiebert 1peter2 Pt1 BsAnonymous Ipt7DRCRDNo ratings yet

- Using Hermeneutic PhenomelogyDocument27 pagesUsing Hermeneutic PhenomelogykadunduliNo ratings yet

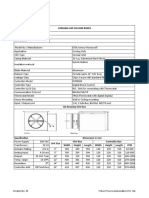

- VAV Honeywell Tech SubmittalDocument1 pageVAV Honeywell Tech SubmittalMUHAMMED SHAFEEQNo ratings yet

- Pronoun and AntecedentDocument7 pagesPronoun and AntecedentRegie NeryNo ratings yet

- DLP 1 English 10 Formalism and StructuralismDocument16 pagesDLP 1 English 10 Formalism and StructuralismAgapito100% (1)

- Babylonian and Hebrew Theophoric NamesDocument10 pagesBabylonian and Hebrew Theophoric NamesAmon SakifiNo ratings yet

- SCIE1120 Chemistry Term Paper Marking SchemeDocument5 pagesSCIE1120 Chemistry Term Paper Marking SchemeGary TsangNo ratings yet

- Jurnal Riset Sains Manajemen: Volume 2, Nomor 3, 2018Document9 pagesJurnal Riset Sains Manajemen: Volume 2, Nomor 3, 2018Deedii DdkrnwnNo ratings yet

- Resume - Suchita ChavanDocument2 pagesResume - Suchita ChavanAkshay JagtapNo ratings yet

- Generative AI: The Insights You Need from Harvard Business ReviewFrom EverandGenerative AI: The Insights You Need from Harvard Business ReviewRating: 4.5 out of 5 stars4.5/5 (2)

- ChatGPT Side Hustles 2024 - Unlock the Digital Goldmine and Get AI Working for You Fast with More Than 85 Side Hustle Ideas to Boost Passive Income, Create New Cash Flow, and Get Ahead of the CurveFrom EverandChatGPT Side Hustles 2024 - Unlock the Digital Goldmine and Get AI Working for You Fast with More Than 85 Side Hustle Ideas to Boost Passive Income, Create New Cash Flow, and Get Ahead of the CurveNo ratings yet

- Scary Smart: The Future of Artificial Intelligence and How You Can Save Our WorldFrom EverandScary Smart: The Future of Artificial Intelligence and How You Can Save Our WorldRating: 4.5 out of 5 stars4.5/5 (54)

- How to Make Money Online Using ChatGPT Prompts: Secrets Revealed for Unlocking Hidden Opportunities. Earn Full-Time Income Using ChatGPT with the Untold Potential of Conversational AI.From EverandHow to Make Money Online Using ChatGPT Prompts: Secrets Revealed for Unlocking Hidden Opportunities. Earn Full-Time Income Using ChatGPT with the Untold Potential of Conversational AI.No ratings yet

- CCNA: 3 in 1- Beginner's Guide+ Tips on Taking the Exam+ Simple and Effective Strategies to Learn About CCNA (Cisco Certified Network Associate) Routing And Switching CertificationFrom EverandCCNA: 3 in 1- Beginner's Guide+ Tips on Taking the Exam+ Simple and Effective Strategies to Learn About CCNA (Cisco Certified Network Associate) Routing And Switching CertificationNo ratings yet

- ChatGPT Money Machine 2024 - The Ultimate Chatbot Cheat Sheet to Go From Clueless Noob to Prompt Prodigy Fast! Complete AI Beginner’s Course to Catch the GPT Gold Rush Before It Leaves You BehindFrom EverandChatGPT Money Machine 2024 - The Ultimate Chatbot Cheat Sheet to Go From Clueless Noob to Prompt Prodigy Fast! Complete AI Beginner’s Course to Catch the GPT Gold Rush Before It Leaves You BehindNo ratings yet