
A Neural Network for the Travelling Salesman Problem with a Well Behaved Energy Function

Marco Budinich and Barbara Rosario

Dipartimento di Fisica & INFN, Via Valerio 2, 34127 Trieste, Italy

E-mail: mbh@trieste.infn.it

(Contributed paper to MANNA Conference, Oxford, July 3-7, 1995)

Abstract - We present and analyze a Self Organizing Feature Map (SOFM) for the NP-complete problem of the travelling salesman (TSP): finding the shortest closed path joining N cities. Since the SOFM has discrete input patterns (the cities of the TSP), one can examine its dynamics analytically. We show that, with a particular choice of the distance function for the net, the energy associated with the SOFM has its absolute minimum at the shortest TSP path. Numerical simulations confirm that this distance function improves performance. Curiously, the distance function with this property combines distances in the neuron space and in the weight space.

1 - Introduction

Solving difficult problems is a natural arena for a would-be new computational paradigm like that of neural networks. Hard problems give a sharper picture of the potential of these networks than the blurred one obtained from simpler problems.

Here we tackle the Travelling Salesman Problem (TSP, see [Lawler 1985], [Johnson 1990]) with a Self Organizing Feature Map (SOFM). This approach, proposed by [Angniol 1988] and [Favata 1991], started to produce respectable performance once the non-injective outputs produced by the SOFM were eliminated [Budinich 1995]. In this paper we further improve its performance by choosing a suitable distance function for the SOFM.

An interesting feature is that this net is open to analytical inspection down to a level that is not usually reachable [Ritter 1992]. This happens because the input patterns of the SOFM, namely the cities of the TSP, are discrete. As a consequence we can show that the energy function associated with SOFM learning has its absolute minimum at the shortest TSP path.

In what follows we start with a brief presentation of the working principles of this net and of its basic theoretical analysis (section 2). In section 3 we propose a new distance function for the network and show its theoretical advantages, while section 4 contains numerical results. The appendix contains the detailed description of the parameters needed to reproduce these results.


2 - Solving the TSP with self-organizing maps

The basic idea comes from the observation that in one dimension the exact solution to the TSP is trivial: always travel to the nearest unvisited city. Consequently, if we have a smart map of the TSP cities onto a set of cities distributed on a circle, we can easily find the shortest tour for these image cities, which also gives a path for the original cities. It is reasonable to conjecture that the better the distance relations are preserved, the better the approximate solution found will be.

Each neuron receives the coordinates $(x, y) = \vec q$ of the cities and thus has two weights: $(w_x, w_y) = \vec w$. In this view both patterns and neurons can be thought of as points in two-dimensional space. In response to input $\vec q$, the r-th neuron produces output $o_r = \vec q \cdot \vec w_r$. Figure 1 gives a schematic view of the net while figure 2 represents both patterns and neurons as points in the plane.

Figure 2: Weights modification in a learning step: neurons (small gray circles) are moved towards the pattern $\vec q_i$ (black circle) by an amount given by (1). The solid line represents the neuron ring. The shape of the deformation of the ring is given by the relative magnitude of the $\Delta \vec w$, which is in turn given by the distance function $h_{rs}$.

Learning follows the standard Kohonen algorithm [Kohonen 1984]: a city $\vec q_i$ is selected at random and proposed to the net; let s be the most responding neuron (i.e. the neuron nearest to $\vec q_i$); then all neuron weights are updated with the rule:

$$\Delta \vec w_r = \varepsilon \, h_{rs} \, (\vec q_i - \vec w_r) \qquad (1)$$

where $0 < \varepsilon < 1$ is the learning constant and $h_{rs}$ is the distance function.

In this way, the original TSP is reduced to the search for a good neighborhood-preserving map: here we build it via unsupervised learning of a SOFM.

The TSP we consider consists of N cities randomly distributed in the plane (actually in the (0,1) square). The net is formed by N neurons logically organized in a ring. The cities are the input patterns of the network and the (0,1) square is its input space.

Figure 1: Schematic net: not all connections from input neurons are drawn.

The distance function $h_{rs}$ determines the local deformations along the chain and controls the number of neurons affected by the adaptation step (1); thus it is crucial for the evolution of the network and for the whole learning process (see figure 2).

Step (1) is repeated several times while $\varepsilon$ and the width of the distance function are reduced at the same time. A common choice for $h_{rs}$ is a Gaussian-like function such as $h_{rs} = e^{-(d_{rs}/\sigma)^2}$, where $d_{rs}$ is the distance between neurons r and s (the number of steps between r and s) and $\sigma$ is a parameter which determines the number of neurons r such that $\Delta \vec w_r \neq 0$; during learning $\varepsilon, \sigma \to 0$ so that $\Delta \vec w_r \to 0$ and $h_{rs} \to \delta_{rs}$.
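As a minimal sketch of one learning step, assuming the Gaussian-like choice of $h_{rs}$ and a neuron distance counted in index steps along the ring, rule (1) might be coded as follows. The names `ring_distance` and `kohonen_step`, and the representation of weights as a list of (x, y) tuples, are illustrative assumptions and not part of the original description.

```python
import math


def ring_distance(r, s, n):
    """Number of steps between neurons r and s on a ring of n neurons."""
    d = abs(r - s)
    return min(d, n - d)


def kohonen_step(weights, city, eps, sigma):
    """Apply update rule (1) once, in place, for one presented city.

    weights: list of (x, y) tuples, one per neuron, in ring order.
    Returns the index s of the winning (nearest) neuron.
    """
    n = len(weights)
    # Winner s: the neuron whose weight vector is nearest to the city.
    s = min(range(n), key=lambda r: math.dist(weights[r], city))
    for r in range(n):
        # Gaussian-like distance function h_rs = exp(-(d_rs / sigma)^2).
        h = math.exp(-(ring_distance(r, s, n) / sigma) ** 2)
        wx, wy = weights[r]
        weights[r] = (wx + eps * h * (city[0] - wx),
                      wy + eps * h * (city[1] - wy))
    return s
```

Repeating this step over randomly chosen cities while shrinking `eps` and `sigma` gives the learning loop described in the text; the shrinking schedule itself is left out of this sketch.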

After learning, the network maps the two-dimensional input space onto the one-dimensional space given by the ring of neurons, and neighboring cities are mapped onto neighboring neurons. For each city its image is given by the nearest neuron. From the tour on the neuron ring one obtains the path for the original TSP¹.
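The read-out of a tour from a trained ring can be sketched as below. This is only an illustration: the function names are hypothetical, and cities that share the same nearest neuron are ordered by their index, which is an arbitrary tie-break and not the continuous-coordinate method of [Budinich 1995].

```python
import math


def tour_from_ring(weights, cities):
    """Return city indices ordered by the ring index of their nearest neuron."""
    def nearest_neuron(city):
        return min(range(len(weights)),
                   key=lambda r: math.dist(weights[r], city))

    images = [nearest_neuron(c) for c in cities]
    # Arbitrary tie-break (assumption): cities mapped to the same neuron
    # keep their original relative order.
    return sorted(range(len(cities)), key=lambda i: (images[i], i))


def tour_length(cities, order):
    """Length of the closed tour visiting the cities in the given order."""
    n = len(order)
    return sum(math.dist(cities[order[k]], cities[order[(k + 1) % n]])
               for k in range(n))
```

For example, with ring weights at the corners of the unit square and one city near each corner, `tour_from_ring` visits the cities in the order in which the ring passes their corners.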

The standard theoretical approach to these nets considers the expectation value $E[\Delta \vec w_r \mid \vec w_r]$ [Ritter 1992]. In general $E[\Delta \vec w_r \mid \vec w_r]$ cannot be treated analytically except when the input patterns have a discrete probability distribution, as happens for the TSP. In this case, $E[\Delta \vec w_r \mid \vec w_r]$ can be expressed as the gradient of an energy function, i.e. $E[\Delta \vec w_r \mid \vec w_r] = -\varepsilon \nabla_{\vec w_r} V(W)$, with

$$V(W) = \frac{1}{2N} \sum_{rs} h_{rs} \sum_{\vec q_i \in F_s} (\vec q_i - \vec w_r)^2 \qquad (2)$$

where $W = \{\vec w_r\}$ and the second sum is over the set of all the cities $\vec q_i$ having $\vec w_s$ as nearest neuron, i.e. the cities contained in $F_s$, the Voronoi cell of neuron $\vec w_s$. On average, $V(W)$ decreases during learning since $E[\Delta V \mid W] = -\varepsilon \sum_r \|\nabla_{\vec w_r} V\|^2$.

In essence, in this case there exists an energy function which describes the dynamics of the SOFM and which is minimized during learning; formally, the learning process is a descent along the gradient of $V(W)$.
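To make the energy (2) concrete, here is a small sketch that evaluates it directly from its definition. The function name `energy` and the convention of passing the distance function as a callable `h(r, s)` are assumptions made for illustration; N is taken as the number of cities, and the Voronoi cell of each city is found by a nearest-neuron search.

```python
import math


def energy(weights, cities, h):
    """Evaluate V(W) of equation (2).

    weights: list of (x, y) neuron weights in ring order.
    cities:  list of (x, y) city coordinates.
    h:       callable h(r, s) giving the distance function h_rs.
    """
    n_neurons = len(weights)
    total = 0.0
    for q in cities:
        # s is the neuron whose Voronoi cell F_s contains the city q.
        s = min(range(n_neurons), key=lambda r: math.dist(weights[r], q))
        for r in range(n_neurons):
            total += h(r, s) * math.dist(q, weights[r]) ** 2
    return total / (2 * len(cities))
```

With `h` equal to the Kronecker delta, only the term r = s survives and the energy reduces to half the mean squared distance between each city and its nearest neuron.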

Unfortunately $V(W)$ escapes analytical treatment until the end of the learning process, when some simplifications are applicable. Since at the end of learning $h_{rs} \to \delta_{rs}$, we can suppose $h_{rs}$ is significantly different from zero only for $r = s, s \pm 1$; in this case (2) becomes

$$V(W) \cong \frac{1}{2N} \sum_s \sum_{\vec q_i \in F_s} \left[ h_{s-1,s} (\vec q_i - \vec w_{s-1})^2 + h_{ss} (\vec q_i - \vec w_s)^2 + h_{s+1,s} (\vec q_i - \vec w_{s+1})^2 \right] \qquad (3)$$

¹ The main weakness of this algorithm is that, in about half of the cases, the map produced is not injective. The definition of a continuous coordinate along the neuron ring solves this problem, yielding a competitive algorithm [Budinich 1995].

In addition, simulations indicate that, at the end of learning, most neurons are selected by just one city, to which they get nearer and nearer. This means that $F_s$ contains just one city, let's call it $\vec q_{i(s)}$, and that $\vec w_s \approx \vec q_{i(s)}$; consequently the $h_{ss}$ term of (3) vanishes and

$$V(W) \cong \frac{1}{2N} \sum_s \left[ h_{s-1,s} (\vec q_{i(s)} - \vec q_{i(s-1)})^2 + h_{s+1,s} (\vec q_{i(s)} - \vec q_{i(s+1)})^2 \right] \qquad (4)$$

and assuming $h_{rs}$ symmetric, i.e. $h_{s-1,s} = h_{s+1,s} = h$, we get

$$V(W) = \frac{h}{2N} \sum_s \left[ (\vec q_{i(s)} - \vec q_{i(s-1)})^2 + (\vec q_{i(s)} - \vec q_{i(s+1)})^2 \right] = \frac{h}{N} L^2_{TSP}$$

where $L^2_{TSP}$ is the length of the tour of the TSP considering the squares of the distances between cities (each edge of the tour appears twice in the sum, which cancels the factor 1/2). Thus the Kohonen algorithm for TSP minimizes an energy function which, at the end of the learning process, is proportional to the sum of the squares of the distances. Numerical simulations confirm this result.

3 - A new distance function

Our hypothesis is that we can obtain better results for the TSP using a distance function $h_{rs}$ such that, at the end of the process, $V(W)$ is proportional to the simple length of the tour $L_{TSP}$, namely $V(W) \propto \sum_s |\vec q_{i(s)} - \vec q_{i(s-1)}| = L_{TSP}$, since, in general, minimizing $L^2_{TSP}$ is not equivalent to minimizing $L_{TSP}$.
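A small brute-force check illustrates why the two objectives can disagree. The four city coordinates below are a made-up example chosen for this sketch, not data from the paper; the code enumerates all tours starting at city 0 and picks the best one under each criterion.

```python
import itertools
import math

# Hypothetical 4-city instance (assumption, chosen for illustration only).
cities = [(0.0, 0.0), (1.0, 0.5), (2.0, 0.5), (3.0, 0.0)]


def edge_lengths(order):
    """Lengths of the edges of the closed tour visiting cities in `order`."""
    n = len(order)
    return [math.dist(cities[order[k]], cities[order[(k + 1) % n]])
            for k in range(n)]


def length(order):
    """Plain tour length, i.e. L_TSP for this tour."""
    return sum(edge_lengths(order))


def squared_length(order):
    """Sum of squared edge lengths, i.e. L^2_TSP for this tour."""
    return sum(e * e for e in edge_lengths(order))


# All tours with city 0 fixed as the starting point.
tours = [(0,) + p for p in itertools.permutations(range(1, len(cities)))]
best_by_length = min(tours, key=length)
best_by_squares = min(tours, key=squared_length)
```

On this instance the shortest tour has length about 6.24 and squared length 12.5, while the tour minimizing the squared length has length about 6.36 and squared length 11, so the two criteria select different tours.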

We thus consider a function $h_{rs}$ depending both on the distance $d_{rs}$ and on another distance $D_{rs}$ defined in weight space:

$$D_{rs} = \sum_{j=r+1}^{s} |\vec w_j - \vec w_{j-1}|$$

Figure 3: Distances between neurons s = 4 and r = 1: $D_{1,4} = D_1 + D_2 + D_3$ and $d_{1,4} = 3$.

Figure 4: Set of $h_{rs}$ given by (5) for $0 < D_{rs} < 1$ and $d_{rs} = 0, 1, \dots, 4$ (axes: $D_{rs}$, $d_{rs}$, $h_{rs}$).

If we define

$$h_{rs} = \left( 1 + \frac{D_{rs}}{\sigma} \right)^{-d_{rs}^2} \qquad (5)$$

when $\sigma \to 0$, we get for $h_{s \pm 1, s}$

$$h_{s \pm 1, s} = \left( 1 + \frac{D_{s \pm 1, s}}{\sigma} \right)^{-1} \cong \frac{\sigma}{D_{s \pm 1, s}} = \frac{\sigma}{|\vec w_s - \vec w_{s \pm 1}|} \cong \frac{\sigma}{|\vec q_{i(s)} - \vec q_{i(s \pm 1)}|}$$

and substituting this expression in (4) we obtain

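$$V(W) \cong \frac{1}{2N} \sum_s \left[ \frac{\sigma}{|\vec q_{i(s)} - \vec q_{i(s-1)}|} (\vec q_{i(s)} - \vec q_{i(s-1)})^2 + \frac{\sigma}{|\vec q_{i(s)} - \vec q_{i(s+1)}|} (\vec q_{i(s)} - \vec q_{i(s+1)})^2 \right] = \frac{\sigma}{N} \sum_s |\vec q_{i(s)} - \vec q_{i(s+1)}| = \frac{\sigma}{N} L_{TSP}$$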

With this choice of $h_{rs}$ the minimization of the energy $V(W)$ is equivalent to the minimization of the TSP path.

We remark that the introduction of the distance $D_{rs}$ between weights is a slightly unusual hypothesis for this kind of net, which usually keeps neuron and weight spaces well separated in the sense that the distance function $h_{rs}$ depends only on the distance $d_{rs}$.
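The distance function (5) can be sketched in code as follows. This is an illustration under stated assumptions: the names `ring_steps`, `weight_path_length` and `h_new` are hypothetical, and on a closed ring $D_{rs}$ is accumulated along the arc with the fewer index steps, a choice the text does not spell out.

```python
import math


def ring_steps(r, s, n):
    """d_rs: number of steps between neurons r and s on a ring of n neurons."""
    d = abs(r - s)
    return min(d, n - d)


def weight_path_length(weights, r, s):
    """D_rs: summed weight-space distances between consecutive neurons,
    walking from r to s along the shorter arc of the ring (assumption)."""
    n = len(weights)
    d = ring_steps(r, s, n)
    # Walk forward if d forward steps reach s, otherwise walk backward.
    step = 1 if (s - r) % n == d else -1
    total, j = 0.0, r
    for _ in range(d):
        k = (j + step) % n
        total += math.dist(weights[j], weights[k])
        j = k
    return total


def h_new(weights, r, s, sigma):
    """Distance function (5): (1 + D_rs / sigma) ** (-d_rs ** 2)."""
    d = ring_steps(r, s, len(weights))
    return (1.0 + weight_path_length(weights, r, s) / sigma) ** (-(d ** 2))
```

For adjacent neurons and small `sigma`, `h_new` approaches `sigma / D_rs`, which is the limit used in the derivation above; for r = s it equals 1 for any `sigma`.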

4 - Numerical results

Since the performance of this kind of TSP algorithm is good for problems with more than 500 cities and more critical in smaller problems [Budinich 1995], we began testing the performance produced by the new distance function (5) in problems with 50 cities.

We compared the quality of the TSP solutions obtained with this net to those of two other algorithms, both deriving from the idea of a topology preserving map and both actually minimizing $L^2_{TSP}$: the elastic net of Durbin and Willshaw [Durbin 1987] and this same algorithm with a standard distance choice.

As a test set, we considered the very same 5 sets of 50 randomly distributed cities used for the elastic net.

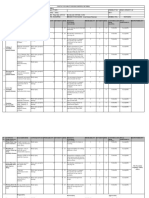

Table 1 contains a comparison of the best TSP path obtained in several runs of the different algorithms, expressed as percentage increments over the best known solution for the given problem.

| City set | Min. length | [Durbin 1987] | [Budinich 1995] | This algorithm |
|----------|-------------|---------------|-----------------|----------------|
| 1        | 5.8358      | 2.47 %        | 1.65 %          | 0.96 %         |
| 2        | 5.9945      | 0.59 %        | 1.66 %          | 0.31 %         |
| 3        | 5.5749      | 2.24 %        | 1.06 %          | 1.05 %         |
| 4        | 5.6978      | 2.85 %        | 1.37 %          | 0.70 %         |
| 5        | 6.1673      | 5.23 %        | 5.25 %          | 0.43 %         |
| Average  |             | 2.68 %        | 2.20 %          | 0.69 %         |

Table 1: Comparison of the best TSP solutions obtained by the various algorithms. Rows refer to the 5 different problems, each of 50 cities randomly distributed in the (0,1) square. Column 2 reports the length of the best known solution for the given problem. Columns 3 to 5 contain the best lengths obtained by the three algorithms under study, expressed as percentage increments over the minimal length; the number of runs of the algorithms is, respectively, unknown, 5 and 10. The last row gives the increment averaged over the 5 city sets.
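As a quick arithmetic check, the averaged increments can be recomputed from the per-set values of Table 1. The snippet below only re-derives the last row and converts the increments of this algorithm back to absolute lengths; the variable names are illustrative.

```python
# Percentage increments over the best known length, copied from Table 1.
min_length = [5.8358, 5.9945, 5.5749, 5.6978, 6.1673]
increments = {
    "Durbin 1987": [2.47, 0.59, 2.24, 2.85, 5.23],
    "Budinich 1995": [1.65, 1.66, 1.06, 1.37, 5.25],
    "This algorithm": [0.96, 0.31, 1.05, 0.70, 0.43],
}

# Average increment over the 5 city sets (last row of the table).
averages = {name: sum(vals) / len(vals) for name, vals in increments.items()}

# Absolute tour lengths implied by the increments of this algorithm.
lengths_this = [m * (1 + p / 100)
                for m, p in zip(min_length, increments["This algorithm"])]
```

Rounded to two decimals, the averages agree with the values quoted in the last row of the table.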

Another measure of the quality of the solution is the mean length obtained in the 10 runs. The percentage increment of these mean lengths, averaged over the 5 sets, was 2.49% for this algorithm, showing that even the averages found with the new distance function are better than the minima found with the elastic net.

These results indicate that, on these test sets, distance choice (5) gives better solutions in this SOFM application, thus supporting the guess that an energy $V(W)$ directly proportional to the length of the tour $L_{TSP}$ is better tuned to this problem.

One could wonder whether adding weight space information to the distance function could give interesting results in other SOFM applications as well.

Appendix

Here we describe the network setting that produces the quoted numerical results. Apart from the distance definition (5), we apply a standard Kohonen algorithm and exponentially decrease the parameters $\varepsilon$ and $\sigma$ with the learning epoch $n_e$ (a learning epoch corresponds to N weight updates with rule (1)):

$$\varepsilon = \varepsilon_0 \, a^{n_e} \qquad \sigma = \sigma_0 \, b^{n_e}$$

and learning stops when $\varepsilon$ reaches $5 \cdot 10^{-3}$. Numerical simulations clearly indicate that the best results are obtained when the final value of $\sigma$ is very small ($5 \cdot 10^{-3}$) and when $\varepsilon$ and $\sigma$ decrease together, reaching their final values at the same time. Consequently, given values for $a$ and $\sigma_0$, one easily finds $b$.

In other words there are just three free parameters to play with to optimize results, namely $\varepsilon_0$, $\sigma_0$ and $a$. After some investigation we obtained the following values that produce the quoted results: $\varepsilon_0 = 0.8$, $\sigma_0 = 14$ and $a = 0.9996$.
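The way $b$ follows from the other parameters can be sketched numerically. The snippet assumes that both $\varepsilon$ and $\sigma$ end at exactly $5 \cdot 10^{-3}$ in the same epoch and that the number of epochs may be treated as a real number; these are simplifying assumptions of this illustration, and the variable names are hypothetical.

```python
import math

# Parameter values quoted in the appendix.
eps0, sigma0, a = 0.8, 14.0, 0.9996
# Assumed common final value for both eps and sigma.
eps_final = sigma_final = 5e-3

# Epochs needed for eps = eps0 * a**n to reach eps_final.
n_epochs = math.log(eps_final / eps0) / math.log(a)

# Decay factor b such that sigma0 * b**n_epochs equals sigma_final.
b = (sigma_final / sigma0) ** (1.0 / n_epochs)

print(f"epochs: {n_epochs:.0f}, b: {b:.6f}")
```

Under these assumptions learning lasts roughly 12,700 epochs and $b$ comes out slightly smaller than $a$, since $\sigma$ has to fall by a larger factor than $\varepsilon$ over the same number of epochs.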

References:

[Angniol 1988] B. Angniol, G. de La Croix Vaubois and J.-Y. Le Texier, Self Organising Feature Maps and the Travelling Salesman Problem, Neural Networks 1 1988 pp. 289-293;

[Budinich 1995] M. Budinich, A Self-Organising Neural Network for the Travelling Salesman Problem that is Competitive with Simulated Annealing, to appear in: Neural Computation;

[Durbin 1987] R. Durbin and D. Willshaw, An Analogue Approach to the Travelling Salesman Problem using an Elastic Net Method, Nature 326 1987 pp. 689-691;

[Favata 1991] F. Favata and R. Walker, A Study of the Application of Kohonen-type Neural Networks to the Travelling Salesman Problem, Biological Cybernetics 64 1991 pp. 463-468;

[Johnson 1990] D. S. Johnson, Local Optimization and the Traveling Salesman Problem, in: Proceedings of the 17th Colloquium on Automata, Languages and Programming, 1990 Springer-Verlag New York, pp. 446-461;

[Kohonen 1984] T. Kohonen, Self-Organisation and Associative Memory, 1984 (3rd Ed. 1989) Springer-Verlag Berlin Heidelberg;

[Lawler 1985] E. L. Lawler, J. K. Lenstra, A. G. Rinnooy Kan and D. B. Shmoys (editors), The Traveling Salesman Problem - A Guided Tour of Combinatorial Optimization, John Wiley & Sons, New York 1990, IV Reprint, pp. x 474;

[Ritter 1992] H. Ritter, T. Martinetz and K. Schulten, Neural Computation and Self-Organizing Maps, Addison-Wesley Publishing Company, Reading Massachusetts 1992, pp. 306.
