Professional Documents

Culture Documents

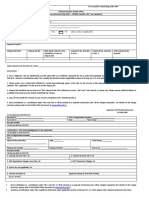

Tmcode Text Mining

Uploaded by ratan203


Copyright: © All Rights Reserved


library(tm)

#Create Corpus - CHANGE PATH AS NEEDED

docs <- Corpus(DirSource("C:/Users/<YourPath>/Documents/TextMining"))

#Check details

inspect(docs)

#inspect a particular document

writeLines(as.character(docs[[30]]))

#Start preprocessing

toSpace <- content_transformer(function(x, pattern) { return (gsub(pattern, " ", x))})

docs <- tm_map(docs, toSpace, "-")

docs <- tm_map(docs, toSpace, ":")

docs <- tm_map(docs, toSpace, "'")

#Assumption: the repeated apostrophe pattern originally targeted curly quotes
docs <- tm_map(docs, toSpace, "[\u2018\u2019]")

docs <- tm_map(docs, toSpace, " -")
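#A minimal sketch of what the toSpace calls above do, run on a hypothetical
#two-document in-memory corpus. All demo_* names are illustrative only and
#are not part of the original script.
library(tm)
demo_toSpace <- content_transformer(function(x, pattern) gsub(pattern, " ", x))
demo_docs <- VCorpus(VectorSource(c("risk-based planning", "note: follow-up")))
demo_docs <- tm_map(demo_docs, demo_toSpace, "-")
demo_docs <- tm_map(demo_docs, demo_toSpace, ":")
#Collect the transformed text of each document into a character vector
demo_text <- vapply(seq_along(demo_docs),
                    function(i) as.character(demo_docs[[i]]),
                    character(1))
#Each matched character becomes one space, so runs of spaces can appear;
#stripWhitespace later collapses them
print(demo_text)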

#Good practice to check after each step.

writeLines(as.character(docs[[30]]))

#Remove punctuation - marks are deleted outright, not replaced with " " (hence the toSpace calls above)

docs <- tm_map(docs, removePunctuation)

#Transform to lower case

docs <- tm_map(docs,content_transformer(tolower))

#Strip digits

docs <- tm_map(docs, removeNumbers)

#Remove stopwords from standard stopword list (How to check this? How to add your own?)

docs <- tm_map(docs, removeWords, stopwords("english"))
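#One possible answer to the two questions above, as a sketch. The extra words
#and all demo_* names are hypothetical choices, not part of the original script.
library(tm)
#Check the standard list: it is an ordinary character vector
demo_stop <- stopwords("english")
length(demo_stop)
head(demo_stop, 10)
"the" %in% demo_stop
#Add your own: append words to the vector and pass it to removeWords
demo_custom <- c(demo_stop, "can", "say", "also")
demo_sw <- VCorpus(VectorSource("the team can also say the plan works"))
demo_sw <- tm_map(demo_sw, removeWords, demo_custom)
demo_sw <- tm_map(demo_sw, stripWhitespace)
demo_sw_text <- trimws(as.character(demo_sw[[1]]))
print(demo_sw_text)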

#Strip whitespace (cosmetic?)

docs <- tm_map(docs, stripWhitespace)

#inspect output

writeLines(as.character(docs[[30]]))

#Need SnowballC library for stemming

library(SnowballC)

#Stem document

docs <- tm_map(docs,stemDocument)
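#A small sketch for previewing what stemming does to individual words before
#applying it to the whole corpus. It calls SnowballC::wordStem directly; the
#word list and demo_* names are hypothetical.
library(SnowballC)
demo_words <- c("organization", "organizing", "enterprise", "projects", "systems")
demo_stems <- wordStem(demo_words, language = "english")
#Show each word next to its stem; stems are often not dictionary words,
#which is why the clean up step below works on stems such as "enterpris"
print(setNames(demo_stems, demo_words))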

#some clean up

docs <- tm_map(docs, content_transformer(gsub),

pattern = "organiz", replacement = "organ")

docs <- tm_map(docs, content_transformer(gsub),

pattern = "organis", replacement = "organ")

docs <- tm_map(docs, content_transformer(gsub),

pattern = "andgovern", replacement = "govern")

docs <- tm_map(docs, content_transformer(gsub),

pattern = "inenterpris", replacement = "enterpris")

docs <- tm_map(docs, content_transformer(gsub),

pattern = "team-", replacement = "team")

#inspect

writeLines(as.character(docs[[30]]))

#Create document-term matrix

dtm <- DocumentTermMatrix(docs)

#inspect segment of document term matrix

inspect(dtm[1:2,1000:1005])

#collapse matrix by summing over columns - this gets total counts (over all docs) for each term

freq <- colSums(as.matrix(dtm))
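#as.matrix() builds a dense matrix, which can use a lot of memory on a large
#corpus. A possible alternative, sketched here on a hypothetical toy corpus,
#is to sum the sparse matrix directly with slam::col_sums (assumption: the
#slam package, on which tm depends, is installed).
library(tm)
demo_sum_docs <- VCorpus(VectorSource(c("alpha beta beta", "beta gamma")))
demo_sum_dtm <- DocumentTermMatrix(demo_sum_docs)
demo_dense <- colSums(as.matrix(demo_sum_dtm))
demo_sparse <- slam::col_sums(demo_sum_dtm)
#Both approaches give the same per-term totals
print(demo_dense)
print(demo_sparse)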

#length should be total number of terms

length(freq)

#create sort order (desc)

ord <- order(freq,decreasing=TRUE)

#inspect most frequently occurring terms

freq[head(ord)]

#inspect least frequently occurring terms

freq[tail(ord)]

#remove very frequent and very rare words: keep terms of 4 to 20 characters that occur in 3 to 27 documents

dtmr <- DocumentTermMatrix(docs, control=list(wordLengths=c(4, 20),

bounds = list(global = c(3,27))))
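#A sketch of how the global bounds behave, on a hypothetical three-document
#corpus (demo_* names are illustrative). A term is kept only if the number of
#documents containing it lies within the bounds.
library(tm)
demo_b_docs <- VCorpus(VectorSource(c("risk plan", "risk team", "risk cost")))
demo_b_all <- DocumentTermMatrix(demo_b_docs)
#Keep only terms that appear in at least 2 documents
demo_b_trim <- DocumentTermMatrix(demo_b_docs,
                                  control = list(bounds = list(global = c(2, Inf))))
print(Terms(demo_b_all))
print(Terms(demo_b_trim))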

freqr <- colSums(as.matrix(dtmr))

#length should be the number of terms that remain after filtering

length(freqr)

#create sort order (desc)

ordr <- order(freqr,decreasing=TRUE)

#inspect most frequently occurring terms

freqr[head(ordr)]

#inspect least frequently occurring terms

freqr[tail(ordr)]

#list most frequent terms. Lower bound specified as second argument

findFreqTerms(dtmr,lowfreq=80)

#correlations

findAssocs(dtmr,"project",0.6)

#terms in dtmr are stemmed, so query the stem "enterpris" rather than "enterprise"

findAssocs(dtmr,"enterpris",0.6)

findAssocs(dtmr,"system",0.6)
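#A sketch of what findAssocs reports, on a hypothetical four-document corpus:
#the correlation between the per-document counts of the query term and of
#every other term, keeping those at or above the limit. demo_* names are
#illustrative only.
library(tm)
demo_corr_docs <- VCorpus(VectorSource(c("project risk", "project risk plan",
                                         "plan cost", "cost team")))
demo_corr_dtm <- DocumentTermMatrix(demo_corr_docs)
demo_assoc <- findAssocs(demo_corr_dtm, "project", 0.6)
#The same figure computed by hand from the dense count matrix
demo_cor <- cor(as.matrix(demo_corr_dtm))["project", "risk"]
print(demo_assoc)
print(demo_cor)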

#histogram

wf <- data.frame(term=names(freqr),occurrences=freqr)

library(ggplot2)

#filter on the data frame's own column rather than the external freqr vector
p <- ggplot(subset(wf, occurrences>100), aes(term, occurrences))

p <- p + geom_bar(stat="identity")

p <- p + theme(axis.text.x=element_text(angle=45, hjust=1))

p
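#The bars above are drawn in alphabetical order of term. A possible variation,
#sketched on hypothetical counts, orders them by frequency with reorder().
#demo_* names and the numbers are made up for illustration.
library(ggplot2)
demo_wf <- data.frame(term = c("project", "risk", "team"),
                      occurrences = c(120, 300, 150))
#reorder() sorts the factor levels by descending count
demo_order <- levels(reorder(demo_wf$term, -demo_wf$occurrences))
demo_p <- ggplot(demo_wf, aes(reorder(term, -occurrences), occurrences)) +
  geom_col() +
  labs(x = "term") +
  theme(axis.text.x = element_text(angle = 45, hjust = 1))
print(demo_order)
demo_p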

#wordcloud

library(wordcloud)

#setting the same seed each time ensures consistent look across clouds

set.seed(42)

#limit words by specifying min frequency

wordcloud(names(freqr),freqr, min.freq=70)

#...add color

wordcloud(names(freqr),freqr,min.freq=70,colors=brewer.pal(6,"Dark2"))
