Compusoft, 3 (3), 614-618 PDF

COMPUSOFT, An international journal of advanced computer technology, 3 (3), March-2014 (Volume-III, Issue-III)

ISSN:2320-0790

Simulation of Conjugate Structure Algebraic Code Excited Linear Prediction Speech Coder

Ritisha Virulkar (1), A. P. Khandait (1), Gautam Bacher (2), Abhijit B. Maidamwar (3)

(1) PCOE, Nagpur
(2) BITS, Goa
(3) RGCER, Nagpur

Abstract: CS-ACELP is a speech coder based on the linear prediction coding technique. It reduces the bit rate to 8 kbps and at the same time reduces the computational complexity of the search described in ITU Recommendation G.729. The codec is used for compression of speech signals. The idea behind the algorithm is to predict the upcoming signal samples by means of linear prediction. For this it uses a fixed codebook and an adaptive codebook. The quality of speech delivered by this coder is equivalent to that of 32 kbps ADPCM. The reduction in bit rate is achieved by sending fewer bits when no voice is detected and by carrying out a conditional search in the fixed codebook.

Keywords: 8 kbps algorithm, codebook search, CS-ACELP

I INTRODUCTION

The ITU-T standardized an 8 kbit/s speech codec that operates on a discrete-time speech signal. G.729 provides coding of speech signals used in multimedia applications at 8 kbit/s using Conjugate-Structure Algebraic-Code-Excited Linear-Prediction (CS-ACELP) [1][2]. The quality of speech produced by the coder is equivalent to that of 32 kbit/s ADPCM for most operating conditions. These conditions include clean and noisy speech, multiple levels of encoding, variations in level and non-speech inputs. The typical input rates are mu-law or A-law 64 kbit/s PCM or 128 kbit/s linear PCM, the latter giving a compression ratio of 16:1. The coder is designed to be robust against channel errors, meaning that it should withstand such errors without introducing any major effects. Also, if radio channels suffer from long fades and complete frames are lost, the decoder should be able to recover the missing frames with minimum loss in speech quality.

The coder breaks up the speech into small units called frames. For each speech frame a set of parameters is generated and sent to the decoder. This means that the frame time represents a lower bound on the system delay: the encoder must wait for at least a frame's worth of speech before it can even begin the encoding process. The input signal is then passed through a preprocessing block which consists of a high-pass filter. A 10th order linear prediction analysis gives a set of coefficients called the LP filter coefficients. These are further converted to Line Spectrum Pair (LSP) coefficients and are quantized using Vector Quantization (VQ).

The excitation signal is chosen, and an open-loop pitch delay is estimated, using a speech signal that is perceptually weighted and low-pass filtered. This speech codec's relatively low complexity makes it an attractive choice for Internet telephony.

The algorithm can be divided into two sections. Section I will describe the CS-ACELP encoder and Section II will describe the CS-ACELP decoder. The encoder can be subdivided into the following parts:

a. Preprocessing
b. Linear Prediction Analysis
c. Open loop pitch search
d. Closed loop pitch search
e. Fixed codebook search
f. Memory update

A. Preprocessing

A 16-bit pulse code modulated (PCM) signal is assumed to be the input to the encoder. Before encoding, the signal passes through two preprocessing blocks:

1) Signal scaling
2) High-pass filtering

The scaling consists of dividing the input signal by a factor of 2 so that the possibility of overflow in the fixed-point implementation is reduced. The high-pass filter serves as a precaution against undesired low-frequency components. A second order pole/zero filter with a cutoff frequency of 140 Hz is used. Scaling and high-pass filtering are combined by dividing the numerator coefficients of this filter by 2. The resulting filter is given by:

$$H_{h1}(z) = \frac{0.46363718 - 0.92724705\,z^{-1} + 0.46363718\,z^{-2}}{1 - 1.9059465\,z^{-1} + 0.9114024\,z^{-2}} \tag{1}$$

The input signal filtered through $H_{h1}(z)$ is referred to as s(n), and is used in all subsequent coder operations.

B. Linear Prediction Analysis

LP analysis exploits the redundancy in the speech signal. Its primary objective is to compute the LP coefficients that minimize the prediction error. A popular method for computing the LP coefficients is the autocorrelation method, which minimizes the total prediction error. The short-term analysis and synthesis filters are based on 10th order linear prediction (LP) filters. The LP synthesis filter is defined as:

$$\frac{1}{\hat{A}(z)} = \frac{1}{1 + \sum_{i=1}^{10} \hat{a}_i z^{-i}} \tag{2}$$

where $\hat{a}_i$, i = 1,...,10, are the (quantized) linear prediction (LP) coefficients. Short-term prediction, or linear prediction analysis, is performed once per speech frame using the autocorrelation method with a 30 ms asymmetric window. Every 80 samples (10 ms), the autocorrelation coefficients of the windowed speech are computed and converted to LP coefficients using the Levinson-Durbin algorithm. The LP coefficients are then transformed to the LSP domain for quantization and interpolation. The quantized and unquantized interpolated filters are converted back to LP filter coefficients (to construct the synthesis and weighting filters for each subframe). The weighted speech signal Sw(n) is used for the open loop pitch lag estimation.

Figure 1: Block diagram of CS-ACELP Encoder

C. Open loop pitch search

The input signal is passed through a high-pass filter and scaled in the pre-processing block. This pre-processed signal acts as the input for all further analysis. LP analysis is performed once per 10 ms frame to compute the LP filter coefficients. These LP coefficients are then converted to Line Spectrum Pair (LSP) coefficients and quantized using predictive two-stage Vector Quantization (VQ) with 18 bits [3][4]. The excitation signal is chosen by an analysis-by-synthesis search procedure in which the error between the original and reconstructed speech is minimized according to a perceptually weighted distortion measure. To do this, the error signal is filtered with a perceptual weighting filter whose coefficients are derived from the unquantized LP filter. The perceptual weighting is made adaptive to improve the performance for input signals with a flat frequency response. The excitation parameters (fixed and adaptive codebook parameters) are determined per sub-frame of 5 ms (40 samples). The quantized and unquantized LP filter coefficients are used for the second sub-frame, whereas interpolated LP filter coefficients (both quantized and unquantized) are used in the first sub-frame. An open-loop pitch delay, denoted by $T_{OP}$, is estimated once per 10 ms frame using the perceptually weighted speech signal Sw(n) [1][2].
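As an illustration of the pre-processing and per-frame LP analysis steps described above, a minimal Python sketch is given here. It applies the filter of equation (1) as a difference equation, then computes autocorrelation coefficients and runs the Levinson-Durbin recursion for a 10th order predictor, using the sign convention A(z) = 1 + sum of a_i z^-i. The function names are hypothetical, and a symmetric Hamming window is assumed in place of the coder's 30 ms asymmetric window; lag windowing and the LSP conversion are omitted, so this should not be read as the G.729 reference procedure.

```python
import numpy as np

# Coefficients of the combined scaling/high-pass filter H_h1(z), equation (1).
B_HP = (0.46363718, -0.92724705, 0.46363718)
A_HP = (1.0, -1.9059465, 0.9114024)


def preprocess(x):
    """Filter x through H_h1(z) using a direct-form difference equation."""
    x = np.asarray(x, dtype=float)
    s = np.zeros_like(x)
    for n in range(len(x)):
        acc = B_HP[0] * x[n]
        if n >= 1:
            acc += B_HP[1] * x[n - 1] - A_HP[1] * s[n - 1]
        if n >= 2:
            acc += B_HP[2] * x[n - 2] - A_HP[2] * s[n - 2]
        s[n] = acc
    return s


def autocorrelation(frame, order=10):
    """Autocorrelation r[0..order] of the windowed frame.

    Assumption: a Hamming window stands in for the asymmetric window.
    """
    frame = np.asarray(frame, dtype=float)
    xw = frame * np.hamming(len(frame))
    return np.array([np.dot(xw[:len(xw) - k], xw[k:]) for k in range(order + 1)])


def levinson_durbin(r, order=10):
    """Solve for a[0..order] (a[0] = 1) with A(z) = 1 + sum_i a[i] z^-i.

    Returns the coefficients and the final prediction error energy.
    """
    a = np.zeros(order + 1)
    a[0] = 1.0
    err = float(r[0])
    for i in range(1, order + 1):
        # acc = r[i] + sum_{j=1}^{i-1} a[j] * r[i-j]
        acc = r[i] + np.dot(a[1:i], r[i - 1:0:-1])
        k = -acc / err                      # reflection coefficient
        prev = a.copy()
        for j in range(1, i):
            a[j] = prev[j] + k * prev[i - j]
        a[i] = k
        err *= 1.0 - k * k
    return a, err


def lp_analysis(frame, order=10):
    """Autocorrelation method followed by Levinson-Durbin."""
    return levinson_durbin(autocorrelation(frame, order), order)
```

For the autocorrelation sequence of a first-order process, r(k) = 0.9^k, the recursion returns a_1 = -0.9 with all higher coefficients equal to zero, which is a convenient sanity check of the sign convention.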

The three maxima of the correlation are found in the following three ranges: (20:39), (40:79) and (80:143). The open loop pitch is obtained by selecting among the maxima of the three ranges using the normalized autocorrelation function.

For one frame, estimating the open loop pitch requires 10160 multiplications, 10033 additions, 123 comparisons, 3 square-root and 3 division operations.
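The search over the three delay ranges can be sketched as follows. This is a simplified illustration, not the reference implementation: the function name, the buffer layout (143 past samples followed by the 80-sample frame) and the small constant guarding the division are assumptions, and the factor 0.85 that favours shorter delays is taken from our reading of G.729 [2] rather than from the description above.

```python
import numpy as np


def open_loop_pitch(sw, frame_len=80, bias=0.85):
    """Estimate the open loop pitch delay from weighted speech.

    sw: 1-D array whose last `frame_len` samples are the current frame,
        preceded by at least 143 samples of history (assumed layout).
    """
    sw = np.asarray(sw, dtype=float)
    start = len(sw) - frame_len
    if start < 143:
        raise ValueError("need at least 143 samples of history")
    cur = sw[start:]

    def delayed(k):
        return sw[start - k:start - k + frame_len]

    def corr(k):
        return float(np.dot(cur, delayed(k)))

    def normalized_peak(lo, hi):
        # Lag with maximum correlation in [lo, hi], then normalize it
        # by the square root of the delayed signal energy.
        best = max(range(lo, hi + 1), key=corr)
        energy = float(np.dot(delayed(best), delayed(best)))
        return best, corr(best) / np.sqrt(energy + 1e-12)

    # Start from the longest-delay range and move to shorter delays,
    # accepting a shorter delay when its peak is nearly as strong.
    t_op, r_op = normalized_peak(80, 143)
    for lo, hi in ((40, 79), (20, 39)):
        t, r = normalized_peak(lo, hi)
        if r >= bias * r_op:
            t_op, r_op = t, r
    return t_op
```

The operation count quoted above is consistent with this structure: 124 candidate lags and 3 energy terms, each an 80-sample inner product, give (124 + 3) x 80 = 10160 multiplications.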

The computation of the pitch depends on whether the signal is voiced or unvoiced. The pitch contour lies in the voiced signal only. The weighted delta-LSP function (Wd) is used to differentiate between voiced and unvoiced signals. The function Wd is given by:

$$W_d = \sum_{k=1}^{10} w_k \left( LSP_k^{i} - LSP_k^{i-1} \right)^2$$

If the value of Wd is greater than some pre-defined threshold, the open loop pitch lag is estimated; otherwise the pitch value is taken to be the same as that of the previous frame. Here $LSP_k^{i}$ is the LSP coefficient of the kth order at the ith frame and $w_k$ is the weighting coefficient [5]. Hence the calculations required for this step are automatically reduced.
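The gating logic described above can be sketched as follows. The exact form of Wd should be taken from [5]; here a weighted sum of squared LSP differences between consecutive frames is assumed, and the function names, the weights, the threshold and the `estimate_pitch` callback are all hypothetical placeholders.

```python
import numpy as np


def weighted_delta_lsp(lsp_cur, lsp_prev, weights):
    """Assumed form of Wd: sum_k w_k * (LSP_k(i) - LSP_k(i-1))^2."""
    diff = np.asarray(lsp_cur, dtype=float) - np.asarray(lsp_prev, dtype=float)
    return float(np.sum(np.asarray(weights, dtype=float) * diff * diff))


def gated_open_loop_pitch(lsp_cur, lsp_prev, weights, threshold,
                          prev_pitch, estimate_pitch):
    """Run the open loop search only when Wd exceeds the threshold.

    estimate_pitch: zero-argument callable performing the full search
    (hypothetical hook); it is not called when Wd <= threshold.
    """
    if weighted_delta_lsp(lsp_cur, lsp_prev, weights) > threshold:
        return estimate_pitch()
    return prev_pitch  # reuse the previous frame's pitch value
```

The saving comes from the second branch: whenever Wd stays at or below the threshold, the correlation search is skipped entirely for that frame.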

D. Closed loop pitch search

For good performance of the CELP algorithm at an intermediate bit rate, either a closed or an open pitch loop is essential. The closed pitch loop can be viewed as an adaptive codebook of overlapping candidate vectors. Either the endpoint correction method or the energy recursion method can be applied to the closed pitch loop, as both procedures take advantage of the overlapping nature of the codebook and are not affected by its dynamic character. Closed-loop pitch analysis is then done (to find the adaptive-codebook delay and gain), using the target signal x(n) and impulse response h(n), by searching around the estimated value of the open-loop pitch delay. A fractional pitch delay with a resolution of 1/3 is used. The pitch delay is encoded with 8 bits in the first subframe and is differentially encoded with 5 bits in the second subframe.
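To see why 8 bits suffice for the first subframe, the following sketch maps a delay with 1/3 resolution to an index and back. The delay ranges (fractional delays from 19 1/3 to 84 2/3, integer delays from 85 to 143) and the index formulas are assumptions taken from our reading of G.729 [2], not from the description above, and the function names are hypothetical.

```python
def encode_first_subframe_delay(t_int, frac):
    """Map delay t_int + frac/3 (frac in {-1, 0, 1}) to an 8-bit index."""
    if 86 <= t_int <= 143 and frac == 0:
        return (t_int - 85) + 197            # integer-only range
    index = 3 * (t_int - 19) + frac - 1      # 1/3-resolution range
    if 19 <= t_int <= 85 and frac in (-1, 0, 1) and 0 <= index <= 197:
        return index
    raise ValueError("delay outside the assumed codable range")


def decode_first_subframe_delay(index):
    """Inverse mapping: return (t_int, frac) for an index in 0..255."""
    if not 0 <= index <= 255:
        raise ValueError("index must fit in 8 bits")
    if index >= 197:
        return index - 197 + 85, 0
    t_int = (index + 2) // 3 + 19
    frac = index - 3 * (t_int - 19) + 1
    return t_int, frac
```

Under these assumptions there are 197 fractional-resolution delays and 59 integer delays, 256 values in total, which is exactly what 8 bits can address.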

Pulse

Sign

Positions

i0

s0 : 1

m0 : 0, 5, 10, 15, 20, 25, 30, 35

i1

s1 : 1

m1 : 1, 6, 11, 16, 21, 26, 31, 36

i2

s2 : 1

m2 : 2, 7, 12, 17, 22, 27, 32, 37

i3

s3 : 1

m3 : 3, 8, 13, 18, 23, 28, 33, 38

4, 9, 14, 19, 24, 29, 34,

39

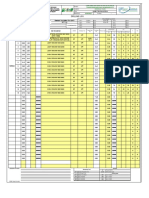

Table 1:- Fixed codebook search structure

F. Memory Update

The states of the synthesis and weighting filters need to be updated in order to compute the target signal in the next subframe. After the two gains are quantized, the excitation signal u(n) in the present subframe is obtained using the equation:

$$u(n) = \hat{g}_p\, v(n) + \hat{g}_c\, c(n), \qquad n = 0,\dots,39$$

where $\hat{g}_p$ and $\hat{g}_c$ are the quantized adaptive-codebook and fixed-codebook gains, respectively, v(n) is the adaptive-codebook vector (interpolated past excitation), and c(n) is the fixed-codebook vector including harmonic enhancement. The filter states can be updated by filtering the signal r(n) - u(n) (the difference between residual and excitation) through the filters $1/\hat{A}(z)$ and $A(z/\gamma_1)/A(z/\gamma_2)$ for the 40-sample subframe and saving the states of the filters. This would require three filter operations. A simpler approach, which requires only one filter operation, is as follows. The locally reconstructed speech $\hat{s}(n)$ is computed by filtering the excitation signal through $1/\hat{A}(z)$. The output of the filter due to the input r(n) - u(n) is equivalent to $e(n) = s(n) - \hat{s}(n)$. So the states of the synthesis filter $1/\hat{A}(z)$ are given by e(n), n = 30,...,39. Updating the states of the filter $A(z/\gamma_1)/A(z/\gamma_2)$ can be done by filtering the error signal e(n) through this filter to find the perceptually weighted error ew(n). However, the signal ew(n) can also be found by:

$$e_w(n) = x(n) - \hat{g}_p\, y(n) - \hat{g}_c\, z(n)$$

Since the signals x(n), y(n) and z(n) are already available, the weighting filter states are updated by computing ew(n) as in the equation above for n = 30,...,39. This saves two filter operations.
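The two update equations above amount to a few vector operations per subframe. A minimal sketch follows; the function names are hypothetical, and y(n) and z(n) are assumed to be the adaptive-codebook and fixed-codebook vectors after filtering through the weighted synthesis filter, as in G.729 [2].

```python
import numpy as np

SUBFRAME = 40   # samples per subframe
ORDER = 10      # LP order, i.e. number of filter state samples to keep


def excitation(gp_hat, v, gc_hat, c):
    """u(n) = gp_hat * v(n) + gc_hat * c(n), n = 0..39."""
    return gp_hat * np.asarray(v, dtype=float) + gc_hat * np.asarray(c, dtype=float)


def weighted_error(x, gp_hat, y, gc_hat, z):
    """ew(n) = x(n) - gp_hat * y(n) - gc_hat * z(n), n = 0..39."""
    return (np.asarray(x, dtype=float)
            - gp_hat * np.asarray(y, dtype=float)
            - gc_hat * np.asarray(z, dtype=float))


def weighting_filter_states(x, gp_hat, y, gc_hat, z):
    """Last ORDER samples of ew(n), i.e. n = 30..39, kept as filter memory."""
    ew = weighted_error(x, gp_hat, y, gc_hat, z)
    return ew[SUBFRAME - ORDER:SUBFRAME]
```

Only the last ten samples are kept because a 10th order filter needs exactly ten past values as its memory at the start of the next subframe.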

E. Fixed codebook search

The fixed codebook usually occupies 17 bits. A case where it takes 11 bits can also be considered, as mentioned in [4]. In that case, the positions of the first two pulses are each encoded with three bits, whereas the position of the third pulse is encoded with four bits. The global sign for the three pulses is encoded with one bit. The first two pulses in the sequence have fixed amplitudes of +1, and the last pulse has a fixed amplitude of -1.
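For the 17-bit case, the track structure of Table 1 gives 3 + 3 + 3 + 4 = 13 position bits plus 4 sign bits. The sketch below packs four pulse positions and signs into a 13-bit position index and a 4-bit sign index. The packing formula and the function names are assumptions based on our reading of G.729 [2]; they are given for illustration only.

```python
import numpy as np

# Allowed positions per pulse, following Table 1.
TRACKS = (
    tuple(range(0, 40, 5)),
    tuple(range(1, 40, 5)),
    tuple(range(2, 40, 5)),
    tuple(sorted(list(range(3, 40, 5)) + list(range(4, 40, 5)))),
)


def encode_fixed_codebook(positions, signs):
    """Return (position index C, sign index S) for four pulses."""
    for track, m in zip(TRACKS, positions):
        if m not in track:
            raise ValueError(f"position {m} not allowed on its track")
    m0, m1, m2, m3 = positions
    jx = 1 if m3 % 5 == 4 else 0           # which half of the last track
    c = (m0 // 5) + 8 * (m1 // 5) + 64 * (m2 // 5) + 512 * (2 * (m3 // 5) + jx)
    s = sum((1 if sg > 0 else 0) << i for i, sg in enumerate(signs))
    return c, s


def decode_fixed_codebook(c, s):
    """Inverse of encode_fixed_codebook."""
    m0 = 5 * (c & 7)
    m1 = 5 * ((c >> 3) & 7) + 1
    m2 = 5 * ((c >> 6) & 7) + 2
    last = c >> 9                           # 4 bits: 3 for position, 1 for jx
    m3 = 5 * (last >> 1) + 3 + (last & 1)
    signs = tuple(1 if (s >> i) & 1 else -1 for i in range(4))
    return (m0, m1, m2, m3), signs


def code_vector(positions, signs, length=40):
    """Build the sparse code vector c(n) with four signed unit pulses."""
    vec = np.zeros(length)
    for m, sg in zip(positions, signs):
        vec[m] += sg
    return vec
```

The largest position index, obtained for positions (35, 36, 37, 39), is 8191 = 2^13 - 1, so the position index fits in exactly 13 bits.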

II BIT ALLOCATION OF THE 8 KBIT/S CS-ACELP ALGORITHM

The CS-ACELP coder is based on the code-excited linear prediction (CELP) coding model. The coder operates on 10 ms speech frames, which correspond to 80 samples at a sampling rate of 8000 samples per second. For each 10 ms frame, the speech signal is analyzed to extract the parameters of the CELP model (linear prediction filter coefficients, and the indices and gains of the adaptive and fixed codebooks). These parameters are then encoded and transmitted. The bit allocation of the coder parameters is shown in Table 2. At the decoder, these parameters are used to retrieve the excitation and synthesis filter parameters. The speech signal is reconstructed by filtering this excitation through the short-term synthesis filter, as shown in Figure 1. The short-term synthesis filter is based on a 10th order linear prediction (LP) filter. The long-term, or pitch, synthesis filter is implemented using the adaptive-codebook approach. After the reconstructed speech is computed, it is passed through a postfilter to further enhance its quality.
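The frame arithmetic behind the 8 kbit/s figure can be checked in a few lines. The per-parameter bit counts below follow the G.729 allocation summarized in Table 2; the dictionary keys and variable names are only illustrative.

```python
# Bits per 10 ms frame for each parameter (G.729 allocation, see Table 2).
BITS_PER_FRAME = {
    "line spectrum pairs (L0-L3)": 18,
    "adaptive-codebook delay (P1, P2)": 8 + 5,
    "pitch-delay parity (P0)": 1,
    "fixed-codebook index (C1, C2)": 13 + 13,
    "fixed-codebook sign (S1, S2)": 4 + 4,
    "codebook gains, stage 1 (GA1, GA2)": 3 + 3,
    "codebook gains, stage 2 (GB1, GB2)": 4 + 4,
}

SAMPLE_RATE_HZ = 8000
FRAME_MS = 10

samples_per_frame = SAMPLE_RATE_HZ * FRAME_MS // 1000      # 80 samples
bits_per_frame = sum(BITS_PER_FRAME.values())               # 80 bits
bit_rate = bits_per_frame * 1000 // FRAME_MS                # 8000 bit/s
linear_pcm_rate = SAMPLE_RATE_HZ * 16                       # 128000 bit/s
compression_ratio = linear_pcm_rate / bit_rate              # 16.0

print(samples_per_frame, bits_per_frame, bit_rate, compression_ratio)
```

Eighty bits every 10 ms give 8000 bit/s, and 16-bit linear PCM at 8000 samples per second is 128 kbit/s, which yields the 16:1 compression ratio mentioned in the introduction.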

Parameter                   Codeword         Subframe 1   Subframe 2   Total per frame
Line spectrum pairs         L0, L1, L2, L3                             18
Adaptive-codebook delay     P1, P2           8            5            13
Pitch-delay parity          P0               1                         1
Fixed-codebook index        C1, C2           13           13           26
Fixed-codebook sign         S1, S2           4            4            8
Codebook gains (stage 1)    GA1, GA2         3            3            6
Codebook gains (stage 2)    GB1, GB2         4            4            8
Total                                                                  80

Table 2: Bit allocation of CS-ACELP algorithm for 8 kbit/s

III CONCLUSION AND SIMULATION RESULT

This coder is designed to operate with a digital signal obtained by first performing telephone bandwidth filtering of the analogue input signal, then sampling it at 8000 Hz, followed by conversion to 16-bit linear PCM for input to the encoder. The output of the decoder is converted back to an analogue signal by similar means. Other input/output characteristics, such as those specified for 64 kbit/s PCM data, need to be converted to 16-bit linear PCM before encoding, or from 16-bit linear PCM to the appropriate format after decoding.

For simulation we used MATLAB software. The graph shows the original speech, and the same type of graph is expected at the decoder output.

Graph 1: Original Speech

IV REFERENCES

[1] Salami et al., Design and description of CS-ACELP: A toll quality 8 kb/s speech coder, IEEE Trans. Speech Audio Process., 1996.

[2] ITU-T G.729: Coding of speech at 8 kb/s using CS-ACELP, 1996.

[3] Kataoka et al., An 8 kb/s speech coder based on conjugate structured CELP, IEEE Int. Conf. Acoustics, Speech, Signal Processing, 1993.

[4] Kataoka et al., LSP and gain quantization for proposed ITU-T 8 kb/s speech coding standard, IEEE Workshop on Speech Coding, 1995.

[5] Shaw Hwa Hwang, Computational improvement for G.729 standard, 2003.

[6] A. B. Roach, Session Initiation Protocol (SIP)-specific event notification, RFC 3265, June 2002.

[7] A. Johnston, S. Donovan, R. Sparks, C. Cunningham, and K. Summers, Session Initiation Protocol (SIP) Public Switched Telephone Network (PSTN) call flows, RFC 3666, December 2003.

[8] R. Sparks, The Session Initiation Protocol (SIP) refer method, RFC 3515, April 2003.

[9] ITU-T Recommendation P.862, Perceptual evaluation of speech quality (PESQ): An objective method for end-to-end speech quality assessment of narrow-band telephone networks and speech codecs, Feb. 2001.

[10] ITU-T Recommendation P.862 Amendment 1, Source code for reference implementation and conformance tests, March 2003.

[11] A. E. Conway, Output-based method of applying PESQ to measure the perceptual quality of framed speech signals, in IEEE Wireless Communications and Networking Conference, Vol. 4, pp. 2521-2526, March 2004.

[12] Prof M Noor, Israr K., Real-time implementation and optimization of ITU-T's G.729 speech codec running at 8 kbits/sec using CS-ACELP on TM-1000 VLIW DSP CPU, IEEE Communications Magazine, 1997, 35 (9): 82-91.

[13] Duttweiler D. L., Proportionate normalized least mean squares adaptation in echo cancellers, IEEE Transactions on Speech and Audio Processing, 2000, 8 (5): 508-518.

[14] Texas Instruments Incorporated, Codec Engine Application Developer User's Guide, www.ti.com, 2007.
