FPGA Implementation of FIR Filters Using Pipelined Bit-Serial CSD Multipliers

Uploaded by SARATH MOHANDAS


SEMINAR REPORT

FPGA IMPLEMENTATION OF FIR FILTERS

USING PIPELINED BIT SERIAL CSD MULTIPLIERS

CHAPTER 1

INTRODUCTION

Digital signal processing (DSP) has many advantages over analog signal processing. Digital signals are more robust than analog signals with respect to temperature and process variations. The accuracy of a digital representation can be controlled by changing the word length of the signal. DSP techniques can also cancel noise and interference while amplifying the signal, whereas analog signal processing amplifies both signal and noise. Furthermore, DSP systems offer high precision, high signal-to-noise ratio (SNR), repeatability and flexibility. Two features distinguish DSP from general-purpose computation: the real-time throughput requirement and the data-driven property. The hardware must meet the tight constraint of real-time processing, in which new input samples are processed as they arrive periodically from the signal source. The data-driven property means that any subtask or computation in a DSP system can be performed as soon as all of its inputs are available.

The goal of digital design is to maximize the performance of the system at low cost. Performance is measured by the amount of hardware circuitry and resources required, the speed of execution (which depends on both throughput and clock rate), the power dissipation, and so on.

The FIR filter is one of the fundamental processing elements in any DSP system. FIR filters are used in DSP applications ranging from video and image processing to wireless communications. In some applications the FIR filter must operate at high frequencies, in others at low frequencies. Parallel (or block) processing can be applied to digital filters either to increase the effective throughput of the original filter or to reduce its power consumption.

For many years, numerous efforts have been made to reduce the implementation complexity of signal processors, which is measured by the Area-Time (AT) product. The two main aspects of this complexity are computation and communication. For computation complexity, efforts have focused on minimizing the number of necessary operations, mainly multiplications, and on implementing those operations efficiently, as represented by signed-digit algorithms such as modified Booth coding and CSD coding. Although CSD coding has been proved optimal with respect to reducing the number of non-zero digits, it has found only limited application due to the coding complexity and the varying operation delay. For communication complexity, one attractive solution is the bit-serial architecture, which is distinguished by efficient intra- and inter-chip communication and small, tightly pipelined processing elements. Various bit-serial multipliers have been built based on modified Booth algorithms.

DEPT. OF ECE, SREEBUDDHA COLLEGE OF ENGINEERING

In the 1970s, logic systems were created by building printed circuit boards (PCBs) populated with TTL logic chips. One limitation was that as the functions got larger, the size of the logic increased and, more importantly, the number of logic levels increased, thereby compromising the speed of the design. Typically, designers used logic minimization techniques, such as those based on Karnaugh maps or Quine-McCluskey minimization, to create a sum-of-products expression, which could be implemented by generating the product terms with AND gates and summing them with an OR gate. The idea of a structure that implements this functionality directly was captured in the programmable array logic (PAL) device, introduced by Monolithic Memories in 1978. The PAL comprised a programmable AND matrix connected to a fixed OR matrix, which allowed sum-of-products structures to be implemented directly from the minimized expression.

The early FPGA structures comprised a Manhattan-style architecture in which each individual cell comprised simple logic structures and the cells were linked by programmable connections. Thus the FPGA could be viewed as comprising the following:

programmable logic units that can be programmed to realize different digital functions

programmable interconnect to allow different blocks to be connected together

programmable I/O pins.


Thus an FPGA is an integrated circuit that contains a large number of identical logic cells that can be viewed as standard components. Each logic cell can independently take on any one of a limited set of personalities. The individual cells are interconnected by a matrix of wires and programmable switches. A user's design is implemented by specifying the simple logic function for each cell and selectively closing the switches in the interconnect matrix. The array of logic cells and interconnects forms a fabric of basic building blocks for logic circuits, and complex designs are created by combining these basic blocks. Conceptually, the FPGA can be considered an array of Configurable Logic Blocks (CLBs) that can be connected together through a vast interconnection matrix to form complex digital circuits.

Recent developments in Field Programmable Gate Arrays (FPGAs) have produced a user-programmable, regular, register-rich architecture with abundant local and global connection resources. This architecture is very attractive for bit-serial and bit-level systolic processing.


CHAPTER 2

LITERATURE SURVEY

Digital Signal Processing (DSP) is used in numerous applications such as video compression, digital set-top boxes, cable modems, digital versatile disks, portable video systems, global positioning systems, biomedical processing, transmission systems, etc. The field of DSP has been driven by advances in DSP applications and in scaled very-large-scale-integrated (VLSI) technologies [1]. Over the past decades, many efforts have been made to reduce the implementation complexity of signal processors, which is measured by the area-time product. The two main aspects of this complexity are computation and communication [2].

For computation complexity, efforts have focused on minimizing the number of necessary operations, mainly multiplications, and on implementing those operations efficiently, as represented by signed-digit algorithms such as modified Booth coding [Homayoon Sam et al.] and CSD coding [3]. Although CSD coding has been proved optimal with respect to reducing the number of non-zero digits, it has found only limited application due to the coding complexity and the varying operation delay. For communication complexity, one attractive solution is the bit-serial architecture [4], which is distinguished by efficient intra- and inter-chip communication and small, tightly pipelined processing elements. The bit-serial architecture offers a great advantage over bit-parallel architectures in terms of area. Bit-parallel designs process all of the bits of an input simultaneously, at a significant hardware cost. In contrast, a bit-serial structure processes the input one bit at a time, generally using the results of the operations on the first bits to influence the processing of subsequent bits. Because all of the bits pass through the same logic, the required hardware is greatly reduced. Typically, the bit-serial approach requires 1/m of the hardware required for the equivalent m-bit parallel design.

In synchronous design, the performance of these architectures is affected by the long lines used to control the operators and by the gated clocks. Long control lines can be avoided by distributing the control circuitry locally, at the operator level. Because each non-zero digit of a fixed-point coefficient costs an adder, many researchers try to minimize the number of non-zero digits in the coefficient design phase [5]. Multipliers are expensive functional units to implement in FPGA technology, so virtually all of the methods employ techniques that avoid the need for a full multiplier [6].

High-speed digital filtering applications (for example, sample rates in excess of 20 MHz) generally require custom application-specific integrated circuits (ASICs), because programmable signal processors (such as DSPs) cannot accommodate such high sample rates without an excessive amount of parallel processing. For dedicated applications, moreover, the flexibility of a filter with high-speed multipliers is not necessary. A new high-speed CSD-coefficient FIR filter structure is presented by Zhangwen Tang, Jie Zhang and Hao Min. Through a study of CSD coefficient filters, Booth multipliers and high-speed adders, they propose a new programmable CSD encoding structure [7]. With this structure, high-speed FIR filters of any order can be implemented, and the critical path is almost independent of the number of taps. The New Synchronous pipelined Architecture [8] has the distinctive feature of being self-timed and comprises a fully interlocked pipelining structure that aims at controlling the different computational paths of a system design. The paper shows how useful this approach is in terms of chip area, low-power design and speed. Furthermore, the architecture allows different dataflow graphs to be mapped into one graph by including routers in the graph and routing information in the data packets. This offers freedom of reconfiguration within the implementation and also supports rapid system prototyping. For the design of embedded systems used for control purposes in mechatronic systems, this architecture represents a novel approach, especially as regards the distribution of controllers and data information. The platform is implemented on an FPGA. FPGAs are increasingly used for a variety of computationally intensive applications, mainly in the fields of DSP and communications. Owing to rapid advances in technology, the current generation of FPGAs contains a very high number of CLBs (Configurable Logic Blocks). The high NRE (Non-Recurring Engineering) cost and long development time of ASICs make FPGAs more attractive [9]. A serial data stream is a better match for the structure of an FPGA [10]. Thus, in an actual implementation, a fully serial circuit has a lower area cost and still runs faster than the application requires.


CHAPTER 3

FIR FILTERS BASICS

3.1 INTRODUCTION TO DSP

The processing of analogue electrical signals and digital data from one form to another is

fundamental to many electronic circuits and systems. Both analogue (voltage and current) signals

and digital (logic value) data can be processed by many types of circuits, and the task of finding

the right design is a sometimes confusing but normal part of the design process. It depends on

identifying the benefits and limitations of the possible implementations to select the most

appropriate solution for the particular scenario.

Analogue signal processing (ASP) and DSP each have their own advantages and disadvantages:

3.1.1 ANALOGUE IMPLEMENTATION:

(a)Advantages:

high bandwidth (from DC up to high signal frequencies)

high resolution

ease of design

good approach for simpler design solutions

(b)Disadvantages:

component value change occurs with component aging

component value change occurs with temperature variations

behavior variance between manufactured circuits due to component

tolerances

difficult to change circuit operation

3.1.2 DIGITAL IMPLEMENTATION:

(a)Advantages:

programmable and configurable solution (either programmed in software

on a processor or configured in hardware on a CPLD/FPGA)

operation insensitive to temperature variations


precise behavior (no behavior variance due to varying component

tolerances)

can implement algorithms that cannot be implemented in analogue

ease of upgrading and modifying the design

(b)Disadvantages:

implementation issues related to numerical calculations

requires high-performance digital processing

design complexity

higher cost

3.2 DIGITAL FILTERS

A filter is a circuit that performs some type of signal processing on a frequency-dependent basis. Filters can be realized in both analogue and digital circuits. Digital filters receive one or more discrete-time signals (signal samples) and modify these signals to produce one or more outputs. Filters pass or reject frequencies according to their required operation:

1. Low-pass filters will pass low-frequency signals but reject high-frequency signals.

2. High-pass filters will pass high-frequency signals but reject low-frequency signals.

A filter is used to modify an input signal in order to facilitate further processing. A digital filter works on a digital input (a sequence of numbers resulting from sampling and quantizing an analog signal) and produces a digital output. The filter coefficients represent the impulse response of the filter design. These coefficients, in linear convolution with the input sequence, produce the desired output. The linear convolution process can be represented as:

$y[n] = x[n] * f[n] = \sum_{k} f[k]\, x[n-k]$    (eq: 3.1)

Here, y[n] is the output of the filter and x[n] is the digital input to the filter. The impulse response of the filter is given by f[k], and the operator * denotes convolution. The extent of the summation is governed by k, which spans the impulse response of the filter. Therefore, if the filter has an infinite impulse response, the summation extends to infinity and the filter is said to be an Infinite Impulse Response (IIR) filter. A filter with a finite range of k is said to be a Finite Impulse Response (FIR) filter. The output of an FIR filter depends only on the inputs and the coefficients, and the filter described above is a linear time-invariant (LTI) filter. For a filter of order (length) L, equation (3.1) can be rewritten as:

$y[n] = \sum_{k=0}^{L-1} f[k]\, x[n-k]$    (eq: 3.2)

Fig 3.1: Schematic of an LTI filter of order L.

The coefficients of an FIR filter, as mentioned earlier, denote the impulse response of the filter. It

is imperative for any system implementation of such a filter to use a number format that

represents the coefficients to as much precision as allowed by the resource constraints.

3.2.1 INFINITE IMPULSE RESPONSE FILTER (IIR):

The infinite impulse response (IIR) filter is a recursive filter in that the output from the

filter is computed by using the current and previous inputs and previous outputs. Because the

filter uses previous values of the output, there is feedback of the output in the filter structure. The

design of the IIR filter is based on identifying the pulse transfer function G(z) that satisfies the

requirements of the filter specification. This can be undertaken either by developing an analogue


prototype and then transforming it to the pulse transfer function, or by designing directly in

digital. Fig.3.2 shows typical IIR filter architecture.

Fig:3.2: Typical Architecture of IIR Filter
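The feedback described above can be illustrated with a minimal sketch. It assumes a first-order recursion y[n] = a·y[n-1] + x[n]; the function name `iir_first_order`, the coefficient 0.5 and the zero initial state are illustrative choices, not taken from this report.

```python
def iir_first_order(x, a):
    """Recursive filter y[n] = a*y[n-1] + x[n] (illustrative first-order IIR)."""
    y = []
    prev = 0.0  # y[-1], assumed zero initial state
    for sample in x:
        prev = a * prev + sample  # feedback: the previous output is reused
        y.append(prev)
    return y

# Impulse response: a unit impulse followed by zeros
impulse = [1.0] + [0.0] * 5
h = iir_first_order(impulse, 0.5)
print(h)  # [1.0, 0.5, 0.25, 0.125, 0.0625, 0.03125]
```

Because each output feeds the next one, the response to a single impulse keeps decaying but never becomes exactly zero, which is what "infinite impulse response" refers to.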

3.2.2 FINITE IMPULSE RESPONSE FILTERS (FIR):

The finite impulse response (FIR) filter is a non-recursive filter in that the output from the filter is computed using only the current and previous inputs. It does not use previous values of the output, so there is no feedback in the filter structure. The design of the FIR filter is based on identifying the pulse transfer function G(z) that satisfies the requirements of the filter specification. This can be undertaken either by developing an analogue prototype and then transforming it to the pulse transfer function, or by designing directly in the digital domain. A non-recursive filter is always stable, and its amplitude and phase characteristics can be specified arbitrarily. However, a non-recursive filter generally requires more memory and arithmetic operations than an equivalent recursive filter. Fig. 3.3 shows a typical FIR filter architecture.


Fig:3.3: Typical FIR filter architecture

Here, the filter input is applied to a sequence of sample delays ($z^{-1}$), and the outputs from each delay (and the input itself) are applied to the inputs of multipliers. Each multiplier has a coefficient set by the filter requirements. The outputs from the multipliers are then applied to the inputs of an adder, and the filter output is taken from the output of the adder.
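The structure just described (delay line, one multiplier per tap, one adder) can be sketched in software as follows. This is an illustrative model only; the function name `fir_filter` and the coefficient values are hypothetical.

```python
from collections import deque

def fir_filter(x, coeffs):
    """Direct-form FIR: tapped delay line, one multiplier per tap, one adder."""
    delay_line = deque([0] * len(coeffs), maxlen=len(coeffs))
    y = []
    for sample in x:
        delay_line.appendleft(sample)  # newest sample enters, oldest drops out
        y.append(sum(c * d for c, d in zip(coeffs, delay_line)))
    return y

coeffs = [1, 2, 3]  # hypothetical coefficients
print(fir_filter([1, 0, 0, 0, 0], coeffs))  # [1, 2, 3, 0, 0]
```

Feeding a unit impulse returns the coefficients themselves and then zeros, which shows that the coefficients are the (finite) impulse response.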

3.3 DIFFERENT OPERATIONS IN DIGITAL FILTERS

Two transformations are commonly used to increase the performance of digital filters:

1. Pipelining transformation

2. Parallel transformation

The pipelining transformation reduces the critical path, which can be exploited either to increase the clock speed (or sample speed) or to reduce power consumption at the same speed. In parallel processing, on the other hand, multiple outputs are computed in parallel in a clock period. It can also be used to reduce power consumption.

Fig: 3.4: (a) Original Datapath (b)2 level pipelined architecture (c) 2-level parallel processing structure

Consider Fig. 3.4(a), where the computation time of the critical path is 2T_A. Fig. 3.4(b) shows the 2-level pipelined structure, where one latch is placed between the two adders and hence the critical path is halved to T_A. The 2-level parallel processing structure is shown in Fig. 3.4(c); here the same hardware is duplicated so that two inputs can be processed at the same time, and thus the sampling rate is doubled.
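The three cases of Fig. 3.4 can be summarized as a short worked relation. The symbols $T_{\text{clk}}$ (clock period) and $f_{\text{sample}}$ (sample rate) are notation assumed here for illustration; only $T_A$ appears in the text above.

```latex
\begin{align*}
\text{(a) original:}  &\quad T_{\text{clk}} \ge 2T_A, & f_{\text{sample}} &= \frac{1}{T_{\text{clk}}} \le \frac{1}{2T_A} \\
\text{(b) pipelined:} &\quad T_{\text{clk}} \ge T_A,  & f_{\text{sample}} &= \frac{1}{T_{\text{clk}}} \le \frac{1}{T_A} \\
\text{(c) parallel:}  &\quad T_{\text{clk}} \ge 2T_A, & f_{\text{sample}} &= \frac{2}{T_{\text{clk}}} \le \frac{1}{T_A}
\end{align*}
```

Pipelining doubles the sample rate by shortening the clock period, whereas the parallel structure keeps the original clock period and doubles the number of samples processed per clock.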

Another transformation technique used in digital filters is unfolding. Unfolding is a transformation that can be applied to a DSP program to create a new program describing more than one iteration of the original program; that is, unfolding a DSP program by an unfolding factor J creates a new program that describes J consecutive iterations of the original program. It is also referred to as loop unrolling.

For example, consider the DSP program

y(n) = a y(n-9) + x(n)    (eqn: 3.3)

Replacing the index n with 2k results in

y(2k) = a y(2k-9) + x(2k)    (eqn: 3.4)

Similarly, replacing the index n with 2k+1 results in

y(2k+1) = a y(2k-8) + x(2k+1)    (eqn: 3.5)

Eqns 3.4 and 3.5 together describe the 2-unfolded version of the DSP program in eqn 3.3. The equations also show that unfolding is equivalent to parallel operation. Parallel operations can be classified into two types:

1. Word-level parallel processing

2. Bit-level parallel processing

Bit-level parallel processing and digit-serial processing can be obtained from the bit-serial architecture. A similar transformation can be done for word-level parallel processing.
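The 2-unfolding of y(n) = a y(n-9) + x(n) can be checked numerically with a small sketch. It assumes zero initial conditions (y(n) = 0 for n < 0) and an even-length input; the function names and test data are hypothetical.

```python
def original(x, a):
    """y(n) = a*y(n-9) + x(n), with y(n) = 0 for n < 0 (assumed initial state)."""
    y = []
    for n in range(len(x)):
        past = y[n - 9] if n >= 9 else 0
        y.append(a * past + x[n])
    return y

def unfolded_by_2(x, a):
    """Each loop iteration k produces both y(2k) and y(2k+1)."""
    y = [0] * len(x)

    def at(i):
        return y[i] if i >= 0 else 0

    for k in range(len(x) // 2):
        y[2 * k] = a * at(2 * k - 9) + x[2 * k]          # even-indexed output
        y[2 * k + 1] = a * at(2 * k - 8) + x[2 * k + 1]  # odd-indexed output
    return y

x = list(range(1, 41))  # hypothetical input of even length
print(original(x, 3) == unfolded_by_2(x, 3))  # True
```

The unfolded loop runs half as many iterations and produces two outputs per iteration, which is the sense in which unfolding corresponds to 2-parallel processing.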

(a) Bit-serial processing:

Here one bit is processed per clock cycle and a complete word is processed in W clock cycles, as shown in Fig. 3.5.

Fig:3.5: Bit-serial processing for wordlength W = 6

(b) Bit-parallel processing:

As shown in Fig. 3.6, one word of W bits is processed per clock cycle.

Fig:3.6: Bit-parallel architecture

(c) Digit-serial processing:

Here N bits are processed per clock cycle and a word is processed in W/N clock cycles. The parameter N is referred to as the digit size (shown in Fig. 3.7).

Fig:3.7: Digit-serial architecture
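The three processing styles differ only in how many bits of a word are presented per clock cycle. The sketch below makes this concrete for W = 6; the helper `to_digits`, the example word and the least-significant-first ordering are assumptions made for illustration.

```python
def to_digits(word, W, N):
    """Split a W-bit word into W/N digits of N bits each, least significant first."""
    assert W % N == 0, "sketch assumes the digit size divides the wordlength"
    mask = (1 << N) - 1
    return [(word >> (N * i)) & mask for i in range(W // N)]

word, W = 0b101101, 6
bit_serial = to_digits(word, W, 1)    # 6 clock cycles, 1 bit each
digit_serial = to_digits(word, W, 2)  # 3 clock cycles, 2 bits each
bit_parallel = to_digits(word, W, 6)  # 1 clock cycle, whole word

print(bit_serial)    # [1, 0, 1, 1, 0, 1]
print(digit_serial)  # [1, 3, 2]
print(bit_parallel)  # [45]
```

The length of each list is the number of clock cycles needed per word: W, W/N and 1 respectively.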


CHAPTER 4

NUMBER REPRESENTATIONS

Fig:4.1 Survey of Number representations

4.1 REPRESENTATION OF NUMBERS

All signal processing applications deal with numbers. Usually in these applications an analog signal is first digitized and then processed. Each discrete number is represented and stored in N bits. Let this N-bit number be $a_{N-1} \ldots a_2 a_1 a_0$. It may be treated as signed or unsigned. There are several ways of representing a value in these N bits. When the number is unsigned, all N bits are used to express its magnitude. For signed numbers, the representation must have a way to store the sign along with the magnitude.

There are several representations for signed numbers, among them ones' complement, sign magnitude, canonic signed digit (CSD), and two's complement. In digital system design the last two representations are normally used.

4.1.1 SIGNED MAGNITUDE


In signed magnitude systems, the n - 1 lower significant bits represent the magnitude, and the most significant bit (MSB), bit $x_n$, represents the sign. For a 4-bit word, for example, the magnitude is given by the three lower significant bits and the sign by the MSB. However, this representation presents a number of problems. First, there are two representations of 0, which must be resolved by any hardware system, particularly if 0 is used to trigger an event such as an equality check. As equality is normally established by comparing bit by bit, this complicates the hardware. Second, operations such as subtraction are more complex, as there is no way to determine the sign of the result without comparing the sizes of the operands and organizing the operation accordingly.

4.1.2 ONES COMPLEMENT

In ones' complement systems, the negative of a number is obtained by inverting every bit of the number.

4.1.3 TWOS COMPLEMENT

In two's complement representation of a signed number $a_{N-1} \ldots a_2 a_1 a_0$, the most significant bit (MSB) $a_{N-1}$ represents the sign of the number. If a is positive, the sign bit $a_{N-1}$ is zero, and the remaining bits represent the magnitude of the number:

$a = \sum_{i=0}^{N-2} a_i 2^i$    (eqn: 4.1)

Therefore the two's complement representation of an N-bit positive number is equivalent to the (N-1)-bit unsigned value of the number. In this representation, 0 is considered a positive number. The range of positive numbers that can be represented in N bits is from 0 to $2^{N-1} - 1$.

For negative numbers, the MSB $a_{N-1} = 1$ has a negative weight and all the other bits have positive weights. A closed-form expression of a two's complement negative number is:

$a = -2^{N-1} + \sum_{i=0}^{N-2} a_i 2^i$    (eqn: 4.2)

Combining eqns 4.1 and 4.2 gives a single expression that covers both positive and negative numbers:

$a = -a_{N-1} 2^{N-1} + \sum_{i=0}^{N-2} a_i 2^i$    (eqn: 4.3)

It is also interesting to observe the unsigned equivalent of negative two's complement numbers. Many software and hardware simulation tools display all numbers as unsigned numbers. While displaying numbers, these tools assign positive weights to all the bits of the number. The equivalent unsigned representation of a negative number is given by:

$2^N - |a|$    (eqn: 4.4)

where |a| is the absolute value of the negative number a.
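The weighting of the bits and the unsigned equivalent of a negative number can be checked with a short sketch. The function names and the 4-bit example are hypothetical; the MSB is given the weight -2^(N-1) and all other bits positive weights, as described above.

```python
def twos_complement_value(bits):
    """bits = [a_{N-1}, ..., a_1, a_0]; the MSB carries weight -2^(N-1)."""
    N = len(bits)
    value = -bits[0] * 2 ** (N - 1)
    for i, b in enumerate(reversed(bits[1:])):
        value += b * 2 ** i
    return value

def unsigned_value(bits):
    """Same bit pattern with all weights positive, as a simulator might display it."""
    return int("".join(str(b) for b in bits), 2)

bits = [1, 0, 1, 1]              # 4-bit example
a = twos_complement_value(bits)  # -8 + 3 = -5
u = unsigned_value(bits)         # 11
print(a, u, 2 ** len(bits) - abs(a))  # -5 11 11
```

The last two printed values agree, illustrating that a negative N-bit number a is displayed as 2^N - |a| when read as unsigned.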

4.1.4 CANONIC SIGNED DIGIT NUMBER (CSD)

A signed digit number is represented with ternary digits {-1, 0, 1}. The canonic signed digit (CSD) form has the additional property that every -1 or 1 is followed by a 0 in the CSD string. It is very useful in carry-free adders and in low-complexity multipliers, since the effort of a multiplication can be estimated from the number of non-zero digits.

The following are the properties of CSD numbers:

No two consecutive digits in a CSD number are non-zero.

The CSD representation of a number contains the minimum possible number of non-zero digits, hence the name canonic.

The CSD representation of a number is unique.

CSD numbers cover the range (-4/3, 4/3), out of which the values in the range [-1, 1) are of greatest interest.

Among the W-bit CSD numbers in the range [-1, 1), the average number of non-zero digits is $W/3 + 1/9 + O(2^{-W})$. Hence, on average, CSD numbers contain about 33% fewer non-zero digits than two's complement numbers.

Conversion of a W-bit number to CSD format:

Let $A = a_{W-1}.a_{W-2} \ldots a_1 a_0$ be a two's complement number and let $\hat{a}_{W-1}.\hat{a}_{W-2} \ldots \hat{a}_1 \hat{a}_0$ denote its CSD representation. The conversion uses the following iterative algorithm, where $q_i$ and $\gamma_i$ are auxiliary bits:

a_{-1} = 0;
γ_{-1} = 0;
a_W = a_{W-1};
for (i = 0 to W-1)
{
    q_i = a_i xor a_{i-1};
    γ_i = (not γ_{i-1}) and q_i;
    â_i = (1 - 2 a_{i+1}) γ_i;
}

i               W   W-1  ...                                0   -1
a_i             1    1    0    1    1    1    0    0    1    1    0
q_i                  1    1    0    0    1    0    1    0    1
γ_i                  0    1    0    0    1    0    1    0    1    0
1 - 2a_{i+1}        -1   -1    1   -1   -1   -1    1    1   -1
â_i                  0   -1    0    0   -1    0    1    0   -1

Table 1: Example of the conversion of the number 1.01110011 to its CSD representation
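The iterative conversion can be sketched in Python and checked against the number 1.01110011 used in Table 1. The function names `to_csd` and `csd_value`, and the MSB-first list layout, are choices made for this illustration; the auxiliary bit of the previous step is assumed to enter complemented, as in the standard form of this algorithm.

```python
def to_csd(bits):
    """bits = [a_{W-1}, ..., a_0] in two's complement; returns CSD digits, MSB first."""
    W = len(bits)
    a = {i: bits[W - 1 - i] for i in range(W)}
    a[-1] = 0        # a_{-1} = 0
    a[W] = a[W - 1]  # sign extension: a_W = a_{W-1}
    gamma_prev = 0   # auxiliary bit of the previous step, initially 0
    csd = [0] * W
    for i in range(W):
        q = a[i] ^ a[i - 1]
        gamma = (1 - gamma_prev) & q  # complement of previous bit AND q
        csd[W - 1 - i] = (1 - 2 * a[i + 1]) * gamma
        gamma_prev = gamma
    return csd

def csd_value(digits):
    """Fractional value of signed digits with weights 2^0, 2^-1, 2^-2, ..."""
    return sum(d * 2.0 ** -k for k, d in enumerate(digits))

digits = to_csd([1, 0, 1, 1, 1, 0, 0, 1, 1])  # 1.01110011
print(digits)             # [0, -1, 0, 0, -1, 0, 1, 0, -1]
print(csd_value(digits))  # -0.55078125
```

The two's complement word has six non-zero bits, while its CSD form has four non-zero digits, and no two adjacent CSD digits are non-zero.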

CHAPTER 5


PIPELINED BIT SERIAL ARCHITECTURE

5.1 GENERAL BIT SERIAL ARCHITECTURE

In a bit-serial implementation, each delay element of the filter is replaced by an M-stage single-bit shift register, as shown in Fig. 5.1, where M is the wordlength of the filter. If the coefficient value is an integer power of two, the multiplier can be replaced by a barrel shifter. There is, however, a more efficient method of implementing a coefficient value that is an integer power of two. It will be shown below that moving the adder k places to the right achieves the same effect as a coefficient value of $2^{-k}$.

Fig:5.1: General Structure of Bit Serial Architecture

Consider the bit-serial summation of $S_n$ and $U_n$ to produce $V_n$, as shown in Fig. 5.2, where $S_n$, $U_n$ and $V_n$ are given by

$S_n = \sum_{m=0}^{M-1} s_n(m)\, 2^m, \quad s_n(m) \in \{0, 1\}$    (eqn: 5.1)

$U_n = \sum_{m=0}^{M-1} u_n(m)\, 2^m, \quad u_n(m) \in \{0, 1\}$    (eqn: 5.2)

$V_n = \sum_{m=0}^{M-1} v_n(m)\, 2^m, \quad v_n(m) \in \{0, 1\}$    (eqn: 5.3)

Fig: 5.2: Bit Serial Summation, vn(m - k) = sn(m) + un(m-k)

The variables $s_n(m)$, $u_n(m)$ and $v_n(m)$ do not exist for m < 0 and m > M - 1. In Fig. 5.2, $u_n(m)$ is clocked into a shift register, least significant bit first, and $s_n(m)$ is added to $u_n(m-k)$ at the k-th stage, producing $v_n(m-k)$. The adder is a single-bit full adder. Hence,

$v_n(m) = u_n(m) + s_n(m+k)$    (eqn: 5.4)

The requirements for the existence of $u_n(m)$, $v_n(m)$ and $s_n(m+k)$, and the possibility of a carry being generated, are ignored for the moment; they are discussed in the next subsection. Multiplying both sides of eqn 5.4 by $2^m$ and summing from m = 0 to M - 1, we have

$V_n = U_n + 2^{-k} \sum_{m=k}^{M-1+k} s_n(m)\, 2^m$    (eqn: 5.5)

Because $s_n(m)$ does not exist for m > M - 1, the sum effectively runs from m = k to M - 1, so that

$V_n = U_n + 2^{-k} S_n - 2^{-k} \sum_{m=0}^{k-1} s_n(m)\, 2^m$    (eqn: 5.6)

$V_n = U_n + 2^{-k} S_n$, if $s_n(m) = 0$ for $m = 0, \ldots, k-1$    (eqn: 5.7)

Suppose that $S_n$ is derived from an R-bit data word $D_n$, where

$D_n = \sum_{m=M-R}^{M-1} r(m)\, 2^m, \quad r(m) \in \{0, 1\}$ and $R \le M$    (eqn: 5.8)

If $S_n = D_n$, then necessarily $s_n(m) = 0$ for m = 0, ..., M - R - 1. Thus, if $M - R \ge k$, then $V_n = U_n + 2^{-k} S_n$. We shall refer to R as the input signal wordlength. Note that k is the equivalent coefficient wordlength. Hence, if the signal wordlength plus the coefficient wordlength is less than or equal to the internal wordlength of the filter, the coefficient multiplier $2^{-k}$ can be implemented using eqn 5.4.
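The effect of tapping the shift register k places along can be checked with a small bit-level sketch. Unlike the derivation above, which ignores carries, this model includes a saved carry so that the result can be compared with ordinary integer arithmetic; the function names and the 8-bit example values are hypothetical.

```python
def bit(word, m, M):
    """Bit m of an M-bit word; bits outside 0..M-1 do not exist (treated as 0)."""
    return (word >> m) & 1 if 0 <= m < M else 0

def serial_add_shifted(U, S, k, M):
    """Form v(m) from u(m) and s(m+k) bit by bit, LSB first, with a saved carry."""
    V, carry = 0, 0
    for m in range(M):
        total = bit(U, m, M) + bit(S, m + k, M) + carry
        V |= (total & 1) << m
        carry = total >> 1
    return V  # a final carry out of bit M-1 is dropped in this sketch

M, k = 8, 3
S = 0b01101000  # low k bits are zero, so 2^-k * S is exact
U = 0b00010110
V = serial_add_shifted(U, S, k, M)
print(V, U + S // 2 ** k)  # 35 35
```

When the k low-order bits of S are zero, V equals U + 2^-k S exactly. Otherwise the low-order bits of S are simply discarded, which corresponds to the extra sum term that is subtracted in the general case.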

5.2 BIT SERIAL PIPELINED ARCHITECTURE

A pipelinable bit-serial multiplier that uses Canonic Signed Digit (CSD) code to represent constant coefficients is introduced. Bit-serial operation processes the input one bit at a time. The bit-serial architecture therefore simplifies the hardware, since all of the bits pass through a single one-bit-wide module. Moreover, a bit-serial architecture can operate at a higher clock frequency than a bit-parallel architecture because it has no carry propagation chain. In FPGAs, signal routing delay is a significant factor, and it is no exaggeration to say that this delay determines system performance. A bit-serial architecture tends to have very localized routing, often to only a few local destinations. In contrast, a bit-parallel architecture requires many modules, so the routing resources are often insufficient (low utilization) or the resulting design is too slow (large routing delay). The bit-serial architecture is therefore more efficient in an FPGA with limited routing resources. In the add operation, the two input words are shifted in simultaneously, starting from the LSB (Least Significant Bit). The carry is stored in a register and then fed back to the carry input of the full adder. Unlike in bit-parallel operation, carry propagation does not occur (the carry is saved). The output of the circuit is stored in registers.
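The add operation described above (one full adder plus a carry register, operands arriving LSB first) can be modelled in a few lines. This is a behavioural sketch, not a description of the actual FPGA circuit; the helper names and the 8-bit wordlength are assumptions.

```python
def bit_serial_add(a_bits, b_bits):
    """Add two LSB-first bit streams with one full adder and one carry register."""
    carry = 0  # carry flip-flop, cleared before each word
    out = []
    for a, b in zip(a_bits, b_bits):
        s = a ^ b ^ carry                    # full-adder sum output
        carry = (a & b) | (carry & (a ^ b))  # carry saved for the next clock
        out.append(s)
    return out

def to_lsb_first(x, W):
    return [(x >> i) & 1 for i in range(W)]

def from_lsb_first(bits):
    return sum(b << i for i, b in enumerate(bits))

W = 8
total = from_lsb_first(bit_serial_add(to_lsb_first(45, W), to_lsb_first(27, W)))
print(total)  # 72
```

Each loop iteration stands for one clock cycle, so the carry travels only from one cycle to the next instead of rippling through W adder stages within a single cycle.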

The CSD coding technique, similar to Booth coding, is a signed-digit notation in which each digit takes one of three possible values, {1, 0, $\bar{1}$}, where $\bar{1}$ represents -1. CSD code has the property that it is unique (canonic) and requires the minimal number of non-zero digits in its representation [4]. Converting a two's complement code (or any other non-signed-digit code) into CSD code takes a value-dependent number of iteration steps. This has been the main obstacle to its application in multiplier designs. For fixed-coefficient multiplication, which is the most common case in digital filters, the conversion can be carried out in advance and the coefficients can be treated as a constant vector of digits from the set {1, 0, $\bar{1}$}.
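The benefit of a precomputed CSD coefficient can be illustrated with a small sketch in which each non-zero digit costs one addition or subtraction of a shifted copy of the data. For simplicity it uses an integer coefficient rather than the fractional format of this report; the function name and the example constant 7 are hypothetical.

```python
def multiply_by_csd(x, csd_digits):
    """Multiply integer x by a constant given as CSD digits, LSB first.

    Each non-zero digit costs one add or subtract of a shifted copy of x.
    """
    product, add_sub_count = 0, 0
    for i, d in enumerate(csd_digits):
        if d == 1:
            product += x << i
            add_sub_count += 1
        elif d == -1:
            product -= x << i
            add_sub_count += 1
    return product, add_sub_count

# 7 is 0111 in binary (three non-zero bits) and 1 0 0 -1 in CSD (two non-zero digits)
csd_7 = [-1, 0, 0, 1]  # LSB first: -1*2^0 + 1*2^3
result, ops = multiply_by_csd(13, csd_7)
print(result, ops)  # 91 2
```

Because the digit vector is fixed in advance, the value-dependent conversion cost never appears at run time; only the two shift-and-add/subtract steps remain.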


Assume, without loss of generality, that both the data and the coefficients before CSD coding are in n-bit two's complement representation, as fractional numbers in the range -1 < x < 1. A multiplication then generates a result with a precision of 2n - 1 bits, of which only the n most significant bits (MSBs) are kept to represent a rounded product in a pipelined multiplier.

Fig:5.3: Bit serial pipeline multiplier

A bit-serial multiplier for a CSD-coded coefficient can be derived from the initial bit-serial multiplier of Fig:5.1, in which the coefficient bits are applied in parallel, Least Significant Bit (LSB) first (LSB to the leftmost module), and the data arrive as an LSB-first stream. The coefficient bits are then replaced by CSD-coded digits, as shown in Fig:5.2 for coefficient digits of -1, 0 and 1 respectively. Carry set/reset and sign extension, obtained with an extra flip-flop, are provided to allow two's complement computation. On the k-th step, each accumulated partial product is truncated to k - 1 bits, while the corresponding carries are saved for the next accumulation. Rounding is obtained by adding an offset of 1 at the (n - 1)-th LSB and truncating at the corresponding step.
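
The word-level effect of this rounding rule can be checked with ordinary integer arithmetic. The sketch below assumes n-bit two's complement fractions stored as integers scaled by 2^(n-1). The function name `rounded_product` and this scaling convention are assumptions for illustration; the bit-serial hardware applies the offset and truncation during accumulation rather than in one operation.

```python
def rounded_product(x_int, c_int, n):
    """Multiply two n-bit fractions (integers scaled by 2**(n-1)) and keep the n MSBs, rounded."""
    full = x_int * c_int              # exact product, scaled by 2**(2n-2)
    offset = 1 << (n - 2)             # a 1 at the (n-1)-th LSB: half of the kept LSB
    return (full + offset) >> (n - 1)  # arithmetic shift discards the n-1 low bits
```

With n = 8, the fractions 0.5 and 0.75 are stored as 64 and 96, and the function returns 48, which is 0.375 in the same scaling.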

If the modules are cascaded through the so -> si terminals in every stage except the final one (Fig:5.4), where the final product comes out of the ls terminal, the multiplier has a desirable latency of n bit-times (one wordlength). However, the performance is degraded by the partial-sum propagation delay if the CSD-coded coefficient has relatively many non-zero digits. An alternative is to connect the modules through the ls -> si terminals to allow an increased bit rate, and to insert registers in both the data path and the synchronizing-signal path to align the partial-product accumulation. This also doubles the latency of the multiplier.

Fig:5.4: Bit-serial modules for CSD coded coefficient multiplier

5.3 OPERATION MODULES IN FIR FILTER DESIGN

A prominent property of FIR filters is that linear phase response can be obtained by

imposing symmetry or anti-symmetry conditions on the coefficients:

$$h(i) = \pm h(N-1-i), \qquad i = 0, 1, \ldots, N-1 \qquad \text{(eqn. 5.9)}$$

This linear-phase property also has an implementation advantage: only about half the number of multipliers is required, since the system function can be written, for N even and N odd respectively, as:
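
Substituting eqn. 5.9 into the general FIR system function gives the folded forms below. This is a standard reconstruction offered as a sketch: the symbol $H(z)$ and the summation limits are assumed notation rather than taken from the report, and the sign $\pm$ is $+$ for symmetric and $-$ for anti-symmetric coefficients.

$$H(z) = \sum_{i=0}^{N-1} h(i)\, z^{-i}$$

For N even:

$$H(z) = \sum_{i=0}^{N/2-1} h(i)\left[z^{-i} \pm z^{-(N-1-i)}\right]$$

For N odd:

$$H(z) = \sum_{i=0}^{(N-3)/2} h(i)\left[z^{-i} \pm z^{-(N-1-i)}\right] + h\!\left(\tfrac{N-1}{2}\right) z^{-(N-1)/2}$$

Each bracket needs a single multiplication by $h(i)$ after the two delayed samples are added or subtracted, so about N/2 multipliers suffice. In the anti-symmetric case with N odd, eqn. 5.9 forces the centre coefficient to zero and the last term vanishes.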

Fig:5.5: $a(x + y)z^{-1}$ operator

Fig:5.6: $a(x - y)z^{-1}$ operator

Direct use of the bit-serial multiplier described above in FIR filter design would not only cause some hardware redundancy but also introduce extra latency in the processing. As can be seen from the above equations, the most efficient implementation of an FIR filter needs a module that calculates $a(x \pm y)z^{-1}$, as Fig:5.3 shows. The CSD multiplier modules of the previous section can be further extended to include the operations 1*(x + y), -1*(x + y) and 1*(x - y). A 1*(x - y) module is shown in Fig:4. The 1*(x + y) and -1*(x + y) modules are similar, except for minor differences

in the carry set/reset circuitry. A -1*(x - y) module is not necessary: since -1*(x - y) = 1*(y - x), the operation can be accomplished simply by exchanging the x and y terminals of the 1*(x - y) module.

Note that the signals applied to these modules must be scaled down by half before the multiplication takes place, to prevent overflow in the addition.
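
The saving from the pre-addition can be illustrated with a small software model. The sketch below compares a direct-form FIR with a folded form that adds (or subtracts) the two samples sharing a coefficient before a single multiplication. The function names `fir_direct` and `fir_folded` and the `sign` parameter are assumptions made for this example. Python integers do not overflow, so the scaling by half mentioned above is not modelled.

```python
def fir_direct(h, x):
    """Direct-form FIR: y[n] = sum_i h[i] * x[n - i], with zero initial state."""
    N = len(h)
    return [sum(h[i] * x[n - i] for i in range(N) if n - i >= 0)
            for n in range(len(x))]


def fir_folded(h, x, sign=+1):
    """Linear-phase FIR with h[i] = sign * h[N-1-i]: pre-add tap pairs, one multiply per pair."""
    N = len(h)

    def tap(n, i):
        # Delayed input sample x[n - i]; samples before the start are zero.
        return x[n - i] if n - i >= 0 else 0

    y = []
    for n in range(len(x)):
        acc = 0
        for i in range(N // 2):
            acc += h[i] * (tap(n, i) + sign * tap(n, N - 1 - i))
        if N % 2 == 1:
            acc += h[N // 2] * tap(n, N // 2)   # centre tap has no partner
        y.append(acc)
    return y
```

For a 5-tap symmetric filter the folded form performs three multiplications per output sample instead of five, and both functions return the same output sequence.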

A bit-serial multiplier can produce a 2N-bit double-precision product every N clock cycles for N-bit inputs. Bit-serial double-precision data is carried on two wires. In both cases the output is bit-serial, also LSB first. This structure is well suited to FPGAs because its routing, including the inputs and outputs, runs only to nearest neighbours. The number of cells is proportional to the input data width.

5.4 IMPLEMENTATION OVER FPGA

Field-programmable gate arrays (FPGAs) emerged as a simple glue-logic technology, providing programmable connectivity between major components, where the programmability was based on antifuse, EPROM or SRAM technologies (Maxfield 2004). An FPGA is a device that consists of thousands or even millions of transistors connected to perform logic functions. FPGAs perform functions ranging from simple addition and subtraction to complex digital filtering and error detection and correction. Aircraft, automobiles, radar, missiles and computers are just some of the systems that use FPGAs. A main benefit of using FPGAs is that design changes need not have an impact on the external hardware. Under certain circumstances an FPGA design change can affect the external hardware (e.g., the printed wiring board), but for the most part this is not the case.

The FPGA architecture is based on a regular array of basic programmable logic cells (LCs) and a programmable interconnect matrix surrounding the logic cells. Together, the array of logic cells and the interconnect matrix form the core of the FPGA, which is surrounded by programmable I/O cells. The programmable interconnect is placed in routing channels. The specific design details within each of the main functions (logic cells, programmable interconnect and programmable I/O) vary among vendors.

Fig:5.7: Generic FPGA Architecture

The high density of current FPGAs makes the construction of custom DSP (CDSP) single-chip systems possible. The advantages of this approach are multiple:

- A higher speed than the general-purpose DSP solution can be obtained.
- The FPGA reprogrammability can be exploited to construct reconfigurable systems.
- Future DSP ASICs can be fast-prototyped, different design options can be emulated, and exhaustive or heterogeneous simulations can be avoided.
- Off-chip interconnections and external components like FIFOs or RAMs can be integrated in the embedded application.
- Hard-wired DSP cores can be simplified and optimised for a given application (data rate and precision, peculiarities of the coefficients, etc.).

A custom solution also allows the designer to select different types of arithmetic and styles of implementation. In several cases it makes little sense to use conventional bit-parallel circuits: they carry a significant cost in area and run faster than the speed needed by the

application. Digit-serial architectures thus become an important alternative for efficiently implementing a wide range of real-time signal processing circuits. The digit-serial approach allows the designer to select an intermediate area-time figure, situated between the bit-parallel and the bit-serial implementations. In addition, a serial stream of data matches the structure of an FPGA better; thus, in an actual implementation, the speed of a fully serial circuit is not N times lower than that of the equivalent N-bit parallel approach.

The efficiency of the bit serial architecture is demonstrated by implementation on

commercially available field programmable gate arrays (FPGAs), in particular the Xilinx

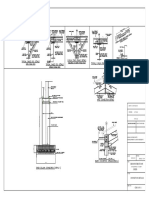

XC3100 series components. This approach is illustrated in Fig:5.8, where the interconnection

pattern for a typical filter tap is shown. Each block corresponds to a single configurable logic

block (CLB), and most data signals are routed locally. Control signals for implementing sign

extension are distributed using the horizontal long lines of the array (denoted by the dotted lines)

and are applied to the input signal in the centrally located control block. Automatic generation of

the FPGA configuration information starting from the specification of the filter response is

straightforward, as only the interconnection pattern of the adder and delay element inputs change

with filter characteristics.

Fig: 5.8: FPGA Filter Tap Implementation

CHAPTER 6

PERFORMANCE ANALYSIS

Table 2: Implementation results

All circuits exhibit a similar throughput (Fig. 6.1). The achieved sample frequency ranges from 6 MHz in the bit-serial version to 25 MHz in the bit-parallel one. The filter clock rates for digit sizes of 1, 2 and 4 bits are shown in Fig. 6.2; the main differences among the designs appear at small digit sizes. The canonical forms always achieve a higher frequency than the inverted ones. Furthermore, the maximum values correspond to the anti-symmetrical and symmetrical canonical forms, since their occupation level is lower than that of the other structures. If larger digit sizes are considered, however, those differences disappear. The higher delay of the global lines is masked in densely occupied FPGAs; in that case, even local wires can have a higher interconnection delay than global ones. The results show a general property of FPGA implementations: the smaller the circuit, the faster the clock rate.

Fig:6.1: throughput vs digit size

Fig:6.2: filter clock rate vs digit size

The relative speedup of each circuit with respect to its bit-serial version is depicted in Fig. 6.3. The speed increase achieved by the bit-parallel versions ranges from 3 times that of the bit-serial version in the anti-symmetric canonical structure to 4 times in the symmetric inverted topology. The area is reduced by nearly half when symmetrical structures are used. For small digit sizes, the canonical-form filters use fewer FPGA resources than the inverted-form ones. This difference in chip occupation is mainly caused by the registers used to implement the delays. In the inverted form the triple-precision data has to be delayed, and the number of registers needed is (P-1)*3*(M-1). In the canonical form only the input data has to be delayed, giving a register count of 8*(M-1). ... be 8 times higher. This effect is a consequence of the routing process in the FPGA. In circuits with a large digit size, a large set of paths exists, and each of them can become the critical path after implementation. The limited interconnection resources of the FPGA make it difficult for the router to assign a low delay to each of these paths. In contrast, relatively few paths determine the delay in serial circuits.

Fig:6.3: relative speed

Fig:6.4: A-T vs digit size

Fig. 6.4 shows the area-time product of the circuits for each digit size. The area is measured as the number of logic elements (LEs), and the time is the sample period, i.e. the inverse of the throughput. As can be seen, the canonical forms are more efficient than the inverted ones for all digit sizes. Considering only the canonical-form filters, those with a 2-bit digit size have a better area-time product than those with 1-bit and 4-bit digit sizes. For the inverted forms this is not the case: they become more efficient as the digit size increases.

6.1 PERFORMANCE MEASURES

A DSP implementation is subject to various performance measures. These are important

for comparing design tradeoffs.

6.1.1 ITERATION PERIOD

For a single-rate signal processing system, an iteration of the algorithm acquires a sample

from an A/D converter and performs a set of operations to produce a corresponding output

sample. The computation of this output sample may depend on current and previous input

samples, and in a recursive system the earlier output samples are also used in this calculation.

The time it takes the system to compute all the operations in one iteration of an algorithm is

called the iteration period. It is measured in time units or in number of cycles. For a generic

digital system, the relationship between the sampling frequency fs and the circuit clock

frequency fc is important.

In designs where fc>fs, the iteration period is measured in terms of the number of clock

cycles required to compute one output sample. This definition can be trivially extended for multirate systems.
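
The relationship between fc and fs can be made concrete with a short calculation. The sketch below is illustrative only: the function names are hypothetical, the numbers in the usage note are made up rather than taken from the report's results, and the digit-serial case assumes that a word of `word_bits` bits is consumed `digit_bits` bits per clock with no extra control cycles.

```python
import math


def cycles_per_sample(f_clock_hz, f_sample_hz):
    """Clock cycles available to compute one output sample when fc >= fs."""
    return f_clock_hz / f_sample_hz


def digit_serial_sample_rate(f_clock_hz, word_bits, digit_bits):
    """Sample rate when each word is processed digit_bits bits per clock cycle."""
    cycles_per_word = math.ceil(word_bits / digit_bits)
    return f_clock_hz / cycles_per_word
```

With a hypothetical 100 MHz clock and 16-bit words, a bit-serial design (digit size 1) would sustain 6.25 Msamples/s, a 4-bit digit size 25 Msamples/s, and a bit-parallel design 100 Msamples/s, provided the clock rate could be held constant, which the results of Chapter 6 show is not the case in practice.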

6.1.2 SAMPLING PERIOD AND THROUGHPUT

The sampling period Ts is defined as the average time between two successive data samples. Its reciprocal gives the number of samples per second of the signal. The required sampling rate or frequency (fs = 1/Ts) is specific to an application and constrains the designer to produce hardware that can process the data input to the system at this rate. Often this constraint requires the designer to minimize critical path delays. These can be reduced by using more optimized computational units or by adding pipelining delays in the logic. The pipelining delays add latency to the design. In designs where fs < fc, the digital designer explores avenues of resource sharing for optimal reuse of computational blocks.

6.1.3 LATENCY

Latency is defined as the time delay for the algorithm to produce an output y[n] in response to an input x[n]. In many applications the data is processed in batches: the data is first acquired in a buffer and then passed on for processing. This acquisition of data adds further latency in producing the outputs for a given set of inputs. Besides algorithmic delays, pipelining registers are the main source of latency in a fully dedicated architecture (FDA). In DSP applications, minimization of the critical path is usually considered more important than reducing latency. There is usually an inverse relationship between critical path and latency. In order to reduce the critical

path, pipelining registers are added that result in an increase in latency of the design. Reducing a

critical path helps in meeting the sampling requirement of a design.
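
The inverse relationship between critical path and latency can be illustrated with a toy model. The sketch below treats a datapath as a chain of combinational stages with given delays and places pipeline registers after selected stages. The function name `pipeline_tradeoff`, the delay values and the neglect of register setup and clock-to-output times are all assumptions made for this example.

```python
def pipeline_tradeoff(stage_delays_ns, cut_after):
    """Return (critical path in ns, added latency in cycles) for registers after the given stages."""
    segments = []
    start = 0
    for cut in sorted(cut_after):
        segments.append(sum(stage_delays_ns[start:cut + 1]))  # logic between two registers
        start = cut + 1
    segments.append(sum(stage_delays_ns[start:]))             # logic after the last register
    critical_path_ns = max(segments)
    latency_cycles = len(segments) - 1   # one extra cycle per pipeline register stage
    return critical_path_ns, latency_cycles
```

For stage delays of 2, 3, 4 and 1 ns, the unpipelined chain has a 10 ns critical path and no added latency; one register after the second stage halves the critical path to 5 ns at the cost of one cycle of latency.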

6.1.4 POWER DISSIPATION

Power is another critical performance parameter in digital design. The subject of

designing for low power use is gaining more importance with the trend towards more handheld

computing platforms as consumer devices. There are two classes of power dissipation in a digital

circuit, static and dynamic. Static power dissipation is due to the leakage current in digital logic,

while dynamic power dissipation is due to all the switching activity. It is the dynamic power that

constitutes the major portion of power dissipation in a design. Dynamic power dissipation is

design-specific whereas static power dissipation depends on technology.

In an FPGA, the static power dissipation is due to the leakage current through reverse-biased diodes. On the same FPGA, the dynamic power dissipation depends on the clock frequency, the supply voltage, the switching activity and the resource utilization. For example, the dynamic power consumption $P_d$ in a CMOS circuit is:

$$P_d = \alpha\, C\, V_{DD}^{2}\, f \qquad \text{(eqn. 6.1)}$$

where $\alpha$ and $C$ are, respectively, the switching activity and the physical capacitance of the design, $V_{DD}$ is the supply voltage and $f$ is the clock frequency. Power is a major design consideration, especially for battery-operated devices.
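
Eqn. 6.1 is easy to evaluate numerically. The sketch below is a direct transcription of the formula; the function name `dynamic_power_watts` and the values in the usage note are hypothetical and do not describe any particular device.

```python
def dynamic_power_watts(alpha, capacitance_f, vdd_v, f_clock_hz):
    """Dynamic CMOS power: switching activity * capacitance * VDD squared * clock frequency."""
    return alpha * capacitance_f * vdd_v ** 2 * f_clock_hz
```

With an assumed switching activity of 0.1, a capacitance of 1 nF, a 1.0 V supply and a 100 MHz clock, the formula gives about 10 mW. Because the supply voltage enters squared, halving it to 0.5 V reduces the dynamic power to a quarter for the same activity and clock.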

In many digital signal processing (DSP) and communication algorithms, a large proportion of the multiplications are by constants. For example, finite impulse response (FIR) and infinite impulse response (IIR) filters are realized by difference equations with constant coefficients. In image compression, the discrete cosine transform (DCT) and inverse discrete cosine transform (IDCT) are computed using data that is multiplied by cosine values that have been pre-computed and implemented as multiplication by constants. The same is the case for fast Fourier transform (FFT) and inverse fast Fourier transform (IFFT) computation. For a fully dedicated architecture (FDA), where multiplication by a constant is mapped on a dedicated

multiplier, the complexity of a general-purpose multiplier is not required. The binary representation of a constant clearly shows the non-zero bits that require the generation of partial products (PPs), whereas the bits that are zero can be ignored in PP generation. Representing the constant in canonic signed digit (CSD) form can further reduce the number of partial products, as the CSD representation of a number has the minimum number of non-zero digits. All the constant multipliers in an algorithm start out in double-precision floating-point format. These numbers are first converted to an appropriate fixed-point format. When the algorithm is mapped to hardware as an FDA, the fixed-point numbers are then converted into CSD representation.
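
The two conversion steps, floating point to fixed point and fixed point to CSD, can be sketched as follows, together with a count of the partial products each representation would need. The function names, the choice of 7 fractional bits and the coefficient value are assumptions for illustration; Python's built-in `round` is used for quantization, which rounds exact ties to the nearest even integer.

```python
def quantize(coeff, frac_bits):
    """Round a real coefficient to a signed fixed-point integer with frac_bits fractional bits."""
    return round(coeff * (1 << frac_bits))


def csd_digits(value):
    """CSD digits of an integer, least significant first (each digit is -1, 0 or 1)."""
    digits = []
    while value != 0:
        if value & 1:
            digit = 2 - (value & 3)   # +1 if value mod 4 == 1, -1 if value mod 4 == 3
            value -= digit
        else:
            digit = 0
        digits.append(digit)
        value >>= 1
    return digits


def binary_ones(value, width):
    """Number of non-zero bits in the width-bit two's complement pattern of value."""
    return bin(value & ((1 << width) - 1)).count("1")


def csd_nonzeros(value):
    """Number of non-zero digits (partial products) in the CSD form of value."""
    return sum(1 for d in csd_digits(value) if d != 0)
```

For the coefficient 0.9375 with 7 fractional bits, the fixed-point value is 120 (binary 01111000), which has four non-zero bits, while its CSD form 128 - 8 has only two non-zero digits, so two partial products suffice instead of four.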

CONCLUSIONS

For years, numerous efforts have been made to reduce the implementation complexity of signal processors. One such method has been presented here: a pipelinable bit-serial multiplier that uses Canonic Signed Digit (CSD) code to represent constant coefficients, which reduces the implementation complexity of signal processors as measured by the Area-Time (AT) product. This architecture has the peculiar feature of being self-timed and comprises a fully interlocked pipelining structure which aims at controlling the different computational paths of a system design. We have shown how useful this approach is in terms of chip area, low-power design and speed. Furthermore, the architecture allows different dataflow graphs to be mapped into one graph by including routers in the graph and routing information in the data packets. This offers freedom of reconfiguration within the implementation and also supports rapid system prototyping. For the design of embedded systems to be applied for control purposes in mechatronic systems, this architecture represents a novel approach, especially as regards the distribution of controllers and data information. One example is the automotive industry, where performance, space, cost, size and weight are of vital importance. With the architecture described it is possible to implement different controllers on different hierarchical levels (e.g., in cascade control) on one or more chips in a transparent way. In this seminar it has been shown that the FPGA architecture is an ideal vehicle for such optimized bit-serial processing. FPGAs are therefore increasingly used in Digital Signal Processing (DSP) and communication for a variety of computationally intensive applications.

REFERENCES

[1] Keshab K. Parhi, VLSI Digital Signal Processing Systems: Design and Implementation, 2nd ed., Chap. 13, pp. 477-518.

[2] Shousheng He and Mats Torkelson, FPGA Implementation of FIR Filters Using Pipelined Bit-Serial Canonical Signed Digit Multipliers, IEEE Custom Integrated Circuits Conference, July 1994, pp. 81-84.

[3] Jeffrey O. Coleman and Arda Yurdakul, Fractions in the Canonical-Signed-Digit Number System, IRE Trans. Electron. Comp., vol. EC-10, pp. 389-400, Aug. 1961.

[4] D.S. Dawoud and S. Masupa, Design and FPGA Implementation of Digit-Serial FIR Filters, South African Institute of Electrical Engineers, Vol. 97(3), September 2006, pp. 216-222.

[5] Chia-Yu Yao and Chung-Lin Sha, Fixed-point FIR Filter Design and Implementation in the Expanding Subexpression Space, Jan. 2010, pp. 185-188.

[6] Chris H. Dick and Fred Harris, Implementing Narrow-Band FIR Filters Using FPGAs, Nov. 1996, pp. 289-292.

[7] Zhangwen Tang, Jie Zhang and Hao Min, A High-Speed, Programmable, CSD Coefficient FIR Filter, IEEE Transactions on Consumer Electronics, Vol. 48, No. 4, November 2002, pp. 834-837.

[8] Achim Rettberg, Mauro Zanella, Thomas Lehmann and Christophe Bobda, A New Approach of a Self-Timed Bit-Serial Synchronous Pipeline Architecture, 14th IEEE International Workshop on Rapid Systems Prototyping (RSP03), Sept. 2003, pp. 203-207.

[9] FPGA Implementation of High Speed FIR Filters Using Add and Shift Method, Centre for Dynamics and Control of Smart Structures, http://www.google.com

[10] Javier Valls, Marcos M. Peir, Trini Sansaloni and Eduardo Boemo, A Study about FPGA-based Digital Filters, IRE Trans. Electron. Comp., vol. EC-13, pp. 389-400, Aug. 2003.

[11] Roger Woods, John McAllister, Gaye Lightbody and Ying Yi, FPGA-based Implementation of Signal Processing Systems, John Wiley and Sons, Ltd.

[12] Shoab Ahmed Khan, Digital Design of Signal Processing Systems: A Practical Approach, First Edition, Chap. 1-2, pp. 1-37, John Wiley & Sons, Ltd., 2011.

[13] R. Woods, J. McAllister, G. Lightbody and Y. Yi, FPGA-based Implementation of Signal Processing Systems, John Wiley & Sons, Ltd., 2008.

[14] Shousheng He and Mats Torkelson, FPGA Implementation of FIR Filters Using Pipelined Bit-Serial Canonical Signed Digit Multipliers, IEEE Custom Integrated Circuits Conference, 1994.

[15] Achim Rettberg, Mauro Zanella, Thomas Lehmann and Christophe Bobda, A New Approach of a Self-Timed Bit-Serial Synchronous Pipeline Architecture, 14th IEEE International Workshop on Rapid Systems Prototyping (RSP03), July 2003, pp. 1-7.
