
Out Of Order Floating Point Coprocessor For RISC V ISA

Vinayak Patil, Aneesh Raveendran, Sobha P M, A David Selvakumar, Vivian D

Centre for Development of Advanced Computing, Bangalore, INDIA

{vinayakp, raneesh, sobhapm, david, vivand}@cdac.in

Abstract: Floating point operations are an important and essential part of many scientific and engineering applications. A floating point coprocessor (FPU) performs operations such as addition, subtraction, division, square root, multiplication, fused multiply and accumulate, and compare. Floating point operations are part of the ARM, MIPS, RISC-V and other instruction sets. The FPU can be part of the hardware or be implemented in software. This paper details an architectural exploration of a floating point coprocessor enabled with RISC-V [1] floating point instructions. The floating point unit designed for the RISC-V floating point instructions is also fully compatible with the IEEE 754-2008 standard. The coprocessor handles both single and double precision floating point operands with out-of-order execution and in-order commit. The front end of the floating point processor accepts three data operands, a rounding mode and the associated opcode fields for decoding. Each floating point operation is tagged with an instruction token for in-order completion and commit. The output of the floating point unit is tagged as a single or double precision result, along with floating point exceptions, if any, based on the RISC-V instruction set. The floating point coprocessor integrates with the integer pipeline. The proposed architecture, with out-of-order execution, in-order commit and completion/retire, has been synthesized, tested and verified on a Xilinx Virtex 6 xc6vlx550t-2ff1759 FPGA. A system frequency of 240 MHz for single precision and 180 MHz for double precision floating point operations has been observed on the FPGA.

Index Terms: IEEE 754 floating point standard, floating point co-processor, RISC processor, RISC-V instruction set, microprocessor.

I. INTRODUCTION

Many scientific and engineering applications require floating point arithmetic. Applications range from climate modeling, supernova simulations, electromagnetic scattering theory, computational geometry and grid generation [2] to image processing, FFT calculation [3], matrix arithmetic, eigenvalue calculation [4], Jacobi solvers [5], and so on. To support and accelerate these applications, high performance computing devices that deliver high throughput are required. Floating point coprocessors drastically increase the performance of these computation-heavy applications. In most modern general purpose computer architectures, such as ARM and MIPS, the floating point coprocessor is integrated within the processor chip. The FPU acts as a kind of accelerator and works in parallel with the integer pipeline. It offloads computationally heavy, high latency floating point instructions from the main processor. If these instructions were executed by the main processor, they would slow it down, because operations such as floating point division, square root, and multiply and accumulate require a large amount of computation and have long latency. Furthermore, out-of-order execution in the floating point coprocessor helps to speed up the whole chip. Standardized methods to represent floating point numbers have been instituted by the IEEE 754 standard, through which floating point operations can be carried out with modest storage requirements [6].

The three basic components in IEEE 754 are sign (S), exponent (E) and mantissa (M). Bit widths and bit allocations are shown in TABLE I.

TABLE I : FLOATING POINT BIT REPRESENTATION

| Precision | Sign | Exponent | Mantissa | Bias |
|---|---|---|---|---|
| Single | 1 [31] | 8 [30:23] | 23 [22:0] | 127 |
| Double | 1 [63] | 11 [62:52] | 52 [51:0] | 1023 |

Number = $(-1)^{S} \times (H.M) \times 2^{E - \mathrm{Bias}}$, where H is the hidden bit.

The IEEE 754-2008 standard supports all floating point operations. It handles all special inputs such as sNaN, qNaN, +Infinity, -Infinity, positive zero and negative zero. It handles five exceptions, namely overflow, underflow, invalid, inexact and division by zero. It supports five different rounding modes.

II. RELATED WORKS

Computationally intensive algorithms and simulations such as Monte Carlo simulations [7] [8] [9], finite element analysis [10] [11], eigenvalue calculation [4] and Jacobi solver analysis [5] require a large number of floating point operations. Many embedded processors, especially older designs, do not have hardware support for floating point operations. In the absence of a hardware FPU, many FPU functions can be emulated through software libraries [12], which save the added hardware cost of an FPU but are significantly slower. To improve performance, a hardware implementation of the floating point unit is needed.

Many efforts have been made to design floating point coprocessors, but many of these designs do not have wide precision support. Some designs support only single precision [13] [14]. Some designs have limited arithmetic operation support, such as only MAC [15], or only addition, subtraction and division [14].

978-1-4799-1743-3/15/$31.00 ©2015 IEEE

[Fig. 1 block labels: Instruction Fetch, Decode, Register Read, Integer Execute, Memory Unit, Write back, Integer Reg file, Floating point Reg File, Floating point Co-Processor]

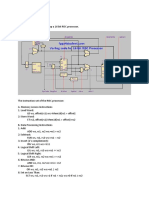

Fig. 1. Top Level Architecture For RISC V Processor

An FPU with the SPARC architecture has been implemented in [16]; it considers only addition, multiplication and division operations. Some effort has been made on an FPU for a RISC architecture [17], but that work considers only single precision operations, lacks a major operation such as fused multiply and accumulate, and reports a system frequency of 70 MHz.

This paper implements a floating point coprocessor that supports both single and double precision. It also supports a wide range of arithmetic operations: addition, subtraction, division, square root, multiplication, fused multiply and accumulate, and compare. It is fully compatible with the IEEE 754 standard. One of the key benefits of this implementation is that it is enabled with the open source RISC-V ISA [1].

III. INSTRUCTION SET ANALYSIS

Before the RISC-V instruction set was selected for implementation, the floating point instructions in the ARM, MIPS and RISC-V instruction sets were analyzed.

TABLE II : ISA Analysis: RISC-V, ARM and MIPS

| Design Philosophy | RISC-V [1] | ARM [18] | MIPS [19] |
|---|---|---|---|
| ISA encoding scheme | Fixed and variable | Fixed | Fixed |
| Instruction size | 16/32/64/128-bit | 16/32/64-bit | 32/64-bit |
| General purpose registers | 32 | 31 | 31 |
| Floating point registers | 32 | 31 | 31 |
| All rounding modes supported | Yes | Yes | Yes |
| Single precision floating point operations | 26 | 33 | 15 |
| Double precision floating point operations | 26 | 29 | 15 |

IV. PIPELINE ORGANIZATION OF RISC-V PROCESSOR

A. RISC Processor Architecture

Fig. 1 shows the top level architecture of the RISC processor. Although this section discusses the whole processor environment, this paper focuses on the floating point co-processor implementation. Fig. 1 also shows how the FPU coprocessor integrates with the main processor. The fetch unit fetches instructions from program memory based on the program counter value. The decoder decodes the instructions and passes them to the register select unit. If the current instruction is an integer instruction, it is passed to the integer pipeline; if it is a floating point instruction, it is passed to the floating point co-processor pipeline. For floating point instructions, the integer decoder performs only partial decoding; full decoding of those instructions takes place in the FPU coprocessor. The data from the register select module is given to the floating point and integer modules. The floating point co-processor accepts as input three operands (either single or double precision), the opcode and the rounding mode. It decodes the instructions and executes them. The final result is written back to the registers through the write back unit.

B. Out Of Order FPU Coprocessor

Fig. 2 shows the top level architecture of an in-order issue, out-of-order execution pipelined FPU co-processor.

[Fig. 2 block labels: Fpu_req, Input FIFO, Input decoder, FPU ALU (Add / Sub, Multiplication, Division, Square root, Fused multiply and accumulate, Compare), Instruction scheduler queue, Output decoder, Output FIFO, Fpu_res]

Fig. 2. Architecture of Out-of-order FPU Co-Processor

An in-order FPU cannot utilize the full capability of the design. In that type of design, many cycles are wasted while the pipeline is stalled. To make use of these wasted cycles, an out-of-order FPU has been implemented. It receives instructions in order, executes them out of order and commits them in order.
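The in-order issue, out-of-order completion and in-order commit behaviour can be illustrated with a small cycle-level model. This is only a sketch, not the authors' RTL: the function name `simulate`, the one-commit-per-cycle rule and the unbounded queue are assumptions made for illustration, and the latencies are the per-unit figures quoted in Section V of this paper (the multiplier entry uses the single precision figure).

```python
from collections import deque

# Latencies in clock cycles as quoted in Section V of the paper
# (the multiplier entry is the single precision figure).
LATENCY = {"add": 5, "mul": 17, "div": 31, "sqrt": 31, "mac": 10, "cmp": 3}


def simulate(ops):
    """Issue one op per cycle in order; return (finish_order, commit_log).

    finish_order: tokens sorted by the cycle in which execution completes.
    commit_log:   list of (token, commit_cycle), always in token order.
    """
    # Token i is issued in cycle i and completes in cycle i + latency.
    done_at = {tok: tok + LATENCY[op] for tok, op in enumerate(ops)}
    finish_order = sorted(done_at, key=lambda t: (done_at[t], t))

    queue = deque(range(len(ops)))  # hypothetical scheduler queue of tokens
    commit_log = []
    cycle = 0
    while queue:
        oldest = queue[0]
        # Commit only the oldest token, and only once it has completed.
        # At most one commit per cycle is assumed here.
        if done_at[oldest] <= cycle:
            commit_log.append((queue.popleft(), cycle))
        cycle += 1
    return finish_order, commit_log


finish, commits = simulate(["div", "add", "cmp", "mac"])
print(finish)   # tokens in completion order
print(commits)  # tokens in commit order with their commit cycle
```

In this run the divide is issued first but finishes last, so the three younger operations complete early and then wait until the divide has committed. The model ignores queue-full stalls and structural hazards.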

a) Input FIFO

The floating point unit accepts an FPU request packet and stores it in the input FIFO, from which it is read by the input decoder. Fig. 3 shows the packet format. The packet contains three input operands, the opcode, F7, F5, F3 and the rounding mode.

| Bits | 119..88 | 87..56 | 55..24 | 23..18 | 17..11 | 10..6 | 5..3 | 2..0 |
|---|---|---|---|---|---|---|---|---|
| Field | Op1 (SP/DP) | Op2 (SP/DP) | Op3 (SP/DP) | Opcode | F7 | F5 | F3 | RM |

Fig. 3. FPU request packet

Opcode, F7, F5 and F3 are specific to each floating point instruction. The rounding mode is variable; it can take any value mentioned in the IEEE 754 standard. The packet has three operands, op1, op2 and op3, each of which can be either single precision or double precision.

b) Input decoder

The input decoder in the floating point pipeline reads a packet from the input FIFO. It decodes the operation from the opcode, F7, F5 and F3 fields. If any of these fields does not match a legal opcode or a legal F7, F5 or F3 value for a floating point instruction, the decoder flags the packet as an illegal instruction. If the fields match, the decoder enables the corresponding FPU operation and passes the op1, op2 and op3 data to the FPU ALU. The FPU coprocessor keeps track of the instruction by putting a token into an instruction scheduler queue.

An instruction is issued on each clock cycle as long as the instruction scheduler queue is not full. Since the latency of each operation is different, the depth of this queue should equal the maximum clock latency over all operations so that the pipeline can operate without bubbles. As instructions are issued on every clock cycle, they can execute in parallel and out of order. They may even finish out of order, but they cannot commit out of order.

c) Floating Point ALU architecture

Fig. 4 shows the micro-architecture of the floating point co-processor ALU. There are six independent pipelines, as shown in the figure: floating point add/sub, divider, multiplier, square root, multiply and accumulate, and compare. Each pipeline has its own clock latency. The ALU receives three operands (either single precision or double precision) and the rounding mode as input. It outputs the result of the arithmetic operation and five exceptions: overflow, underflow, invalid, inexact and divbyzero. The opcode decoder decodes the instruction and enables the required module. The internal module performs the operation on the input data. Each module takes its specific clock latency and gives its output along with exceptions. A special input detector checks for special inputs such as sNaN, qNaN, infinity and zero. If any special input is present, the instruction is bypassed directly, and the output for these special inputs is generated as per IEEE 754.

[Fig. 4 block labels: Opcode, Opcode decoder, Op1, Op2, Op3, Rmode, Add / Sub, Multiply, Division, Multiply accumulate, Square Root, Compare, Special input detector, Exception handler (Overflow, Underflow, Invalid, Inexact, Divbyzero), Out data mux, Out]

Fig. 4. Floating Point ALU Architecture

d) Output decoder

The output decoder logic commits only the oldest instruction in the pipeline. This ensures in-order commit. It fetches the oldest token from the instruction scheduler queue and commits the instruction only if that token matches a completed instruction. The output decoder takes the corresponding output data with its exceptions, forms a packet and sends it to the output FIFO. Appropriate exceptions for each operation are raised and processed according to the RISC-V instruction set. The floating point co-processor has 5 exception signals to raise exceptions in the operation. The exceptions are raised according to the IEEE 754 standard.

e) Output FIFO

The decoder stores the output data along with exceptions in the output FIFO. The FIFO sends this packet to the write back stage of the integer pipeline.

V. MICRO-ARCHITECTURE OF INTERNAL FPU ALU

A. Adder/subtractor

The architecture of the floating point adder cum subtractor unit is shown in Fig. 5. The FPU adder has five stages, so its clock latency is 5 clocks. It is a continuously pipelined structure. It receives four inputs: two IEEE format operands, a 3-bit round_mode for run time configuration and a 1-bit operation signal that selects addition or subtraction. It outputs the result in IEEE 754 format. Along with this, it gives four exception signals, namely overflow, underflow, invalid and inexact. The main functionality is divided
into three parts: a pre-processing unit, a binary addition/subtraction unit and a post-processing unit.

The pre-processing stage unpacks the input operands into sign, mantissa and exponent. For addition or subtraction, the exponents of both operands must be the same. The exponent unit calculates the effective exponent and the exponent difference. The exponent of the larger operand is taken as the output exponent.

The swap unit decides which operand is smaller and which is larger. The effective operation (EOP) is calculated by XOR-ing the signs of both operands (S1 and S2) and the operation bit. The sign of the final result is calculated from EOP and the larger operand. Three bits, guard, round and sticky, are appended to the smaller mantissa. These are called rounding bits and are used during rounding. The smaller mantissa is then shifted right by the exponent difference so that both operands have the same exponent, and this shift passes through the rounding bits. The trailing zero detector (TZD) calculates the number of trailing zeros in the smaller mantissa. The sticky bit is updated from the shift amount and the number of trailing zeros: if any non-zero bit is lost in the shift, the sticky bit is set, otherwise it is reset. The binary addition/subtraction unit takes the larger mantissa and the shifted smaller mantissa as inputs. It performs binary addition or subtraction on both operands and gives the result and carry to the post-processing unit.

[Fig. 5 block labels: Op1, Op2, Operation, Round_mode, Decompose, Effective Exp and exp diff calculator, Swap, EOP Calc, M_small, M_large, Exp_diff, TZD, Significant shifter, Sticky bit calc, Sign calc, Binary adder cum subtractor, LZD, Shift calculation, Exponent adjust, Normalization, Rounding, Exp_final, M_final, Sign_result, Compose, Out_data, All exception signals (4)]

Fig. 5. Architecture for Floating Point Adder / Subtractor

The result of the addition or subtraction may be de-normalized, or a carry bit may have been generated. It has to be normalized if possible. The leading zeros detector (LZD) provides the number of leading zeros present in the result. The shift calculation unit calculates the amount and type of shift required. The number of leading zeros is the amount of left shift needed. If there is a carry from the operation, a one bit right shift is applied. The normalization unit normalizes the result according to the type and amount of shift given by the shift calculation unit, and the exponent is adjusted according to the shift. The normalized result is then rounded as per the rounding mode provided. If rounding produces a carry, the result is shifted again and the exponent is updated. The rounded result, updated exponent and sign of the result are provided to the compose unit, which converts the output to IEEE 754 format and also generates all exception signals.

B. Divider

The architecture of the floating point divider unit is shown in Fig. 6. The FPU divider has 31 stages, so its clock latency is 31 clocks. It is a continuously pipelined structure. It receives three inputs: two IEEE format operands and a 3-bit round_mode for run time configuration. It gives out_data as the division result in IEEE format. Along with this, it gives five exception signals: overflow, underflow, invalid, inexact and divbyzero. The design has three main stages: a pre-processing unit, a non-restoring binary divider and a post-processing unit.

[Fig. 6 block labels: Op1, Op2, Round_mode, Decompose, E1-E2+Bias_val, LZD1, LZD2, Normalize M1, Normalize M2, E+LZ2-LZ1, Resultant E, Non restoring binary division, Sign calc, Exponent adjust, Normalization, TZD, Sticky bit update, Rounding, Exp_final, M_final, Sign_result, Compose, Out_data, All exception signals (5)]

Fig. 6. Architecture for Floating Point Divider

In pre-processing, the decompose unit unpacks the two IEEE 754 encoded numbers into their sign bits, biased binary exponents and mantissas. The sign of the final result is calculated from the signs of both operands. The resultant exponent is calculated as E1 - E2, and the bias has to be added to this value (E1 - E2 + Bias_value). If there are leading zeros in a mantissa, the mantissa must be normalized, which increases the precision of the result. The leading zero detectors therefore calculate the number of leading zeros in the mantissas of both operands, and each mantissa is shifted left for normalization. The resultant exponent is updated according to these shifts (E + LZ2 - LZ1, as labeled in Fig. 6). The normalized mantissas go through the non-restoring binary division (NRBD) algorithm [20]. It gives one bit of quotient on every clock cycle. This result may not be normalized.
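The one-quotient-bit-per-step behaviour of non-restoring division can be sketched in software. The following is the textbook unsigned non-restoring algorithm, not the modified variant of [20] and not the authors' hardware; the function name `nonrestoring_divide` and its interface are assumptions for illustration. Each loop iteration stands for one clock cycle of the iterative divider.

```python
def nonrestoring_divide(dividend, divisor, nbits):
    """Unsigned non-restoring division, one quotient bit per iteration.

    Returns (quotient, remainder) for an nbits-wide dividend.
    """
    if divisor <= 0:
        raise ValueError("divisor must be positive")
    if not 0 <= dividend < (1 << nbits):
        raise ValueError("dividend does not fit in nbits")

    acc = 0        # partial remainder; allowed to go negative
    quotient = 0
    for i in range(nbits - 1, -1, -1):
        bit = (dividend >> i) & 1
        # Shift in the next dividend bit, then subtract the divisor if the
        # previous partial remainder was non-negative, otherwise add it.
        if acc >= 0:
            acc = 2 * acc + bit - divisor
        else:
            acc = 2 * acc + bit + divisor
        # The new quotient bit is 1 when the partial remainder is >= 0.
        quotient = (quotient << 1) | (1 if acc >= 0 else 0)

    if acc < 0:
        acc += divisor  # single correction step at the end
    return quotient, acc


print(nonrestoring_divide(7, 2, 3))
```

Unlike restoring division, a negative partial remainder is not undone immediately; the next step adds the divisor instead, and only one correction is needed after the last bit.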

A left shift of at most 1 bit is required for normalization. If the result is too tiny, a right shift is needed to bring the exponent within the specified limit. The trailing zeros detector checks the number of trailing zeros, and if a right shift is required the sticky bit is updated. After this normalization, the result is rounded as per the specified rounding mode. If rounding produces a carry, the result is shifted again and the exponent is updated. The rounded result, updated exponent and sign of the result are provided to the compose unit, which converts the output to IEEE 754 format and also generates all exception signals.

C. Architecture for floating point square root

The architecture of the floating point square root unit is shown in Fig. 7. The FPU square root has 31 stages, so its clock latency is 31 clocks. It receives 2 inputs: an operand in IEEE 754 format and a 3-bit round_mode for run time configuration. It gives out_data as the square root, also in IEEE format. Along with this, it gives two exception signals: invalid and inexact.

The IEEE 754 encoded operand is unpacked into its sign bit (S), biased binary exponent (E) and mantissa (M). The resultant exponent is calculated from the input operand exponent with the following formula:

$$E_c = \begin{cases} (E_A + 1022)/2, & E_A \text{ even} \\ (E_A + 1023)/2, & E_A \text{ odd} \end{cases}$$

where $E_A$ is the biased exponent of the input operand and $E_c$ is the resultant exponent (the constants correspond to the double precision bias of 1023). If there are leading zeros in the mantissa, a left shift is needed to normalize it. This increases the precision of the result.

[Fig. 7 block labels: Op1, Round_mode, Decompose, Exponent calculation, LZD1, Normalize M1, Non restoring binary division, Exponent adjust, Normalization, LZD, Rounding, Exp_final, M_final, Compose, Out_data, All exception signals (2)]

Fig. 7. Architecture For Square Root

The leading zero detector calculates the number of leading zeros in the mantissa of the operand, and the mantissa is shifted accordingly. The resultant exponent is modified according to this normalization shift. The normalized mantissa goes through the non-restoring square root calculation (NRSC) algorithm. On every clock cycle, NRSC gives 1 bit of the result. The result has to be normalized, which requires a left shift of at most 1 bit. If the result is too tiny, a right shift is needed to bring the exponent within the specified limit. After this normalization, the result is rounded as per the specified rounding mode. If rounding produces a carry, the result is shifted again and the exponent is updated. The rounded result, updated exponent and sign of the result are provided to the compose unit, which converts the output to IEEE 754 format and also generates the invalid and inexact exception signals.

D. Floating point multiplier

The floating point multiplier unit accepts either single or double precision operands and performs single or double precision floating point multiplication based on the opcode for the unit. A 3-bit input selects the rounding mode. Given two floating point numbers X1 = {S1, E1, M1} and X2 = {S2, E2, M2}, the floating point multiplication result can be computed using

$$S_{OUTPUT} = S_1 \oplus S_2$$

$$E_{OUTPUT} = E_1 + E_2 - \mathrm{bias}$$

$$1.M_{OUTPUT} = 1.M_1 \times 1.M_2$$

The architecture of the floating point multiplier is shown in Fig. 8. A divide and conquer approach is used in the proposed floating point multiplier design. The sign of the product is calculated by performing an XOR operation on the signs of the input operands. The 8-bit and 11-bit adders for exponent addition and the 24x24-bit and 53x53-bit multipliers for mantissa multiplication are designed as individual units. Depending on the operation, the corresponding bias (127 for single precision and 1023 for double precision) is subtracted from the sum of the exponents. The multiplier result is rounded using the input rounding mode. After rounding, to make the hidden bit one, the proposed design calculates the position of the leading one in the rounded result and forms the floating point output. The single precision floating point multiplier has a latency of 17 clock cycles and the double precision floating point multiplier has a latency of 9 clock cycles.

Fig. 8. Micro-Architecture of Floating Point Multiplier

E. Architecture for fused multiply and accumulator

The architecture of the fused multiply and accumulate (MAC) module is shown in Fig. 9. The MAC has a latency of 10 clocks. It is a continuously pipelined structure. It receives 3 input operands in IEEE 754 format. It outputs the MAC result as out_data along with 4-bit exception signals.
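To see why a fused operation differs from a separate multiply followed by an add, the short sketch below compares the two in double precision. It assumes the conventional IEEE 754-2008 meaning of a fused multiply-add, in which the exact value of a*b + c is rounded only once; the paper does not spell out its rounding points in these terms. The helper names `mac_two_roundings` and `mac_single_rounding` are hypothetical, and exact rational arithmetic from Python's `fractions` module stands in for the wide internal datapath.

```python
from fractions import Fraction


def mac_two_roundings(a, b, c):
    """Multiply and round to double, then add and round again."""
    return a * b + c


def mac_single_rounding(a, b, c):
    """Compute a*b + c exactly, then round once to double."""
    exact = Fraction(a) * Fraction(b) + Fraction(c)
    return float(exact)


a = 1.0 + 2.0 ** -30
b = 1.0 - 2.0 ** -30
c = -1.0
print(mac_two_roundings(a, b, c))    # the product is rounded to 1.0 first
print(mac_single_rounding(a, b, c))  # the low-order term of the product survives
```

Here the exact product is 1 - 2^-60, which rounds to 1.0 as a double, so the two-step version returns zero while the single-rounding version returns -2^-60.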

The module takes three IEEE 754 operands and decomposes each into sign, mantissa and exponent. The MAC is divided into two parts: multiplication of the first two operands, followed by addition or subtraction of the product with the third operand. In the first part, the mantissas of the first and second operands are multiplied. The product is normalized according to the leading zero detector. The resultant exponent is calculated as E1 + E2 - Bias. The normalized result and exponent are passed to the second part.

The second part takes the multiplication result and the third operand as input. It finds the smaller operand and shifts it so that both operands have the same exponent. It then adds or subtracts the two mantissas. The result is normalized and then rounded as per the given rounding mode. The exponent is updated after normalization and after rounding, if needed. The resultant sign, mantissa and exponent are packed into IEEE 754 form.

[Fig. 9 block labels: Op1, Op2, Op3, Round_mode, Decompose, E1+E2-BIAS, M1*M2, LZD & Normalize, Adjust exponent, Sign calc, Effective Exp and exp diff calculator, Swap, M_small, M_large, Exp_diff, TZD, Significant shifter, Sticky bit calc, Binary adder cum subtractor, LZD, Shift calculation, Exponent adjust, Normalization, Rounding, Sign Result, Exp_final, M_final, Compose, Out_data, All exception signals]

Fig. 9. Fused Multiply and Accumulate

F. Architecture for comparator

The architecture of the comparator module is shown in Fig. 10. The FPU comparator has a latency of three clocks. It is a continuously pipelined structure. It receives 2 IEEE format operands as inputs and outputs a 4-bit comparison result. The decompose unit separates both operands into their signs (S1, S2), exponents (E1, E2) and mantissas (M1, M2). The exponent comparator then compares both exponents and gives a 3-bit result: E_gr (if E1 > E2), E_ls (if E1 < E2) and E_eq (if E1 = E2). Similarly, the mantissa comparator gives a 3-bit result: M_gr, M_ls and M_eq. The comparator logic unit then produces the result from the signs of both operands and the outputs of both binary comparators. If the input operands are invalid, the unordered bit is set.

[Fig. 10 block labels: Op1, Op2, Decompose, S1, S2, E1, E2, M1, M2, Binary exponent comparator (E_gr, E_ls, E_eq), Binary mantissa comparator (M_gr, M_ls, M_eq), Comparator logic, outputs Op1 < op2, Op1 = op2, Op1 > op2, Unordered]

Fig. 10. Architecture For Comparator

VI. EXPERIMENT RESULTS

A. Verification and Testing

To verify the proposed design, extensive simulation and tests on FPGA have been carried out. ModelSim DE 10.0a by Mentor Graphics is used for simulation. The hardware is verified on a PICO M503 FPGA board equipped with a Xilinx Virtex 6 xc6vlx550t. Results are verified and tested against the IBM test suite [21] for single precision (as this is the only test suite available from IBM). Apart from these, a million random test cases have been generated. A software floating point unit has been developed using Matlab version 7.6.0.324 (R2008a), and the one million generated test cases have been verified against this software implementation.

TABLE III: Performance analysis

| Slice registers | LUTs | Frequency |
|---|---|---|
| 40,193 | 55,661 | 180 MHz |

B. Accuracy Assessment

The experiment results show that accurate results are obtained for addition, multiplication, division and square root operations. A maximum error of 1 unit in the last place (ULP) against the IBM and software implementation results is observed for certain cases. This is because of differences in rounding strategies.

C. Analysis and benchmarking

A floating point coprocessor with the open source RISC-V ISA has been implemented from scratch and verified with a large number of test cases. A one-to-one comparison with earlier designs may not be fair, as design specifications such as precision, supported operations and platform differ. FPVC [22] gives a throughput of 25 MFLOPS. CalmRISC32 [17] gives a design frequency of 70 MHz. GRFPU [23] gives a peak performance of 100 MFLOPS. By using a continuously pipelined architecture, the presented FPU achieves a frequency of 240 MHz for single precision and 180 MHz for double precision operations on FPGA. So if inputs are supplied continuously on every clock cycle, then the
implemented design gives a throughput of up to 180 MFLOPS on the FPGA itself.

VII. CONCLUSION

This paper presents the design and implementation of an out-of-order floating point coprocessor enabled with the open source RISC-V ISA. It is compatible with the IEEE 754-2008 standard. It supports common floating point arithmetic operations along with a runtime reconfigurable rounding mode. It is capable of handling all exceptions mentioned in the standard. The individual floating point units use state of the art algorithms to achieve effective resource utilization on FPGA. By using a continuously pipelined architecture, the FPU units achieve a frequency of 240 MHz for single precision and 180 MHz for double precision operations on a Xilinx Virtex-6 FPGA.

Future work includes exploring other ways to improve flexibility, such as an adaptive multi-mode floating point unit or reconfiguring the computation components within floating point units to perform other functions. Furthermore, enhanced versions of the current design algorithms will be considered to achieve higher throughput. Currently only a pluggable FPU coprocessor with the RISC-V ISA has been implemented; in future, a full processor compatible with the RISC-V ISA will be explored.

VIII. ACKNOWLEDGMENT

The authors sincerely thank the Department of Electronics and Information Technology (DEITY), MCIT, GoI, and Dr. Sarat Chandra Babu, Executive Director, C-DAC, Bangalore for the continuous support, encouragement and the opportunity to work on the topic of this paper.

IX. REFERENCES

[1] A. Waterman, Y. Lee, D. A. Patterson, and K. Asanovic. The RISC-V Instruction Set Manual, Volume I: Base User-Level ISA. Technical Report UCB/EECS-2011-62, EECS Department, University of California, Berkeley, May 2011.

[2] Bailey D H., “High-precision floating-point arithmetic in scientific computation.” Computing in Science and Engineering, 2005, 7(3):54-61.

[3] K. Scott Hemmert, Keith D. Underwood. “An Analysis of the Double-Precision Floating-Point FFT on FPGAs.” Sandia National Laboratories, In proceeding of 13th Annual IEEE Symposium on Field-Programmable Custom Computing Machines, 2005.

[4] Miaoqing Huang, Miaoqing Huang. “Reaping the processing potential of FPGA on double-precision floating-point operations: an eigenvalue solver case study”, Computer Science & Computer Engineering Department, 18th IEEE Annual International Symposium on Field-Programmable Custom Computing Machines, 2010.

[5] Gerald R. Morris and Viktor K. Prasanna, “An FPGA-based floating-Point Jacobi Iterative Solver”, department of electrical engineering, In Proceedings of the 8th International Symposium on Parallel Architectures, Algorithms and Networks, 2005.

[6] IEEE Standard for Binary Floating-Point Arithmetic, IEEE Std. 754-1985.

[7] Joy, C., P. P. Boyle, et al. (1996). "Quasi-Monte Carlo Methods in Numerical Finance." Management Science 42: 926-938.

[8] Hull, J.C. Option, futures, and other derivatives. Prentice Hall, Upper Saddle River, 2000.

[9] Jäckel, P. Monte Carlo Methods in Finance. John Wiley & Sons, LTD, 2002.

[10] J. Wang, Y.-K. Yong and T. Imai, Finite element analysis of piezoelectric vibrations of quartz plate resonators with higherorder plate theory, Int. J. of Solids and Struct. 36, pp. 2303-2319, 1999.

[11] P. C. Y. Lee and Y.-K. Yong, Frequency-temperature behavior of thickness vibrations of double rotated quartz plates affected by plate dimensions and orientations, J. Appl. Phys. 60(7), 2327-2342, 1986.

[12] Marius Cornea, Cristina Anderson, Charles Tsen, “Software Implementation of the IEEE 754R Decimal Floating-Point Arithmetic”, Software and Data Technologies, Communications in Computer and Information Science, Volume 10, 2008, pp. 97-109.

[13] Jainik Kathiara, Miriam Leeser, “An Autonomous Vector/Scalar Floating Point Coprocessor for FPGAs”, IEEE International Symposium on Field-Programmable Custom Computing Machines, 2011.

[14] Haibing HU, Tianjun Jin, Xianmiao Zhang, Zhengyu LU, Zhaoming Qian, “A Floating-point Coprocessor Configured by a FPGA in a Digital Platform Based on Fixed-point DSP for Power Electronics”, Power Electronics and Motion Control Conference, 2006.

[15] Chris N. Hinds, “An Enhanced Floating Point Coprocessor for Embedded Signal Processing and Graphics Applications”, Conference on Signals, Systems, and Computers, 1999.

[16] Xunying Zhang, Xubang Shen, “A Power-Efficient Floating-point Co-processor Design”, 2008 International Conference on Computer Science and Software Engineering.

[17] C. Jeong, S Kim, M Lee, “In-Order Issue Out-of-Order Execution Floating-point Coprocessor for CalmRISC32”, 15th IEEE Symposium on Computer Arithmetic, 2001.

[18] ARM Ltd. ARMv6-M Architecture Reference Manual, 2008.

[19] Opencores.org. Open-RISC Architecture Reference Manual, 2003.

[20] Kihwan Jun, Swartzlander, E.E., “Modified non-restoring division algorithm with improved delay profile and error correction”, Conference on Signals, Systems and Computers (ASILOMAR), 2012 Conference Record of the Forty Sixth Asilomar.

[21] FPgen Team, “Floating-Point Test-Suite for IEEE”, IBM Labs in Haifa.

[22] Jainik Kathiara, Miriam Leeser, “An Autonomous Vector/Scalar Floating Point Coprocessor for FPGAs”, IEEE International Symposium on Field-Programmable Custom Computing Machines, 2011.

[23] J. Gaisler, The LEON-2 Processor User’s Manual Version 1.0.13. Goteborg, Sweden: Gaisler Research, 2003.
