Unified Physical Infrastructure℠ (UPI)

Strategies for Smart Data Centers

Deploying a Vertical Exhaust System

to Achieve Energy Efficiency and Support Sustainability Goals

www.panduit.com WP-09 • September 2009

Introduction

Business management applications and rich collaboration software for both employees and customers require

increasingly complex and large-scale database processing capabilities. Data center managers are under

constant pressure to manage growth while reducing capital and operational expenses. With per-cabinet

equipment densities increasing to meet these processing demands, network stakeholders need new and

effective cooling conservation strategies for managing growth and the resulting high heat densities over the life

of the data center.

Organizations are looking to Unified Physical Infrastructure℠ (UPI) solutions that take a holistic approach to the

data center, turning to passive exhaust containment solutions to optimize energy efficiency and mitigate risk

throughout the physical infrastructure. One such solution, the Vertical Exhaust System (VES), is a passive

exhaust containment system that eliminates hot exhaust air recirculation to active equipment inlets throughout

the data center. These systems channel heated server exhaust air directly into the hot air return plenum,

separating hot exhaust air from cool supply air. This allows data center managers to operate the data center at

higher supply air set point temperatures to achieve operational savings.

This white paper discusses thermal management considerations associated with deploying a Vertical Exhaust

System in the data center, and its impact on the data center physical infrastructure. An example scenario is

presented to demonstrate the cost savings that may be achieved by deploying a VES in a typical data center

environment. Best practices include minimizing airflow impedance in the back of the cabinet and up through the

vertical duct, and keeping the server exhaust area clear of airflow obstructions. When deployed in conjunction

with infrastructure best practices, VES containment strategies achieve cooling efficiencies, support

sustainability goals, and lower cooling system operating costs by 25% or more.

Reaching the Limits of Traditional Cooling Systems

Typically, airflow management in a data center is achieved by strategic placement of Computer Room Air

Handling (CRAH) units and physical layer infrastructure elements, with equipment rows positioned in a Hot

Aisle / Cold Aisle arrangement (see Figure 1) as defined in TIA-942 and in ASHRAE’s “Thermal Guidelines for

Data Processing Environments.”

Figure 1. Traditional Raised Floor Hot Aisle / Cold Aisle Data Center with Ceiling Returns

WW-CPWP-09, Rev.0, 09/2009

©2009 PANDUIT Corp. All rights reserved.

In a raised-floor environment with ceiling returns, CRAH units distribute cool air underneath a raised floor,

allowing air to enter the room through perforated floor tiles located in cold aisles. Active equipment within

cabinets is positioned such that the equipment fans draw cool air through the equipment from the cold-aisle side

and release exhaust into the hot aisle at the rear. Finally, the equipment exhaust air exits the room and returns

back to the CRAHs via a ceiling plenum and return ducts.

The current trend in data centers is toward greater equipment densities, and these higher densities are pushing

the capability of raised floor cooling systems to their limits. Raised floor systems with ceiling returns can

typically deliver enough air to remove 7-8 kW of heat per cabinet, provided that the CRAH units have adequate

thermal capacity and best thermal management practices are employed. These best practices include a 24-36

inch (60-90 cm) raised floor height, a hot aisle / cold aisle arrangement for cabinet rows, properly sized

perforated floor tiles or grates, a proper return airflow path, proper positioning of power and data cables, and the

use of raised floor air sealing grommets and blanking panels.
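The airflow implied by these per-cabinet heat loads can be estimated with the standard sensible-heat relation for air (BTU/hr = 1.08 x CFM x temperature rise in ºF). The sketch below is illustrative only: the function name and the 20ºF temperature rise across the equipment are assumptions, not figures from this paper, and the 1.08 factor applies to air near sea-level conditions.

```python
# Sensible-heat airflow estimate for a cabinet (standard-air approximation).
BTU_PER_HR_PER_WATT = 3.412   # 1 W = 3.412 BTU/hr
SENSIBLE_HEAT_FACTOR = 1.08   # BTU/hr per (CFM x degF), air near sea level


def required_cfm(heat_load_kw: float, delta_t_f: float) -> float:
    """Airflow (CFM) needed to remove heat_load_kw at an air temperature rise of delta_t_f."""
    if delta_t_f <= 0:
        raise ValueError("delta_t_f must be positive")
    btu_per_hr = heat_load_kw * 1000 * BTU_PER_HR_PER_WATT
    return btu_per_hr / (SENSIBLE_HEAT_FACTOR * delta_t_f)


if __name__ == "__main__":
    assumed_delta_t_f = 20.0  # assumption: not a figure from this paper
    for kw in (8, 12, 25):
        cfm = required_cfm(kw, assumed_delta_t_f)
        print(f"{kw:>2} kW cabinet: about {cfm:,.0f} CFM")
```

Because the required airflow grows linearly with heat load, a cabinet at 25 kW needs roughly three times the air of one at 8 kW for the same temperature rise, which is why delivery through perforated tiles alone becomes limiting.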

Data center consolidation and virtualization efforts are resulting in higher density cabinets with each cabinet

holding several servers. These high server densities can generate heat loads of 8-12 kW per cabinet; and as

equipment is added and upgraded, especially high heat-generating blade server configurations, cabinet heat

loads can exceed 25 kW. Without effective thermal management strategies in place, risks multiply quickly:

• Increased risk of downtime and lost revenue due to equipment failures

• Reduced Mean Time Between Failure (MTBF)

• Increased costs associated with maintenance

• Increased operational expenses due to overcooling

Innovative, Cost-Effective Improvements in Data Center Cooling

Budget constraints and escalating energy costs are forcing data center stakeholders to review all available heat

removal options in order to improve operational efficiency and maintain business continuity. UPI-based cooling

conservation solutions are finding increasing adoption in data centers as a reliable, cost-effective and eco-

friendly option for addressing high heat loads. These innovative solutions use precision cooling techniques to

optimize airflow and protect active equipment without adding power-hungry cooling units that drive capital costs

up and introduce additional operational costs (see Table 1 for a comparison).

Table 1. Comparison of Supplemental Cooling Methods in the Data Center

Method                      Capital Costs   Operational Costs   Points of Failure
Passive Systems             $               None                None
In-Cabinet Fans             $$              ✓                   ✓
Overhead Cooling Units      $$$             ✓                   ✓
Rear Door Heat Exchanger    $$$             ✓                   ✓
Boost CRAH Capacity         $$$             ✓                   ✓

As part of a holistic UPI-based approach, precision cooling elements can be planned and deployed from initial

startup or modularly added when needed, enhancing agility by integrating with existing cabinet layouts to allow

cooling capacity to scale as business requirements change and grow. This flexibility allows data center

stakeholders to provision equipment as needed without adding CRAH units or overtaxing the raised floor

cooling system.

One of the most efficient passive airflow management options for data center environments is exhaust

containment such as the Vertical Exhaust System (VES), which channels heated server exhaust air directly to

the ceiling return plenum via a vertical duct or “chimney” unit (see Figure 2). This ducting of hot exhaust air to

the CRAH returns prevents hot air recirculation, ensuring availability of cool air at the equipment inlets. The

VES exhaust containment system is capable of supporting high heat loads to enable high density equipment

configurations such as blade server deployments.¹

[Figure 2 panels, each showing alternating cold and hot aisles between the under floor plenum and the ceiling return plenum, on a temperature scale from 60ºF to 110ºF. Top panel: "Hot Spots Develop Without VES." Bottom panel: "VES Deployed."]

Figure 2. TOP: In a traditional Raised Floor with Ceiling Returns configuration (hot aisle/cold aisle) hot

air can recirculate to the front of the enclosure, heating the top portion of the rack.

BOTTOM: The Panduit VES optimizes thermal efficiency by moving hot air through vertical ducts

directly to the return plenum.

¹ Intel white paper: “Air-Cooled High Performance Data Centers: Case Studies and Best Methods.” November 2006.

The segregation of hot and cool air allows the data center to be operated at a higher temperature set point,

which in turn reduces energy consumption by the cooling system. Directing hot air into the return plenum also

reduces energy use by maximizing the difference in temperature between the return air and chilled water across

cooling coils, which enables CRAH units to run at higher capacity. The deployment of a VES-based air

containment system provides opportunities to lower CRAH fan speeds and observe fan power savings while

maintaining the cabinet inlet temperatures within the desired range (e.g., ASHRAE-recommended temperature

limit of 80.6ºF, i.e., 27ºC).
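The fan power savings mentioned above can be approximated with the ideal fan affinity law, under which fan power scales with the cube of fan speed. This is a minimal sketch that assumes variable-speed CRAH fans behaving ideally; the function name is illustrative, and real units will deviate from the cube law because of motor and drive losses.

```python
def fan_power_fraction(speed_fraction: float) -> float:
    """Ideal affinity-law estimate: fan power scales with the cube of fan speed."""
    if not 0 < speed_fraction <= 1:
        raise ValueError("speed_fraction must be in (0, 1]")
    return speed_fraction ** 3


if __name__ == "__main__":
    # Hypothetical speed settings, chosen only to illustrate the trend.
    for speed in (1.0, 0.9, 0.8, 0.7):
        saving = 1 - fan_power_fraction(speed)
        print(f"{speed:.0%} fan speed -> about {saving:.0%} less fan power")
```

The cubic relationship is why even a modest reduction in fan speed, made possible by containing the exhaust air, can translate into a disproportionately large reduction in fan energy.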

A VES enables significant energy savings in the data center by potentially reducing the number of CRAH units

required to obtain desired thermal performance and by optimizing the work performed by those units, resulting

in immediate and considerable energy and cost savings. For example, a 3,000 ft² raised floor data center with

68 server cabinets would require 6 CRAH units to manage 8 kW of heat per cabinet. As equipment is added,

data center stakeholders face the challenge of managing the additional thermal load.

This hypothetical data center would require two additional CRAH units (eight in total) to reliably manage up to 10 kW per cabinet,

at a capital cost of $35,000 per unit. At an average electrical utility rate of $0.11 per kWh, the cost to operate a

CRAH unit at maximum capacity runs about $9,000/yr,² plus any other installation and maintenance costs to

service the unit. By contrast, for less than the cost of one CRAH unit, a passive VES can be deployed on all

heat-generating cabinets to manage thermal loads without additional ongoing operating costs (see Table 2).
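As a rough cross-check of the operating cost quoted above, the annual cost per CRAH listed in Table 2 ($9,023) and the stated electricity rate imply an average electrical draw for one unit. The sketch below assumes the unit runs continuously for all 8,760 hours of the year, which the paper does not state explicitly; the function name is illustrative.

```python
HOURS_PER_YEAR = 8760  # assumption: continuous, year-round operation


def implied_average_kw(annual_cost_usd: float, rate_usd_per_kwh: float) -> float:
    """Average electrical draw (kW) implied by an annual energy cost and a flat rate."""
    annual_kwh = annual_cost_usd / rate_usd_per_kwh
    return annual_kwh / HOURS_PER_YEAR


if __name__ == "__main__":
    # $9,023 per CRAH per year (Table 2) at $0.11 per kWh (stated rate).
    print(f"Implied average draw: {implied_average_kw(9023, 0.11):.2f} kW per CRAH")
```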

All of these factors combine to reduce cooling system operating costs by at least 25%, with actual cost savings

potentially increasing with layout configuration, equipment density, and maintenance savings. For all these

reasons it is clear that VESs are the cost-effective (and green) choice to efficiently cool high heat-generating

cabinets.

Table 2. Cost Savings Achieved Using Passive VES for Thermal Management (3,000 ft² Data Center with 68 Server Cabinets)

Capital Costs*

Thermal Management Option      CRAH unit cost   # of CRAH   VES unit cost   # of VES   Total
Option 1: Additional CRAHs     $35,000          8           $700            0          $280,000
Option 2: VES Cabinets         $35,000          6           $700            37         $235,900
Total Cost Savings Using VES                                                           $44,100
% Cost Savings Using VES                                                               16%

Utility Costs**

Thermal Management Option      Annual per CRAH   Total
Option 1: Additional CRAHs     $9,023            $72,184
Option 2: VES Cabinets         $9,023            $54,138
Total Cost Savings Using VES                     $18,046
% Cost Savings Using VES                         25%

Assumptions:

* Does not include cost to install.

** Electricity rate of $0.11 per kWh.

Return on Investment (ROI) For VES Deployment

Payback period: 0.3 years

10-year ROI: 266%
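The capital and utility totals in Table 2 follow directly from the unit costs and unit counts. The sketch below reproduces that arithmetic so the comparison can be rerun with other counts or prices; the class and variable names are illustrative. The payback period and 10-year ROI are not recomputed here, because the paper does not state the inputs behind those two figures.

```python
from dataclasses import dataclass

CRAH_UNIT_COST = 35_000        # USD per CRAH unit, from Table 2
VES_UNIT_COST = 700            # USD per VES, from Table 2
ANNUAL_COST_PER_CRAH = 9_023   # USD per CRAH per year, from Table 2


@dataclass(frozen=True)
class Option:
    name: str
    crah_units: int
    ves_units: int

    @property
    def capital_cost(self) -> int:
        # Equipment only; installation is excluded, as in Table 2.
        return self.crah_units * CRAH_UNIT_COST + self.ves_units * VES_UNIT_COST

    @property
    def annual_utility_cost(self) -> int:
        # A passive VES adds no utility cost of its own.
        return self.crah_units * ANNUAL_COST_PER_CRAH


additional_crahs = Option("Option 1: Additional CRAHs", crah_units=8, ves_units=0)
ves_cabinets = Option("Option 2: VES Cabinets", crah_units=6, ves_units=37)

capital_saving = additional_crahs.capital_cost - ves_cabinets.capital_cost
utility_saving = (additional_crahs.annual_utility_cost
                  - ves_cabinets.annual_utility_cost)

print(f"Capital saving: ${capital_saving:,} "
      f"({capital_saving / additional_crahs.capital_cost:.0%})")
print(f"Annual utility saving: ${utility_saving:,} "
      f"({utility_saving / additional_crahs.annual_utility_cost:.0%})")
```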

² Value calculated with the Liebert Power and Cooling Energy Savings Estimator, using an electricity rate of $0.11 per kWh at 90% motor efficiency.

VES Design Considerations

The decision to deploy a VES has multiple implications for layout of other data center elements. First, data

center stakeholders need to analyze the layout of the entire physical infrastructure (both facilities-related and

cabling-related elements) to determine how best to keep overhead room space free from obstruction. Second,

stakeholders should maximize system performance by optimizing cable management within the cabinet: in-

cabinet airflow can be hampered by poor cable management in server cabinets where power and data cable

congestion can block server exhaust fans and obstruct airflow through the VES.

Optimize Room-Level Layout

Several room-level factors need to be considered for effective VES deployment. Designers should route data

cable pathways overhead at the front of cabinet enclosures, leaving the rear of the cabinet open for physical

placement of the vertical duct above the hot exhaust area. Only the front area above the cabinet should be used

for overhead copper and fiber cabling pathways. This should be carefully designed to ensure sufficient cabling

capacity for the entire row of cabinets (see Figure 3a).

An additional challenge for data center stakeholders is integrating the VES layout with lighting and fire

detection/prevention systems. Infrastructure layout decisions are commonly made during the data center design

phase in consultation with facilities experts, with lighting and fire systems positioned to attain maximum

coverage throughout the room and in compliance with local codes. In retrofit applications the VES must be

integrated with existing lighting and fire suppression designs to maintain the effectiveness of those key systems.

Once the vertical duct unit is placed in position, it should be sealed at the points of transition (i.e., ceiling

plenum and cabinet) to prevent hot exhaust air from being released into the data center and recirculating (see

Figure 3b). Cable entry points at tops of cabinets also should be sealed to prevent hot air leakage and

recirculation.

Figure 3a. Effective Location of Overhead Cabling Pathways in Relation to Panduit VES

Figure 3b. Solid Rear Doors Prevent Hot Air Recirculation

To realize the full potential of a Vertical Exhaust System and for effective separation of cabinet exhaust air from

the room ambient air, use blanking panels in empty cabinet spaces and properly seal all cable pass-through

openings. These best practices eliminate the bypass of cold air, recirculation of hot exhaust air, and unwanted

airflow leakage. This also enables server inlets to draw uniformly cool air at the front of the cabinet, eliminating

the need to oversupply cool air to achieve desired thermal performance.

Keep the Exhaust Area Open

The VES functions by containing and channeling hot exhaust air. A

slightly lower pressure in the ceiling return plenum than in the room

helps draw hot exhaust air from the cabinet and into the plenum. The

VES should be properly sized, sealed, and free from obstructions to

avoid introducing choke points that impede the continuous flow of

exhaust air and limit system effectiveness.

The impact of chimney size on system performance is fairly

straightforward: the larger the channel and the fewer the obstructions, the lower the

impedance to server fan airflow and hence the greater the volumetric

airflow through the vertical duct. The more critical limiting factor for

VES effectiveness is the size of the space behind servers, as the

back pressure on the exhaust side of the cabinet may adversely

affect the cooling of IT equipment. To minimize the back pressure

effect on server fans and to optimize VES effectiveness, the space

behind the servers should be free from obstructions and sized to

avoid any substantial back pressure.

The free space behind servers is also a critical parameter to control,

as server cabling density can have a significant impact on the ability

of hot exhaust air to exit the cabinet. For example, in a standard

24-inch-wide server cabinet, the effective open space behind

servers is often reduced by improper cabling and horizontal cable

management (see Figure 4).

Figure 4. Poor in-cabinet cable management practices introduce clutter and choke points in the exhaust area that limit cooling system effectiveness.

As shown in Figure 5, data cables behind servers in a 24-inch-wide cabinet are forced to occupy valuable

exhaust area space, with data cables positioned to one side of the rear of the enclosure and power cables on

the other. Cable management arms also extend approximately four inches into the exhaust area, blocking that

space with cables and reducing the effective size of the exhaust area by 33%. (Loose or poorly managed

cabling can add clutter to this area, reducing cooling system effectiveness even further.)

In contrast, a 32-inch-wide cabinet adds 8 inches of width to the exhaust area without sacrificing depth: cables

are routed into vertical spaces to the sides of the servers to keep the entire exhaust area clear. Data and power

cabling can be managed outside the exhaust area to maximize the airflow channel and maintain optimal cooling

system performance. The result is an effective doubling of the open exhaust area (see Figure 5), dramatically

increasing airflow capacity while observing best cable management practices to ensure system performance.

                                      ~24 in. (600 mm) Cabinet       ~32 in. (800 mm) Cabinet
                                      with Cable Mgmt Arms
Cross-Sectional Exhaust Area          186 sq. in. (1200 sq. cm)      372 sq. in. (2400 sq. cm)
Effective Free Space Behind Servers   ~8 in. (200 mm)                ~12 in. (300 mm)

Figure 5. Top View Showing Impact of Cabinet Width and Cable Management on Exhaust Area Space
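The figures above can be checked with simple arithmetic. The sketch below uses the areas and free-space depths from Figure 5 and reads the 33% reduction as the loss of about 4 inches from a 12-inch free space, which is an inference from the text. The 1,000 CFM cabinet airflow is a hypothetical value chosen only to show how mean air velocity through the exhaust area changes with cross-section; function names are illustrative.

```python
SQ_IN_PER_SQ_FT = 144

# Values from Figure 5.
narrow_area_sq_in = 186   # ~24 in. cabinet with cable management arms
wide_area_sq_in = 372     # ~32 in. cabinet
narrow_depth_in = 8       # effective free space behind servers, 24 in. cabinet
wide_depth_in = 12        # effective free space behind servers, 32 in. cabinet


def percent_reduction(before: float, after: float) -> float:
    """Percentage by which `after` is smaller than `before`."""
    return (before - after) / before * 100


def mean_velocity_fpm(airflow_cfm: float, area_sq_in: float) -> float:
    """Mean air velocity (ft/min) for a volumetric flow through a cross-section."""
    return airflow_cfm / (area_sq_in / SQ_IN_PER_SQ_FT)


area_ratio = wide_area_sq_in / narrow_area_sq_in
depth_reduction_pct = percent_reduction(wide_depth_in, narrow_depth_in)

if __name__ == "__main__":
    assumed_airflow_cfm = 1000  # hypothetical cabinet airflow, not from the paper
    print(f"Open exhaust area ratio (32 in. vs 24 in.): {area_ratio:.1f}x")
    print(f"Free-space reduction from cable arms: {depth_reduction_pct:.0f}%")
    for label, area in (("24 in.", narrow_area_sq_in), ("32 in.", wide_area_sq_in)):
        velocity = mean_velocity_fpm(assumed_airflow_cfm, area)
        print(f"{label} cabinet: about {velocity:.0f} ft/min")
```

For the same airflow, doubling the open area halves the mean velocity through the exhaust space, which is consistent with the lower back pressure the wider cabinet is intended to provide.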

Cable Management Considerations

Panduit has identified several best practices to follow in order for data center stakeholders to maximize VES

effectiveness while maintaining optimal network performance. In general, the exhaust area should be kept as

free from obstruction as possible to enable the unimpeded flow of hot exhaust air from the cabinet into the

vertical duct. Installers should route data and power cables toward cabinet side walls and away from server

exhaust areas. Also, the use of cable management fingers is preferred over cable management arms, as the

arms create an obstruction that impedes airflow.

The following best practices address specific areas within the cabinet where optimal airflow should be

observed.

• In-cabinet exhaust area. The most critical area of the in-cabinet exhaust area is the upper four to six

rack unit spaces. Although cabling-related obstructions below this point can impede airflow,

obstructions above this point present a direct risk to cooling system performance as they block the

vertical duct channel and directly inhibit the ability of air to exit the cabinet (see Figure 6). For

example, server cabinets provisioned with Top of Rack (ToR) switching architectures are prone to this

type of risk, as dense fiber and copper cable bundles (as well as equipment) may protrude several

inches into the exhaust area.

Because fiber enclosures can extend beyond cabinet mounting rails, a flush-mount fiber enclosure is

recommended to prevent fiber tray overhang into the exhaust area. For patching environments, the

use of a flush-mount vertical patching system is recommended to route patch cords from server ports

directly to adjacent patching locations, leaving the exhaust area free from large cable bundles that

block the vertical duct channel opening (see Figure 6). For ToR switching deployments, cable

bundles can be efficiently routed up vertical side channels to minimize the amount of space these

bundles occupy in the exhaust area.

• Power Outlet Units (POUs) and associated cabling. Power outlet units and cabling should be

aligned with data cabling by placing POUs in opposite vertical spaces and positioning power cables

away from IT assets (see Figures 5 and 6). Cables should be sized at minimal lengths (18-24 inches)

to minimize cable slack and increase available space in the exhaust area.

[Figure 6 callouts: "Overhead cabling should be kept clear of the exhaust area." "Equipment can reduce the open exhaust area, creating a choke point that reduces VES effectiveness." "Example of proper data and power cable management, positioning cables in vertical spaces and keeping exhaust area clear."]

Figure 6. Maximize VES Effectiveness by Properly Managing Overhead Pathways and In-Cabinet Patching

• Passive air-blocking devices. Blanking panels should occupy empty rack spaces and air sealing

grommets should be used to seal raised floor tile cutout openings to maintain hot and cold air

separation.

• Cable pathways. Data center designers should deploy overhead cabling pathways at the front of

cabinets in order to ensure enough clearance at the rear of the cabinet for vertical duct units to be

directly positioned above the exhaust area. Also, in-cabinet cable managers provide pathways

allowing cables to run vertically in cabinet side areas; cables are managed out of the path of hot

exhaust airflow and are distanced from equipment chassis.

Retrofit Cases

Although many data center designers incorporate VESs into an initial room design, these systems can be

deployed in conjunction with existing cooling systems to address high heat-generating cabinets that emerge

over time. Room layout conditions under which the VES can be deployed in retrofit applications include

adequate room above the cabinet to fit a vertical duct unit and a hot air return path such as a dropped ceiling

return plenum. The retrofitting of VESs provides additional cooling efficiency at minimal operating cost. A VES

can be integrated with existing hot aisle / cold aisle cooling layouts, whether the data center is a raised floor or

concrete slab type.

Conclusion

High heat loads in the data center are best managed by deploying UPI-based solutions that support uptime

goals, prolong the life of active equipment, and enable the continued support of “green” IT goals. Organizations

cannot afford to waste money on inefficient cooling practices, so data center stakeholders need to be confident

that the thermal management solutions they deploy will achieve cooling goals at minimal cost.

These goals can be realized by the deployment of passive exhaust containment systems. The modular, passive

Vertical Exhaust System (VES) channels heat from the server exhaust directly into the hot air return plenum,

isolating hot exhaust air from equipment cooling air to eliminate data center hot spots due to hot exhaust air

recirculation. Best cabling practices to observe include keeping the in-cabinet exhaust area behind servers as

free from obstructions as possible to enable the unimpeded flow of hot exhaust air into the vertical duct. In

particular, installers should route data and power cables toward cabinet side walls and away from server

exhaust areas, leaving the exhaust area free from large cable bundles.

Panduit’s expertise in the data center space and consultative approach to determining customer requirements

deliver physical infrastructure solutions that optimize the thermal efficiency of the data center infrastructure. Our

comprehensive UPI-based data center solutions include thermal management systems, High Speed Data

Transport (HSDT) systems, cabinet systems, and physical infrastructure management software. This unique

solutions approach enables enterprises to confidently meet broader business objectives for agility, availability,

integration and security.

For more information on comprehensive Panduit data center solutions, or to view the Net-Access Cabinet

brochure featuring the VES, visit www.panduit.com/datacenter.

About PANDUIT

Panduit is a world-class developer and provider of leading-edge solutions that help customers optimize the

physical infrastructure through simplification, increased agility and operational efficiency. Panduit’s Unified

Physical Infrastructure (UPI) based solutions give enterprises the capabilities to connect, manage and

automate communications, computing, power, control and security systems for a smarter, unified business

foundation. Panduit provides flexible, end-to-end solutions tailored by application and industry to drive

performance, operational and financial advantages. Panduit’s global manufacturing, logistics, and e-commerce

capabilities along with a global network of distribution partners help customers reduce supply chain risk. Strong

technology relationships with industry leading systems vendors and an engaged partner ecosystem of

consultants, integrators and contractors together with its global staff and unmatched service and support make

Panduit a valuable and trusted partner.

www.panduit.com · cs@panduit.com · 800-777-3300

WW-CPWP-09, Rev.0, 09/2009 11

©2009 PANDUIT Corp. All rights reserved.

You might also like

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryFrom EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryRating: 3.5 out of 5 stars3.5/5 (231)

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)From EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Rating: 4.5 out of 5 stars4.5/5 (119)

- Never Split the Difference: Negotiating As If Your Life Depended On ItFrom EverandNever Split the Difference: Negotiating As If Your Life Depended On ItRating: 4.5 out of 5 stars4.5/5 (838)

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaFrom EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaRating: 4.5 out of 5 stars4.5/5 (265)

- The Little Book of Hygge: Danish Secrets to Happy LivingFrom EverandThe Little Book of Hygge: Danish Secrets to Happy LivingRating: 3.5 out of 5 stars3.5/5 (399)

- Grit: The Power of Passion and PerseveranceFrom EverandGrit: The Power of Passion and PerseveranceRating: 4 out of 5 stars4/5 (587)

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyFrom EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyRating: 3.5 out of 5 stars3.5/5 (2219)

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeFrom EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeRating: 4 out of 5 stars4/5 (5794)

- Team of Rivals: The Political Genius of Abraham LincolnFrom EverandTeam of Rivals: The Political Genius of Abraham LincolnRating: 4.5 out of 5 stars4.5/5 (234)

- Shoe Dog: A Memoir by the Creator of NikeFrom EverandShoe Dog: A Memoir by the Creator of NikeRating: 4.5 out of 5 stars4.5/5 (537)

- The Emperor of All Maladies: A Biography of CancerFrom EverandThe Emperor of All Maladies: A Biography of CancerRating: 4.5 out of 5 stars4.5/5 (271)

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreFrom EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreRating: 4 out of 5 stars4/5 (1090)

- Her Body and Other Parties: StoriesFrom EverandHer Body and Other Parties: StoriesRating: 4 out of 5 stars4/5 (821)

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersFrom EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersRating: 4.5 out of 5 stars4.5/5 (344)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceFrom EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceRating: 4 out of 5 stars4/5 (890)

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureFrom EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureRating: 4.5 out of 5 stars4.5/5 (474)

- The Unwinding: An Inner History of the New AmericaFrom EverandThe Unwinding: An Inner History of the New AmericaRating: 4 out of 5 stars4/5 (45)

- The Yellow House: A Memoir (2019 National Book Award Winner)From EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Rating: 4 out of 5 stars4/5 (98)

- On Fire: The (Burning) Case for a Green New DealFrom EverandOn Fire: The (Burning) Case for a Green New DealRating: 4 out of 5 stars4/5 (73)

- Hetronic Bms-2 (GB)Document20 pagesHetronic Bms-2 (GB)mohamed92% (12)

- Rockwell FactoryTalk Studio BasicsDocument7 pagesRockwell FactoryTalk Studio BasicsBryn WilliamsNo ratings yet

- Emerson - Transformer UPS Vs Transformerless UPSDocument12 pagesEmerson - Transformer UPS Vs Transformerless UPSadrian_udrescuNo ratings yet

- Wiring Diagram ISB CM2150 and ISB CM2150 E: Engine Control ModuleDocument1 pageWiring Diagram ISB CM2150 and ISB CM2150 E: Engine Control ModuleOmar HernándezNo ratings yet

- Modbus Over Serial LineDocument44 pagesModbus Over Serial Linearindammanna123No ratings yet

- Raspberry PiDocument19 pagesRaspberry PiAnonymous E4Rbo2s100% (1)

- Tas6xx Rev 05 Install Man 600-00282-000Document132 pagesTas6xx Rev 05 Install Man 600-00282-000Angel FrancoNo ratings yet

- h8115 Vmware Vstorage Vmax WPDocument0 pagesh8115 Vmware Vstorage Vmax WPAshish SinghNo ratings yet

- ATM MachineDocument19 pagesATM Machinevikas00707No ratings yet

- IEC 62310 For Static Transfer SystemsDocument10 pagesIEC 62310 For Static Transfer SystemsFlo MircaNo ratings yet

- Twido Modbus EN PDFDocument63 pagesTwido Modbus EN PDFWilly Chayña LeonNo ratings yet

- DKSIPM204B202 TLXImpedanceinLargePVPlants WEBDocument4 pagesDKSIPM204B202 TLXImpedanceinLargePVPlants WEBadrian_udrescuNo ratings yet

- Classification of Electrical Installations in Healthcare Jul10 enDocument20 pagesClassification of Electrical Installations in Healthcare Jul10 enadrian_udrescuNo ratings yet

- The Green Approach To Electrical Power Availability: White PaperDocument9 pagesThe Green Approach To Electrical Power Availability: White Paperadrian_udrescuNo ratings yet

- Uninterruptible Power Supplies European GUideDocument60 pagesUninterruptible Power Supplies European GUidementongNo ratings yet

- SNMP: Simplified: F5 White PaperDocument10 pagesSNMP: Simplified: F5 White PaperJuan OsorioNo ratings yet

- No Harmony in HarmonicsDocument8 pagesNo Harmony in Harmonicsadrian_udrescuNo ratings yet

- Opw 0Document129 pagesOpw 0Peanut d. DestroyerNo ratings yet

- Chloride - Data Centre Tier ClassificationsDocument29 pagesChloride - Data Centre Tier ClassificationsAdeel NaseerNo ratings yet

- No Harmony in HarmonicsDocument8 pagesNo Harmony in Harmonicsadrian_udrescuNo ratings yet

- Audit-Free Cloud Storage via Deniable Attribute-based EncryptionDocument15 pagesAudit-Free Cloud Storage via Deniable Attribute-based EncryptionKishore Kumar RaviChandranNo ratings yet

- The Big Problem With Renewable EnergyDocument2 pagesThe Big Problem With Renewable EnergyDiego Acevedo100% (1)

- Digitalization in HealthcareDocument2 pagesDigitalization in HealthcareAlamNo ratings yet

- Unit 2 Smart GridDocument2 pagesUnit 2 Smart GridYash TayadeNo ratings yet

- Electro Magnetic Braking System Review 1Document18 pagesElectro Magnetic Braking System Review 1sandeep Thangellapalli100% (1)

- Jurnal Inter 5Document5 pagesJurnal Inter 5Maingame 2573No ratings yet

- Ather Bank StatementDocument12 pagesAther Bank StatementsksaudNo ratings yet

- Flare Dec+JanDocument87 pagesFlare Dec+Janfarooq_flareNo ratings yet

- Gama Urban LED - Iguzzini - EspañolDocument52 pagesGama Urban LED - Iguzzini - EspañoliGuzzini illuminazione SpANo ratings yet

- Toyota Target Costing SystemDocument3 pagesToyota Target Costing SystemPushp ToshniwalNo ratings yet

- Replication Avamar DatadomainDocument94 pagesReplication Avamar DatadomainAsif ZahoorNo ratings yet

- Whitlock DNFTDocument4 pagesWhitlock DNFTJoe TorrenNo ratings yet

- Arkel Door Control KM 10Document3 pagesArkel Door Control KM 10thanggimme.phanNo ratings yet

- Statistical and Analytical Software Development: Sachu Thomas IsaacDocument10 pagesStatistical and Analytical Software Development: Sachu Thomas IsaacSaurabh NlykNo ratings yet

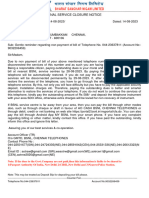

- Final Service Closure NoticeDocument2 pagesFinal Service Closure Noticeanudasari1301No ratings yet

- Webp Container SpecDocument14 pagesWebp Container SpecTorNo ratings yet

- L11 - Design A Network-2022Document36 pagesL11 - Design A Network-2022gepoveNo ratings yet

- Ensure Cisco Router Redundancy With HSRPDocument3 pagesEnsure Cisco Router Redundancy With HSRPrngwenaNo ratings yet

- Hitachi Air-Cooled Chiller Installation, Operation and Maintenance ManualDocument52 pagesHitachi Air-Cooled Chiller Installation, Operation and Maintenance Manualmgs nurmansyahNo ratings yet

- PLC Trainer Simulates Automation ControlsDocument2 pagesPLC Trainer Simulates Automation Controlssyed mohammad ali shahNo ratings yet

- OpendTect Administrators ManualDocument112 pagesOpendTect Administrators ManualKarla SantosNo ratings yet

- Current Trends and Issues in ITDocument30 pagesCurrent Trends and Issues in ITPol50% (2)