Biostatistics Collaboration of Australia

University of Sydney

Workplace Project Portfolio

Containing: Preface, Project Report I and Project Report II for

Units of study: WPP A and WPP B

Student name: Etel Mikes

Student ID: 0155584

Sydney, December 2006

To my mother Melanija Mikes and father Mihály Mikes

Abbreviations

AE Adverse event

AFT Accelerated failure time

CR Complete response

CRF Case report form

DSMB Data safety monitoring board

ECOG Eastern cooperative oncology group

ESMO European society for medical oncology

HER2 Human epidermal growth factor receptor 2

HT Study medication taken by patients in treatment groups A and B

LD Longest diameter

LVEF Left ventricular ejection fraction

PD Progressive disease

PH Proportional hazards

PR Partial response

RECIST Response evaluation criteria in solid tumors

SAS Statistical analysis system commercial software

SD Stable disease

STATA Commercial statistical software

TTP Time to progression

X Study medication taken by patients in treatment group A

Student: Etel Mikes ID: 0155584 WPP - Abbreviations

Workplace Project Portfolio

Preface

Table of Contents

1. CONTEXT OF THE PROJECT
2. SCOPE OF WORK
3. REFLECTION ON THE LEARNING PROCESS
3.1 RELATIONSHIP OF THE PORTFOLIO WORK TO COURSEWORK UNDERTAKEN
3.2 CHANGE IN KNOWLEDGE AND SKILLS AS A RESULT OF UNDERTAKING THE PROJECTS
3.3 LESSONS LEARNT
4. ETHICAL CONSIDERATION
5. PROTECTION OF PROPRIETARY INFORMATION
6. OTHER SOURCE OF ASSISTANCE

1. Context of the project

The two workplace projects are tied to one clinical trial conducted at Roche Products Pty Ltd looking at the efficacy and safety of HT with or without X in patients with human epidermal growth factor receptor 2 (HER2)-positive advanced or metastatic breast cancer.

The primary objective of this study was to compare the overall response rate of

patients randomized to HT with overall response rate of patients randomized to HT

plus X.

As a SAS programmer at Roche Products Pty Ltd assigned to work on this Phase II clinical trial, I was responsible for the creation of report objects required for abstract preparation, manuscript writing and the final statistical report. Working closely with the statistician responsible for the study, and having gained a theoretical background during my coursework over the past few years, I was given the opportunity to take on additional tasks as part of my workplace projects in our Biostatistics Department.

2. Scope of work

The first project encompassed the evaluation of the study data collection and

making sure that the available data are suitable for the planned statistical analyses. It

also evaluated the design of the study and the use of the Response evaluation

criteria in solid tumors (RECIST) in comparing the overall response in the two

treatment arms.

Since there were set timelines for the presentation of the first efficacy and safety results of the clinical trial, I had to ensure that the data collection, data cleaning and data transfer processes were all well planned and coordinated. Because the statistics and data management teams working on this clinical trial were located remotely from each other, communication was challenging; it was carried out mainly by email, with regular teleconferences for issue resolution.

The second project involved the selection of the appropriate statistical models for

the expanded analyses of the clinical trial data, writing the SAS code to perform the

analysis and interpreting the results obtained from the point of view of clinical

relevance.

The use of logistic regression in a real-life workplace setting, which was part of the second project, gave me an insight into how challenging it can be to reliably interpret the possible effects of covariates on a primary efficacy variable, particularly when there are missing data and in the presence of confounding.

3. Reflection on the learning process

The workplace projects undertaken as part of this Portfolio provided me with the

opportunity to gain hands-on experience of what it means to be involved in a range of

professional duties related to the design and analysis of clinical research.

The first project, carried out in collaboration with the Data Management team, emphasized the development of communication and negotiation skills through teamwork and activities such as attending Study Management Team teleconferences and face-to-face meetings.

The second project exposed me to the development and implementation of the

algorithms for the use of explorative techniques, and interpretation of the results of

the analyses.

3.1 Relationship of the portfolio work to coursework undertaken

The two projects I was involved in during the last eight months at my workplace are

mostly related to the Design of Experiments and Randomised Clinical Trials (DES),

Data Management and Statistical Computing (DMC), Survival Analysis (SVA),

Categorical Data and GLMs (CDA) units of study that I’ve undertaken in my

coursework.

By working on the projects defined for this unit of study, I realized how interrelated the many concepts acquired in different units of the coursework are, and how these units of study, like pieces of a puzzle, address different aspects of the same clinical trial.

3.2 Change in knowledge and skills as a result of undertaking the projects

Most of the practical examples and exercises during the graduate coursework were

done using the STATA software. I found it challenging but in the end rewarding to

learn and apply the theoretical knowledge that I’ve gained over years using the SAS

v8.2 software which is widely used at my workplace.

3.3 Lessons learnt

As part of my second workplace project, for which I did not have time constraints

apart from the deadline to hand in the portfolio for this unit of study, I started on

several different things at a time. I got easily carried away with different approaches

and possibilities, and had not finished one thing before jumping onto something else.

In the end I realized that though it is interesting to do exploratory analysis, one has to

keep focused on the objective of the analysis in order to respect given timelines. This

is a lesson from which I can learn for future work projects.

4. Ethical consideration

This clinical trial was conducted in accordance with the Declaration of Helsinki and the principles of Good Clinical Practice as described in the International Conference on Harmonisation (ICH) guidelines for Good Clinical Practice, as well as in accordance with all local ethical and regulatory requirements.

The projects undertaken as part of this portfolio followed the same principles of

confidentiality with respect to documentation related to the clinical trial and subject

records.

5. Protection of proprietary information

Some details related to the protocol, study conduct or results are omitted from this

portfolio for reasons of confidentiality.

Since the results of the clinical trial have not been presented yet to a wide audience,

and to protect the sponsor's proprietary information, the treatment information is

partially blinded in this portfolio.

The above restriction should not affect the understanding of the concepts and

methods used during my workplace project work.

6. Other source of assistance

Apart from having regular consultations with Peter Button, the lead statistician for this

clinical trial who was also my supervisor for the workplace projects, I had a chance to

contact and consult colleagues from the Roche Welwyn office in the UK, who had

experience with the use of the accelerated failure time (AFT) model for the analysis

of time-to-event data.

Workplace Project Portfolio

Project Report I

Design and Data Management

Table of Contents

1. PROJECT DESCRIPTION
1.1 BACKGROUND, RATIONALE FOR PROJECT
1.2 AIM OF THE PROJECT
2. OVERVIEW OF STUDY DESIGN AND RELATED ISSUES
2.1 DATA-DEPENDENT STOPPING
2.2 REFLECTION ON THE RECIST CRITERIA
3. EVALUATION OF DATA COLLECTION FROM THE PERSPECTIVE OF STATISTICAL ANALYSIS
3.1 OBTAINING DATA
3.2 CRF DESIGN ISSUES WITH THE DATA
4. DATA MANAGEMENT FROM THE PERSPECTIVE OF STATISTICAL ANALYSIS
4.1 EVALUATING THE AMOUNT OF DATA CLEANING NEEDED
4.2 TEAMWORK
4.3 DATA CLEANING/MANIPULATION
5. REPORT SUMMARY
6. REFERENCES

1. Project description

This workplace project comprised the evaluation of data collection and data

management on an open-label randomized phase II study of HT and X in

combination, versus HT, in patients with advanced and/or metastatic breast cancers

that overexpress HER2. This project was geared towards making sure that the

available data are suitable for the primary study analysis defined in the Analysis Plan

[1]. In this clinical trial, overall, 222 patients were enrolled and received study drug

from January 2002 to September 2005.

1.1 Background, rationale for project

The results of the primary efficacy analysis of the clinical trial, which investigated the efficacy and safety of the triple combination of HT and X compared with HT as 1st-line treatment for HER2-positive advanced/metastatic breast cancer, were to be presented for the first time in Basel, Switzerland at the beginning of June 2006 to a small audience consisting of the Study Management Team, the Clinical Science Leader, International Medical Leaders and a Documentation Specialist.

Following this the results were to be used for presentation at the European Society

for Medical Oncology (ESMO) in September 2006.

The above milestones also set strict timelines for this workplace project.

Both biostatistics and data management activities for the study were performed

by the Roche Basel teams until October 2005, at which time Roche Australia took

over the biostatistics activities – approximately six months prior to the planned

database lock.

1.2 Aim of the project

The aim of this project was to evaluate the data collection and data management

process that was under way in this study at the stage when our biostatistics team

took over the responsibility of data analysis for the final study reporting event, and to

give guidance and input into further practice. This project also took on coordinating

and giving advice about particular data collection and data management issues that

arose during the busy data cleaning period preceding the clinical cut-off date for the

primary study analysis.

2. Overview of study design and related issues

The clinical trial I worked on as part of this workplace project was an open-label,

randomized, multi-center, comparative phase II study of HT administered

intravenously once every 3 weeks, with or without oral X administered twice-daily for

2 weeks followed by a 1 week rest period. Treatment in both arms will continue until

progressive disease, unmanageable toxicity or patient request.

Patients will be assessed for treatment response or disease progression according to

the schedule outlined in the following table until progression is documented.

Table 1. Overview of study design

Study period                                                          Schedule
Screening and Baseline                                                From Day -28 to +1
Day 1 until progressive disease                                       Drug treatment. Tumor assessment every 6 weeks until disease progression.
After cycle 8 until disease progression or one year from enrollment   Tumor assessment every 12 weeks
After one year from enrollment                                        Disease assessment according to routine practice
28 days after the last dose of study drug                             Safety follow-up

Survival status: until 18 months after the last patient enrolled

Clinical cut-off for the primary study analysis was six months after the last patient

enrolled. However, survival data will be collected until 18 months after the last patient

enrolled. These results will be reported a year later in an addendum to the study

report.

2.1 Data-dependent stopping

This clinical trial provided me with an example for understanding the methods and

rationale for determining whether a study should be stopped early [1]. This included

getting familiar with the role and findings of the Data and Safety Monitoring Board,

and the Interim Safety Report.

Recruitment on this Phase II clinical trial began in January 2002 and was suspended

in February 2003, after enrolment of 65 patients, following concerns from the Data

Safety Monitoring Board (DSMB).

Recruitment remained suspended for three months while follow-up safety data were

collected on the enrolled study population.

The study investigators were informed of the results of the Interim Safety Analysis conducted in April 2003 (the data cut-off for the safety analysis of the 65 patients was 7 April 2003), and their awareness was raised regarding the higher than expected incidence of febrile neutropenia seen in the study.

After detailed assessment in June 2003, the DSMB were satisfied, and requested

amendments to the protocol and patient information sheet to address the findings.

The protocol changes were designed to ensure selection of patients better able to

tolerate the therapy, and monitoring the course of any neutropenia.

Following the amendments, the study was re-opened in June 2003.

The DSMB requested a further safety data review of the study when another 45

patients had been enrolled, giving a total of 110 patients, half the expected overall

recruitment.

Following the second Interim Safety Report, recruitment into the study continued as

of the end of June 2004.

In familiarizing myself with the project that I was assigned to, I had to make sure that

I understood the implications of the stopping and re-opening of the study. The protocol

amendment included changes to the eligibility criteria such as widening the inclusion

criteria for the enrollment of patients with lower Eastern cooperative oncology group

(ECOG) performance status, and excluding patients with dyspnea at rest due to

malignant or other diseases and patients on chronic concomitant steroids. Due to

such amendments the baseline characteristics of patients before and after stopping

were slightly different. We had to monitor whether these differences in baseline

characteristics of patients could potentially impact on the outcome. No such

difference was found. In addition, no hypothesis testing for efficacy was done during

the stopping and re-starting of the trial, and therefore there was no impact on the

alpha level for the final statistical analysis.

2.2 Reflection on the RECIST criteria

The primary measure of treatment efficacy was the overall tumor response rate.

Evaluation of target lesions and non-target lesions was in accordance with the

Response evaluation criteria in solid tumors (RECIST) [2].

To be able to work with the data collected for this clinical trial it was important to

understand the elements of the RECIST criteria for the measurement of tumor

response.

Evaluation of target lesions

All patients with measurable lesions (up to a maximum of 5 lesions per organ and 10

lesions in total), were identified as having target lesions. These lesions were

measured and recorded at baseline. Target lesions were selected on the basis of

their size (lesions with the longest diameter) and their suitability for accurate

repetitive measurements (either by imaging techniques or clinically). A sum of the

longest diameter (LD) for all target lesions was calculated and reported as the

baseline sum longest diameter. The baseline sum LD was used as reference to

further characterize the objective tumor response of the measurable dimension of the

disease.

The evaluations of target lesions were determined by the following RECIST criteria.

Complete Response (CR): Disappearance of all target lesions

Partial Response (PR): At least a 30% decrease in the sum of the LD of target

lesions taking as reference the baseline sum longest diameter.

Stable Disease (SD): Neither sufficient shrinkage to qualify for PR nor sufficient increase to qualify for PD, taking as reference the smallest sum LD since the treatment started.

Progression (PD): At least a 20% increase in the sum of LD of target lesions taking

as reference the smallest sum LD recorded since the treatment started or the

appearance of one or more new lesions.

Evaluation of non-target lesions

All other lesions (or sites of disease) were identified as non-target lesions and were

also recorded at baseline. Measurements were not required, but the presence or absence of each was noted throughout follow-up.

The evaluations of non-target lesions were determined by the following RECIST

criteria.

Complete Response (CR): Disappearance of all non-target lesions and

normalization of tumor marker level.

Non-Complete Response: Persistence of one or more non-target lesions (non-CR)

and/or maintenance of tumor marker level above the normal limits.

Progression (PD): Appearance of one or more new lesions and/or unequivocal

progression of existing non-target lesions.

The following table shows how overall response is determined at each treatment

cycle, taking into account the tumor responses in target and non-target lesions and

the appearance of new lesions during that cycle.

Table 2. Overall response criteria at each treatment cycle

Target Lesions   Non-Target Lesions   New Lesions   Overall Response
CR               CR                   No            CR
CR               Non-CR/Non-PD        No            PR
PR               Non-PD               No            PR
SD               Non-PD               No            SD
PD               Any                  Yes or No     PD
Any              PD                   Yes or No     PD
Any              Any                  Yes           PD
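The decision rules in Table 2 can be written as a small lookup function. The following is a minimal sketch in Python rather than the SAS used in the project; the function name `overall_response` and the string codes for the non-target assessment ("CR", "Non-CR", "PD") are assumptions made for illustration and are not taken from the study programs.

```python
def overall_response(target, non_target, new_lesions):
    """Combine one cycle's assessments into an overall response (Table 2).

    target:      "CR", "PR", "SD" or "PD" for the target lesions
    non_target:  "CR", "Non-CR" or "PD" for the non-target lesions
    new_lesions: True if any new lesion appeared during the cycle
    """
    if target not in ("CR", "PR", "SD", "PD"):
        raise ValueError(f"unknown target lesion response: {target!r}")
    if non_target not in ("CR", "Non-CR", "PD"):
        raise ValueError(f"unknown non-target lesion response: {non_target!r}")

    # Last three rows of Table 2: any sign of progression gives PD overall.
    if new_lesions or target == "PD" or non_target == "PD":
        return "PD"
    # CR overall requires CR in both lesion groups; otherwise it counts as PR.
    if target == "CR":
        return "CR" if non_target == "CR" else "PR"
    # PR or SD in target lesions with non-progressing non-target lesions.
    return target


print(overall_response("CR", "Non-CR", False))
```

Checking for progression first mirrors the last three rows of the table, where a single PD signal overrides every other assessment.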

One problem I see with this definition is that, according to the study protocol, partial and complete responses have to be confirmed at consecutive tumor assessments at least 4 weeks apart in order to count as responses, whereas confirmation of progressive disease is not required. A possible consequence is that, without confirmation of progressive disease, some patients could be taken off study medication too early.

My perception is that the use of RECIST criteria in this clinical trial yielded an

excessively conservative approach.

Another problem that I see relates to the definition of progressive disease for target lesions itself. Whereas partial response is measured in relation to the baseline tumor measurement, progressive disease is measured in relation to the smallest sum LD recorded at any time during the study. Under this definition, the sum of longest diameters at some tumor assessment during the study can be smaller than the baseline value and the patient can still be classified, based on this assessment, as having progressive disease.

For example: the baseline sum of longest diameters is 20 mm, the value at cycle 4 is 10 mm, and the value at cycle 8 is 13 mm. The cycle 8 value is a 30% increase over the smallest recorded sum (10 mm) and therefore classifies as progression, even though it is still 35% below baseline. As a consequence, the patient is taken off the study drug without confirmation of the latest tumor measurement.
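The asymmetry between the two reference values can be made concrete with a short sketch of the target lesion rules listed earlier in this section. It is written in Python for illustration only; the function names are hypothetical, sums are assumed to be in millimetres, and progression is checked before partial response because that ordering reproduces the classification described in the example.

```python
def classify_target_lesions(baseline_sum, nadir_sum, current_sum, new_lesions=False):
    """Classify one assessment of the sum of longest diameters (LD).

    baseline_sum: sum LD at baseline (reference for PR)
    nadir_sum:    smallest sum LD recorded so far (reference for PD)
    current_sum:  sum LD at the assessment being classified
    """
    if new_lesions:
        return "PD"
    if current_sum == 0:
        return "CR"  # all target lesions have disappeared
    # At least a 20% increase over the smallest recorded sum.
    # Cross-multiplied (x10) so whole-millimetre inputs compare exactly.
    if current_sum * 10 >= nadir_sum * 12:
        return "PD"
    # At least a 30% decrease from the baseline sum.
    if current_sum * 10 <= baseline_sum * 7:
        return "PR"
    return "SD"


def responses_over_time(baseline_sum, follow_up_sums):
    """Classify successive assessments, updating the smallest sum as we go."""
    nadir = baseline_sum
    responses = []
    for current in follow_up_sums:
        responses.append(classify_target_lesions(baseline_sum, nadir, current))
        nadir = min(nadir, current)
    return responses


# The example from the text: baseline 20 mm, then 10 mm and 13 mm.
print(responses_over_time(20, [10, 13]))
```

In the example the 13 mm assessment would still meet the partial response threshold relative to baseline (at most 14 mm), which is exactly why the order of the checks matters.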

3. Evaluation of data collection from the perspective of statistical

analysis

The framework for data collection for this clinical trial was defined in the protocol to

ensure quality data at the end of the study.

As this particular clinical trial had protocol amendments mentioned in section 2.1

above, and since our biostatistics team in Dee Why took over the responsibility for

this study from our colleagues in Basel, Switzerland, an evaluation of data collection

was necessary.

3.1 Obtaining data

The study data for this clinical trial were collected on Case report forms (CRFs) and

entered into Oracle Clinical on a Unix platform.

Where possible, standard data definitions of variables commonly collected for

oncology studies at Roche were used.

The data collected in the Oracle Clinical database were mapped into SAS general

data models according to the data design specification. The following table lists the

SAS datasets holding all the data collected from CRFs.

Table 3. SAS datasets created from collected data

SAS dataset name Description of dataset

AE Adverse events and intercurrent illnesses

CENT Center and investigator information

COMT Comments

DEMO Demographic data

DIAG Previous or current diseases

DIED Data related to death of a patient

EFEX Efficacy data and special safety data

EXCL Data on exclusion from statistical analysis

EXIT Data on completion or premature termination

LABP Laboratory results

MEDO Treatments other than trial medication

MEDT Trial medication and adjuvant medication

TUMO Tumor assessment data

A regular overnight batch job was set up to transfer data from the Oracle Clinical administration environment to the Biostatistics Computing Environment, which were hosted on different Unix platforms.

Additional information needed for the analysis was captured in value added datasets

for demography and efficacy variables.

Occasionally, additional data transfers were arranged upon request. For the receipt of the final snapshot data a special arrangement was made, and the autocopy lock procedure ensured that the snapshot would not be overwritten.

3.2 CRF design issues with the data

As mentioned already in section 2.1 above, this study had protocol amendments.

Each such amendment potentially implies changes in the data collection process and

consequently deviations from the originally designed CRF.

One such example in this clinical trial was the protocol amendment related to the

extension of the follow up data collection period beyond the initial 17 cycles of study

drug intake. Because the design, printing and distribution of the extended CRF were delayed, the investigators did not record the data in a consistent manner. This problem had to be rectified.

The solution to this data collection deficiency implied:

• Changes to the algorithm previously developed for the calculation of visit

windows, since days from baseline to assessments or treatment administration

were judged to provide a more meaningful reference to the treatment stage

than the cycle numbers recorded on the CRF pages. Given the inconsistent

recording of cycle information on the CRF, cycle numbers for tumor assessments,

study medication and physical exams were re-assigned according to the time

windows defined in Table 4. This ensured that cycle events such as efficacy

and safety assessments were analysed at comparable times for all patients. In

case of physical exam measures, if more than one value was within a specific

time window, then the last value was used.

Table 4. Time windows for efficacy analyses

Visit                Minimum Study Day   Scheduled Study Day   Maximum Study Day
Screening/Baseline   -999                -1                    0
Cycle 1              1                   1                     14
Cycle 2              15                  22                    35
Cycle 3              36                  43                    56
Cycle 4              57                  64                    77
Cycle 5              78                  85                    98
Cycle 6              99                  106                   119
Cycle 7              120                 127                   140
Cycle 8              141                 148                   161
Cycle 9              162                 169                   182
…                    …                   …                     …

Continuing with this sequence for all cycles.

• Dealing with duplicate observations entered due to CRF pages entered twice.

After the protocol amendment a new CRF was created which included additional

pages for higher cycle numbers than the initially planned 17 cycles. Recording of

data for some patients already in the study continued on old CRFs, but in some

cases a new CRF form was filled out as well. In these situations we ended up

having the same data entered twice in the database. These data entry problems

were identified and reported to the data management team. The database

also contained duplicate observations due to multiple or unscheduled

assessments. Duplicate assessments with same date and same value were

deleted in our programs.

• Programming additional Data Quality Checks in SAS to capture other possible

errors introduced by the additional cycle information collected. Examples of such

additional data checks are:

- visit date incorrect in relation to scheduled visit date

- last dose date not equal to last treatment date

- date of end of treatment before date of start of treatment

- AE resolution date missing when resolution reported on study completion

CRF page
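The re-assignment of cycle numbers and the handling of duplicates described in the list above can be sketched as follows. This is an illustration in Python, not the SAS code used in the project. The window formula is inferred from the rows shown in Table 4 (from cycle 2 onward each window is 21 days wide and starts on day 15), and the record layout with `patient`, `study_day` and `value` keys is a hypothetical stand-in for the real datasets, with study day standing in for the assessment date.

```python
CYCLE_LENGTH = 21  # days; HT was given once every 3 weeks


def assign_cycle(study_day):
    """Map a study day to a visit window: 0 = screening/baseline, n = cycle n."""
    if study_day <= 0:
        return 0
    if study_day <= 14:
        return 1
    # From cycle 2 on, each window in Table 4 is 21 days wide, starting on day 15.
    return (study_day - 15) // CYCLE_LENGTH + 2


def drop_exact_duplicates(records):
    """Drop repeats with the same patient, study day and value, keeping the first."""
    seen = set()
    unique = []
    for rec in records:
        key = (rec["patient"], rec["study_day"], rec["value"])
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique


def last_value_per_window(records):
    """Return {(patient, cycle): value}, keeping the latest value in each window."""
    latest = {}
    for rec in sorted(records, key=lambda r: r["study_day"]):
        latest[(rec["patient"], assign_cycle(rec["study_day"]))] = rec["value"]
    return latest


# Hypothetical physical exam values for one patient.
exams = [
    {"patient": "P01", "study_day": 22, "value": 70.5},
    {"patient": "P01", "study_day": 22, "value": 70.5},  # CRF page entered twice
    {"patient": "P01", "study_day": 30, "value": 71.0},  # same window as day 22
    {"patient": "P01", "study_day": 43, "value": 69.8},
]
clean = drop_exact_duplicates(exams)
print(last_value_per_window(clean))
```

Deriving the cycle from the study day, rather than trusting the cycle number written on the CRF page, is what makes assessments comparable across patients. The last-value rule shown here is the one the text describes for physical exam measures.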

4. Data Management from the perspective of statistical analysis

The scope of data management work during the conduct of a clinical trial is usually

defined at the beginning of the study conduct once the CRF design phase has been

finished.

For this clinical trial the requirements for data cleaning were defined in the Data Review

Plan established by the Study Management Team [3]. To ensure appropriate actions

are taken in response to the review, the Data Review Plan lays out the requirements

defining:

• What data are to be reviewed

• Who reviews what

• The timing and frequency of the review

• The methods to be used for the review

The Data Review Plan is one of the components of a more comprehensive plan, the

Data Quality Plan which for this study includes the following information:

• Data listings used for review

• Protocol Violation, Deviation and Eligibility Rules

• Second pass data entry procedures

As the trial proceeds, data quality must be considered and maintained. For this study

a comprehensive validation check program was used to verify the data that were

entered into the study database. These data checks were defined and set up in the

Oracle Clinical Database Management System to run on a daily basis. As a result

of these programmed validation checks, discrepancy reports were triggered for

review by investigators. Upon completion of study participation, each patient’s data were

second-pass entered into the study database from the original, signed CRFs, and re-

run through the validation checks suite for any remaining discrepancies.

4.1 Evaluating the amount of data cleaning needed

The set of data validation checks defined by the Data Management at the beginning

of the study included a set of generic data consistency checks such as checks for

Demographic, Vital Signs and Adverse Events data, as well as study-specific

validation checks.

There were also validation checks defined outside the Oracle Clinical Database Management System. Some of these additional checks were generic data quality checks programmed in SAS on the Unix operating system. Others were study-specific checks oriented towards the statistical analysis.

It is worth noting again that all the above-mentioned checks were defined well before our biostatistics team took over the responsibility for the statistical analysis and reporting for the upcoming reporting event.

It became clear that the number of validation checks programmed over the course of the study had built up to the point where discrepancy management required an excessive amount of time.

As part of my project I took the responsibility to identify the subset of relevant

validation checks to be run during the process of data cleaning that preceded the

database lock, thus avoiding unnecessary use of resources and achieving results within the

set timelines.

The evaluation of the number of relevant validation checks, and consequently the

amount of data cleaning needed was based on the safety parameters that were part

of our report objects, such as adverse event (AE) summaries by frequency counts,

contingency tables comparing the pattern of change for lab parameters, listings with

change from baseline for vital signs etc. It was equally based on the efficacy

parameters to be analysed and reported, involving tumor measurement information

and important dates for the overall response and time to event variables.

In the evaluation of data cleaning needed it was also important to evaluate the

resource availability and make appropriate decisions about resource allocation in

order to meet the set timelines.

4.2 Teamwork

Communication between the remotely located data management team and

biostatistics team was an important part of this workplace project.

I decided to use an Excel workbook as a base for the management of data issues

found using the validation checks programmed in SAS. It was a practical tool that

was owned by both parties: data management team in Basel, Switzerland and

biostatistics team in Dee Why, and as such was mutually maintained.

Important communication took place in regular teleconferences that were set up to resolve ongoing issues. These teleconferences were attended by a wider audience, including clinical scientists, who provided important input on both safety and efficacy data issues.

4.3 Data cleaning/manipulation

Though data review for quality should be an ongoing activity as data collection

proceeds, it is most important before database lock in time for reporting the results of

the clinical trial.

To meet the set deadlines it was important to correct the identified discrepancies in

a timely manner. For the Data Manager this meant sending a list of errors back to the

person collecting the data for them to correct.

It also happened that, for some reason, the data could not be corrected. In that case

the data manager escalated the problem to the study management team, which

decided what to do with the unresolved discrepancy. One approach to resolving

such problems was to declare the discrepancy irresolvable, document it properly

and leave the data as they were. This meant that we in the Statistics department had to make

allowance for possibly incorrect data and deal with them programmatically.

The realistic study management goal of meeting the data quality specification for this

clinical trial was achieved seven weeks after the clinical cut-off date, and the database

was locked and deemed ready for statistical analyses.

5. Report summary

The quality of data collection and data management activities is of high importance for

the accuracy of conclusions drawn from clinical trials.

Data management tasks are usually labor intensive and time consuming. The set of

validation checks defined to run on study data should be carefully selected in order

to target the data relevant for statistical reporting. For that reason the statistician’s

contribution to the specification of the Data Quality Plan and review of Data Quality

Checks is very important at the early stage of the clinical trial conduct.

Data cleaning should carefully balance data quality against resource

availability without compromising the precision of the scientific results.

Electronic data capture, an emerging technology for data collection and data

management, will also introduce a shift in the definition of relevant validation checks

for assuring that data are suitable for statistical analysis.

There will always be issues originating from data collection or data management

activities. In resolving those issues, well-established and constant communication

between the data management and biostatistics departments is of utmost importance.

6. References

1. Statistical Analysis Plan for protocol MO16419, Roche Products Pty Ltd, 18th April 2006; 7

2. Therasse P, Arbuck SG, Eisenhauer EA et al, New Guidelines to Evaluate the Response to

Treatment in Solid Tumors, Journal of the National Cancer Institute, Vol 92, No 3, Feb 2000;

206-209

3. SMT Data Review Plan for protocol MO16419, Roche Products Pty Ltd, 28th October 2002;

4-10

Workplace Project Portfolio

Project Report II

Analysis and Interpretation

Table of Contents

1. PROJECT DESCRIPTION

1.1 BACKGROUND, RATIONALE FOR PROJECT

1.2 AIM OF THE PROJECT

1.3 DATA MANAGEMENT

2. MAIN ANALYSES OF THE CLINICAL TRIAL DATA

3. EXPANDED ANALYSES OF CLINICAL TRIAL DATA

3.1 DESCRIPTIVE STATISTICS

3.2 EXPLORATORY ANALYSIS OF THE OVERALL TUMOR RESPONSE RATE

3.3 EXPLORATORY ANALYSIS OF TIME TO PROGRESSION

4. REPORT SUMMARY

5. REFERENCES

1. Project description

This workplace project comprises the exploratory analyses of data from an open-label

randomized phase II study of HT and X in combination (treatment A), versus H

plus T (treatment B), in patients with advanced and/or metastatic breast cancers that

overexpress HER2. Overall, 222 patients were enrolled from January 2002 to

September 2005 and received study drugs.

The exploratory analyses conducted as part of this workplace project have been

designed to complement (and extend) the analyses initially conducted to address the

primary and secondary objectives of the study. These analyses focus on logistic

regression of overall response and Cox regression on time to disease progression

with different potential prognostic factors explored in the models.

1.1 Background, rationale for project

The primary analyses of the data on this clinical trial were conducted according to the

written requirements specification and in preparation for presenting the first efficacy

results at the European Society for Medical Oncology (ESMO) in September 2006.

The clinical cut-off date for the protocol-defined primary study analysis was 6 months

after the last patient was enrolled. The Statistical Analysis Plan for this study [1] also

suggests conducting expanded analysis in an exploratory manner. These analyses

were to be done after the primary analyses if indicated by the data, and as such

were deemed a good opportunity to apply the theoretical knowledge and skills I learnt

during the coursework in the field of multivariate analysis.

1.2 Aim of the project

After the analyses for the primary and secondary objectives of the clinical trial had

been completed, the aim of this project was to investigate the effects of various

possible baseline prognostic factors on the overall response rate and on the time to

disease progression (or tumor growth) [2].

1.3 Data Management

The data were managed by Roche Data Management in Basel, Switzerland. The

data were collected on CRFs, entered into a database and verified by a series of

validation checks for which all relevant queries were resolved on an ongoing basis.

All discrepancies were reviewed and any resulting queries resolved with the study

centre in question and amended on the database.

This workplace project is based on the same data that were collected and cleaned for

Project I of this Portfolio.

2. Main analyses of the clinical trial data

The primary and secondary efficacy endpoints of this clinical trial were the overall

response rate during the treatment period, time to disease progression and duration

of overall survival.

All efficacy analyses were done according to the intent-to-treat principle, i.e. as

randomized. All safety analyses related to adverse events and laboratory data were

done according to the treatment actually received. In this particular clinical trial these

two populations were in fact different (one patient randomized to A received

treatment B).

Primary efficacy endpoint

The protocol assumed a response rate of 50% in comparator arm B and 70%

in experimental arm A. The number of patients required to detect this 40% relative

increase in response rate was 220 (110 per arm). The calculation was based on a

chi-square test of no difference in response rate between treatment groups, with a

2-sided significance level of 5% and 80% power. The calculation allows for a 5%

dropout rate.
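The formula behind the figure of 110 patients per arm is not given here. As a rough cross-check only, the sketch below uses the usual normal-approximation sample size for comparing two proportions. The function name, the optional continuity correction and the way the dropout rate is applied are assumptions of this illustration, not the protocol's documented method.

```python
import math
from statistics import NormalDist


def n_per_arm(p_control, p_experimental, alpha=0.05, power=0.80,
              continuity=False, dropout=0.0):
    """Approximate patients per arm for a two-sided test of two proportions."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p_control + p_experimental) / 2
    delta = abs(p_experimental - p_control)
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p_control * (1 - p_control)
                                      + p_experimental * (1 - p_experimental)))
    n = numerator ** 2 / delta ** 2
    if continuity:
        # Continuity correction (an assumption of this sketch).
        n = n / 4 * (1 + math.sqrt(1 + 4 / (n * delta))) ** 2
    # Inflate for the expected dropout rate and round up to whole patients.
    return math.ceil(n / (1 - dropout))


plain = n_per_arm(0.50, 0.70)
corrected = n_per_arm(0.50, 0.70, continuity=True, dropout=0.05)
print(plain, corrected)
```

Under these assumptions the continuity-corrected, dropout-adjusted figure comes out close to the 110 patients per arm quoted above, while the uncorrected figure is noticeably smaller.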

Tumor response was assessed in terms of changes in the size of measurable

lesions.

The response levels were determined as defined by the RECIST criteria

outlined in more detail in Project I of this Portfolio. Where the response could not be

calculated, a non-response was assumed, following a conservative approach.

For each patient, the best response according to RECIST during the course of

the study was identified.

Best overall response (in later text referred to as overall response) for determining

tumor response rate for this study is defined as a confirmed complete response (CR)

or a confirmed partial response (PR) at any time point during the study.

The results of the primary efficacy analyses 6 months after the last patient was enrolled

showed a high response rate in both treatment regimens, as shown in the following

table.

Table 1. Tumor response rates

                                    Treatment group A (n=112)   Treatment group B (n=110)
Best overall response of CR or PR   79 (71%)                    80 (73%)
  Complete response                 20 (18%)                    16 (15%)
  Partial response                  59 (53%)                    64 (58%)
Stable disease                      28 (25%)                    18 (16%)
Progressive disease                 4 (4%)                      10 (9%)
Not evaluable                       1 (1%)                      2 (2%)

To investigate whether one treatment regimen was superior to the other, the chi-square

test was used; it yielded a statistically non-significant result (p=0.72).
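As an illustration, the test can be re-computed from the responder counts in Table 1. The sketch below uses the Pearson chi-square statistic without continuity correction, which is an assumption about how the reported p-value was obtained; the function name is hypothetical.

```python
import math


def chi_square_2x2(a, b, c, d):
    """Pearson chi-square (no continuity correction) for the table [[a, b], [c, d]]."""
    n = a + b + c + d
    stat = n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    # With 1 degree of freedom the upper tail equals erfc(sqrt(stat / 2)).
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value


# Responders and non-responders from Table 1: A 79 of 112, B 80 of 110.
stat, p_value = chi_square_2x2(79, 112 - 79, 80, 110 - 80)
print(round(stat, 3), round(p_value, 2))
```

The uncorrected statistic gives a p-value that rounds to the reported 0.72.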

Secondary efficacy endpoints

The analysis of time to progression and overall survival was based on the survivor

function, which is the probability of surviving or, more generally, staying event free

beyond a certain point in time. The time-to-event endpoints were summarized by

Kaplan-Meier plots.
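A minimal sketch of the Kaplan-Meier (product-limit) estimate behind such plots is given below. The follow-up times are hypothetical and are not trial data; the function name is an assumption of this illustration.

```python
def kaplan_meier(times, events):
    """Return [(time, survival)] at each distinct event time.

    times  : follow-up times
    events : 1 if the event was observed at that time, 0 if censored
    """
    data = sorted(zip(times, events))
    at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        deaths = 0
        leaving = 0
        # Gather everyone whose follow-up ends at time t.
        while i < len(data) and data[i][0] == t:
            deaths += data[i][1]
            leaving += 1
            i += 1
        if deaths:
            # Multiply by the conditional probability of surviving past t.
            survival *= 1 - deaths / at_risk
            curve.append((t, survival))
        at_risk -= leaving
    return curve


# Hypothetical follow-up times in months (not trial data); 0 marks censoring.
curve = kaplan_meier([2, 3, 3, 5, 8], [1, 1, 0, 1, 0])
print(curve)
```

Censored patients contribute to the number at risk up to their censoring time but never cause a step in the curve.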

To test the null hypothesis that there is no difference between the treatment groups

in the probability of an event at any time point, the logrank test was used. This test is

most likely to detect a difference between the treatment groups when the risk of an

event is consistently greater for one group than another [4]. The logrank test is purely

a hypothesis test. It cannot provide an estimate of the size of the difference between

the groups or a confidence interval.
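The logrank statistic compares the observed number of events in one group with the number expected under the null hypothesis, summed over all event times. The sketch below is a plain two-group implementation on hypothetical data; the function name and the toy follow-up times are assumptions and do not reproduce the trial analysis.

```python
import math


def logrank_test(times_a, events_a, times_b, events_b):
    """Two-group logrank test; returns (chi-square statistic, p-value)."""
    subjects = ([(t, e, 0) for t, e in zip(times_a, events_a)]
                + [(t, e, 1) for t, e in zip(times_b, events_b)])
    event_times = sorted({t for t, e, _ in subjects if e})
    observed_a = expected_a = variance = 0.0
    for t in event_times:
        n_a = sum(1 for s, _, g in subjects if s >= t and g == 0)
        n_b = sum(1 for s, _, g in subjects if s >= t and g == 1)
        d_a = sum(1 for s, e, g in subjects if s == t and e and g == 0)
        d_b = sum(1 for s, e, g in subjects if s == t and e and g == 1)
        n, d = n_a + n_b, d_a + d_b
        observed_a += d_a
        expected_a += d * n_a / n
        if n > 1:
            # Hypergeometric variance of the group A event count at time t.
            variance += d * (n_a / n) * (n_b / n) * (n - d) / (n - 1)
    if variance == 0:
        return 0.0, 1.0
    stat = (observed_a - expected_a) ** 2 / variance
    # Upper tail of a chi-square distribution with 1 degree of freedom.
    return stat, math.erfc(math.sqrt(stat / 2))


# Hypothetical data: identical groups, then clearly separated groups.
same = logrank_test([1, 2, 3], [1, 1, 1], [1, 2, 3], [1, 1, 1])
apart = logrank_test([10, 12, 14, 16, 18, 20], [1] * 6,
                     [1, 2, 3, 4, 5, 6], [1] * 6)
print(same, apart)
```

As the text notes, the output is a test statistic and p-value only; it carries no estimate of the size of the difference.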

Time to progression was measured from the time of treatment commencement to

the time of disease progression. This was done in other studies with the same

compound and the same approach was adopted here in order that the results be

comparable. Patients who had not progressed at the time of study completion

(including patients who had died before progressive disease) or who were lost to

follow-up were censored at the most recent of the last tumor assessment or last drug

intake.
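The censoring rule in the paragraph above can be sketched as a small derivation function. The argument names and the days-to-months conversion are hypothetical and are not taken from the study datasets or programs.

```python
from datetime import date

DAYS_PER_MONTH = 365.25 / 12  # assumed conversion, not taken from the report


def time_to_progression(start, progression, last_assessment, last_drug_intake):
    """Return (months, event) where event is 1 for progression, 0 if censored."""
    if progression is not None:
        end, event = progression, 1
    else:
        # Censor at the most recent of last tumor assessment and last drug intake.
        end, event = max(last_assessment, last_drug_intake), 0
    return (end - start).days / DAYS_PER_MONTH, event


# Hypothetical patients (not trial data).
censored = time_to_progression(date(2004, 1, 1), None,
                               date(2004, 7, 1), date(2004, 6, 15))
progressed = time_to_progression(date(2004, 1, 1), date(2004, 3, 1),
                                 date(2004, 3, 1), date(2004, 2, 20))
print(censored, progressed)
```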

From the following Kaplan-Meier plot and the logrank test of the equality of

the survivor functions we can see that treatment A showed a longer time to progression

than treatment B, a difference that reached statistical significance (p=0.045).

Table 2. Summary of the Cox regression for time to progression

Events in groups A and B Hazard Ratio 95% CI p-value

A : 55 B : 68 0.70 0.49, 1.00 0.047

The above results show that patients receiving treatment A have 70% of the risk of

disease progression of those receiving treatment B. The difference

is marginally significant.

Figure 1. Kaplan-Meier curve of time to progression (estimated probability against analysis time in months, 0 to 50, for group A and group B)

Overall survival was measured as the time from start of treatment to the date of

death, irrespective of the cause of death. Patients for whom no death was captured

on the clinical database were censored at the most recent date they were known to

be alive, either date of last tumor assessment, last drug intake or last follow-up

information.

Table 3. Summary of the Cox regression for overall survival (time to death)

Events in groups A and B Hazard Ratio 95% CI p-value

A : 25 B : 30 0.82 0.48, 1.39 0.47

The confidence interval of the hazard ratio for overall survival includes the value

1. Therefore the estimate that patients in group A have 82% of the risk of dying

at any time point compared with group B is not statistically significant.
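The link between the confidence interval and the p-value can be illustrated with the figures in Table 3. A Wald interval for a hazard ratio is symmetric on the log scale, so the standard error, z statistic and two-sided p-value can be approximately recovered from the published estimate and limits. The function name is hypothetical, and the sketch assumes the interval is a Wald interval.

```python
import math


def wald_p_from_ci(estimate, lower, upper, z_crit=1.959964):
    """Recover the two-sided Wald p-value from a ratio estimate and its 95% CI."""
    # On the log scale the interval is estimate +/- z_crit * standard error.
    se = (math.log(upper) - math.log(lower)) / (2 * z_crit)
    z = math.log(estimate) / se
    return math.erfc(abs(z) / math.sqrt(2))


# Overall survival, Table 3: hazard ratio 0.82 with 95% CI 0.48 to 1.39.
p_value = wald_p_from_ci(0.82, 0.48, 1.39)
print(p_value)
```

The recovered value is close to the reported p=0.47; small differences are expected because the inputs are rounded to two decimals.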

Figure 2. Kaplan-Meier curve of overall survival (estimated probability against analysis time in months, 0 to 50, for group A and group B)

With deaths observed in only 25% of patients for the overall survival analysis, it

is too early to draw conclusions. Follow-up is ongoing and survival data will be

collected until 18 months after the last patient enrolled.

3. Expanded analyses of clinical trial data

The analysis population for the expanded analysis was the intent-to-treat population,

which included all patients who were randomized and received study medication at

least once. Groups are defined according to the study arm patients were randomized

to.

The expanded analysis of the clinical trial data that was the focus of this project

consisted of two parts. The work done and the interpretation of results for each of

these parts are detailed in the following subsections.

3.1 Descriptive statistics

The covariates chosen for the secondary analysis of data are fixed covariates known

at baseline or entry to the study.

The following frequency table shows the distribution of the explanatory variables of

interest. The variables listed in the table, such as age, number of target tumor sites and

Eastern Cooperative Oncology Group (ECOG) performance status at baseline, were

derived from the collected data and categorised for the purpose of this analysis.

Table 4. Summary statistics of categorical variables

Covariates                              Category   Treatment group A (n=112)   Treatment group B (n=110)
Age
  <=50                                  1          46 (21%)                    50 (23%)
  >50                                   2          66 (30%)                    60 (27%)
Number of target tumor sites
  1                                     1          83 (37%)                    84 (38%)
  >=2                                   2          29 (13%)                    26 (12%)
Adjuvant Anthracycline Therapy
  no                                    1          64 (29%)                    61 (27%)
  yes                                   2          48 (22%)                    49 (22%)
Liver metastases
  no                                    0          72 (32%)                    69 (31%)
  yes                                   1          40 (18%)                    41 (18%)
Lung metastases
  no                                    0          71 (32%)                    68 (31%)
  yes                                   1          41 (18%)                    42 (19%)
ECOG performance status
  Fully active                          0          69 (31%)                    71 (32%)
  Restricted in carrying out activity   1          43 (19%)                    39 (18%)
Progesterone status
  negative                              0          61 (31%)                    65 (33%)
  positive                              1          37 (19%)                    32 (16%)
Disease status
  locally advanced                      0          16 (7%)                     14 (6%)
  metastatic                            1          96 (43%)                    96 (43%)

3.2 Exploratory analysis of the overall tumor response rate

With the aim of providing a prognostic model for the exploratory analysis of the

overall tumor response, logistic regression was used.

The logistic regression model is based on the logit link function and is fitted to binary or

ordinal response data by the method of maximum likelihood.

The overall response is the indicator variable with a value of 1 for a complete or

partial overall response, and 0 otherwise. The probability modelled here is that of

having an overall response. The model included nine variables (including treatment)

as factors thought to be related to the overall tumor response. These variables

are listed in Table 4 above. In developing the logistic regression model, a

logistic regression was first performed for each covariate separately, adjusting only for the

possible treatment difference. The results of these regressions are shown in the

following table.

Table 5. Maximum likelihood estimates for individual parameters adjusted for

treatment

Covariates                       df   Wald test statistic   p>Chi-square
Age                              1    4.61                  0.032
Number of target tumor sites     1    2.24                  0.13
Adjuvant Anthracycline Therapy   1    1.81                  0.18
Liver metastases                 1    2.34                  0.13
Lung metastases                  1    1.88                  0.17
ECOG performance status          1    2.09                  0.15
Progesterone status              1    4.29                  0.038
Disease status                   1    1.14                  0.29

Subsequently, in identifying the prognostic factors for the overall tumor response, the

stepwise multivariate logistic regression was used [3]. A significance level of 0.2 was

set to allow a variable into the model, and a significance level of 0.2 was set for a

variable to stay in the model. I chose these variable entry and removal levels to be

relatively large so that the selection of covariates into the model is not bound too

much by the usual 0.05 level of statistical significance.

The backward stepwise logistic regression procedure automates the removal of

variables one at a time, based on the smallest change in the likelihood ratio test statistic when

refitting the model with one fewer variable. In other words, in each iteration the

variable whose presence contributes least to the model is

removed.

Setting 0.2 as both the entry and the removal significance level makes forward selection and backward

elimination of variables essentially equivalent in this case. In the following section I

chose the forward stepwise logistic regression as a convenient way to summarise the

results.
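The entry and removal rule used in this section can be sketched generically as follows. This is not the SAS procedure itself: the function name is hypothetical, model fitting is delegated to a user-supplied `p_values` callable, and the toy p-values are held fixed regardless of which other variables are in the model, which would not be true of a real logistic regression.

```python
def stepwise_select(candidates, p_values, entry=0.2, stay=0.2, max_steps=100):
    """Generic stepwise selection driven by a user-supplied p-value function.

    p_values(model) must return {variable: p-value} for every variable in
    `model`, obtained by fitting that model (the fitting itself is not shown).
    """
    selected = []
    for _ in range(max_steps):
        # Removal step: drop the least significant variable if it fails `stay`.
        if selected:
            current = p_values(selected)
            worst = max(selected, key=lambda v: current[v])
            if current[worst] > stay:
                selected.remove(worst)
                continue
        # Entry step: add the most significant remaining variable below `entry`.
        best, best_p = None, entry
        for v in candidates:
            if v in selected:
                continue
            p = p_values(selected + [v])[v]
            if p < best_p:
                best, best_p = v, p
        if best is None:
            break
        selected.append(best)
    return selected


# Toy example with fixed, hypothetical p-values (not study results).
fixed = {"x1": 0.03, "x2": 0.15, "x3": 0.45}
chosen = stepwise_select(list(fixed), lambda model: {v: fixed[v] for v in model})
print(chosen)
```

The `max_steps` guard is a design choice of the sketch to rule out endless add-and-remove cycles.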

Summary of stepwise logistic regression

In forward stepwise selection, an attempt is made to remove any insignificant

variable from the model before adding the next significant variable to the model

according to the set significance levels. After fitting the intercept-only model, the

intermediate model with the intercept term and the progesterone status variable is

fitted. The progesterone status remains significant (p=0.043 < 0.2) and is not

removed. Selection of potential variables continues the same way until none of the

remaining variables outside the model meets the entry criterion, and the stepwise

selection is terminated. The following table displays the results of the stepwise

selection.

Table 6. Results of the analysis of maximum likelihood estimates

Effect df Wald Chi-square p>Chi-square

Age 1 3.53 0.060

Number of target tumor sites 1 4.09 0.043

Adjuvant Anthracycline Therapy 1 1.96 0.16

ECOG performance status 1 2.51 0.11

Progesterone status 1 2.60 0.11

Disease status 1 4.82 0.028

The likelihood ratio test of the joint significance of the explanatory

variables has a chi-square statistic of 17.67 with 6 df, yielding a statistically

significant result (p=0.0071).

For the evaluation of how well the model represents the data, the Hosmer and

Lemeshow goodness-of-fit test produced a value of 5.71 with df=8, indicating no

evidence of a poor fit (p=0.68).
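The p-values quoted for the likelihood ratio and Hosmer and Lemeshow statistics are upper tail probabilities of a chi-square distribution. As a sketch, they can be re-computed with the closed-form tail formulas for integer degrees of freedom; the function name `chi2_sf` is an assumption of this illustration.

```python
import math


def chi2_sf(x, df):
    """Upper tail probability P(X > x) for a chi-square variable with integer df."""
    if x <= 0:
        return 1.0
    half = x / 2.0
    if df % 2 == 0:
        # Even df: exp(-x/2) times a finite sum of (x/2)^i / i!.
        term, total = 1.0, 1.0
        for i in range(1, df // 2):
            term *= half / i
            total += term
        return math.exp(-half) * total
    # Odd df: normal tail plus a finite correction sum.
    root = math.sqrt(x)
    total = 0.0
    term = root
    for i in range(1, (df - 1) // 2 + 1):
        total += term
        term *= x / (2 * i + 1)
    return (math.erfc(root / math.sqrt(2))
            + math.sqrt(2 / math.pi) * math.exp(-half) * total)


print(chi2_sf(17.67, 6))  # likelihood ratio test, 6 df
print(chi2_sf(5.71, 8))   # Hosmer and Lemeshow test, 8 df
```

Both values agree with the reported p=0.0071 and p=0.68 after rounding.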

I fitted regression models with different combinations of potential factors and interaction

terms to allow for effect modification, but the best fit was

obtained by the model presented above. My conclusion was based on the goodness-of-fit

test and the smaller Akaike Information Criterion (AIC). AIC is a function of the log-likelihood

and the number of parameters in the model, and it can be used to compare

different models.

For the above analysis 27 observations were deleted from the analysis dataset due

to missing values for the progesterone status variable. The disposition of the

progesterone status levels including missing values relative to the overall response

variable is illustrated in the following table.

Table 7. Disposition of Progesterone status by Overall Response

                 Progesterone status
                 negative   positive   missing
responder        85         56         18
non-responder    41         13         9
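For orientation, a crude (unadjusted) odds ratio for response can be computed directly from the non-missing counts in Table 7. The sketch below uses the standard log odds ratio standard error for a 2x2 table; the function name is hypothetical, and the result is not expected to match the adjusted estimate in Table 8 because no other covariates are taken into account.

```python
import math


def odds_ratio_2x2(resp_low, nonresp_low, resp_high, nonresp_high, z_crit=1.959964):
    """Crude odds ratio (lower vs higher category) with an approximate 95% CI."""
    odds_ratio = (resp_low / nonresp_low) / (resp_high / nonresp_high)
    # Standard error of the log odds ratio from the four cell counts.
    se_log = math.sqrt(1 / resp_low + 1 / nonresp_low
                       + 1 / resp_high + 1 / nonresp_high)
    lower = math.exp(math.log(odds_ratio) - z_crit * se_log)
    upper = math.exp(math.log(odds_ratio) + z_crit * se_log)
    return odds_ratio, lower, upper


# Table 7 counts: negative 85 responders / 41 non-responders,
# positive 56 responders / 13 non-responders (missing values left out).
crude_or, crude_lower, crude_upper = odds_ratio_2x2(85, 41, 56, 13)
print(crude_or, crude_lower, crude_upper)
```

An odds ratio below 1 here means that patients with negative progesterone status have lower odds of responding than those with positive status.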

Interpretation of results

Although the main analysis of the clinical trial data for the Overall tumor response did

not provide evidence of a difference in Overall tumor response between the two

treatment groups, exploratory analysis was conducted in order to look at possible

predictors of the Overall tumor response.

Of all the variables included in the model, age, number of target tumor sites and

disease status at baseline were significant at alpha=0.1 level, as shown in Table 6.

Table 8 shows the odds ratio estimates for the baseline predictors of the Overall

tumor response. We can see that the 95% confidence intervals for the odds ratios

for number of target tumor sites and disease status at baseline do not include 1, indicating

that these odds ratios are statistically significant at the 0.05 level. For two other

factors, progesterone status and especially age category, the

confidence interval only narrowly includes 1.

Table 8. Odds ratio estimates for the predictor variables

Effect                           Lower category     Higher category          Odds ratio*   95% Wald lower CI   95% Wald upper CI
Age                              <=50               >50                      1.95          0.97                3.91
Number of target tumor sites     1                  >=2                      2.18          1.02                4.66
Adjuvant Anthracycline Therapy   no                 yes                      1.64          0.82                3.27
ECOG performance status          fully active       restricted in activity   1.75          0.88                3.50
Progesterone status              negative           positive                 0.54          0.26                1.14
Disease status                   locally advanced   metastatic               0.34          0.13                0.89

* Odds ratios >1 mean that patients in the lower category have a greater chance of responding than those in the higher category

This exploratory analysis shows a trend, backed by the odds ratio point

estimates, that women aged 50 or under who have metastatic breast cancer with

only 1 target tumor site at baseline assessment and positive progesterone status

have a better prognosis for overall tumor response.

One could ask (as I did in questioning whether the above

interpretation is sensible in a clinical context): why might women with

a positive progesterone receptor status respond better to the cancer treatment than

those with a negative hormone receptor status? Usually negative test results mean

something good, but in this case the hormone receptor assay at baseline shows

that if the receptors are present on the surface of the breast cancer cells, then the

cancer is likely to respond better to this particular type of therapy [5].

3.3 Exploratory analysis of time to progression

The Cox regression model, the most commonly used multivariate approach for

analyzing survival time data in clinical research, was used for the expanded analysis

of the secondary endpoint in this clinical trial.

The Cox regression model describes the relationship between the risk of an event, in

this case disease progression (the event defining time to progression, TTP), and a set of covariates. It provides an estimate

of the hazard ratio and its confidence interval.

The assumption of the proportional hazards model is that the hazard of the event of

interest in one treatment group is a constant multiple of the hazard in the comparator

group. In this case the hazard ratio indicates the relative likelihood of progression

between the two treatment groups at any given point in time.

Checking that a given model is an appropriate representation of the data is an

important step in the analysis [6]. There are various methods for verifying the

assumption of the proportional hazards (PH) model [7]. I used a graphical method

that plots the logarithm of the estimated cumulative hazard function for each

treatment group against the survival time.

The log-log plot is presented in Figure 3. The curves do not cross and the separation

between the two curves (one for each treatment group) is fairly constant with not

much deviation. This suggests that the PH assumption is reasonable for these data.
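The reasoning behind this graphical check is that under proportional hazards the survivor functions satisfy S_A(t) = S_B(t)^HR, so the log(-log) transformed curves differ by the constant log(HR). The sketch below demonstrates this with hypothetical survival probabilities (not trial data) and an assumed hazard ratio.

```python
import math


def log_minus_log(survival):
    """Complementary log-log transform log(-log(S)) of a survival probability."""
    return math.log(-math.log(survival))


# Hypothetical survival probabilities for one group (not trial data), and a
# second group constructed to satisfy proportional hazards with ratio 0.70.
hazard_ratio = 0.70
group_b = [0.95, 0.80, 0.60, 0.40, 0.20]
group_a = [s ** hazard_ratio for s in group_b]

gaps = [log_minus_log(a) - log_minus_log(b) for a, b in zip(group_a, group_b)]
print([round(g, 4) for g in gaps])
```

Every gap equals log(0.70), which is why roughly parallel curves are read as support for the PH assumption.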

Figure 3. Log(-Log(Survival)) curves for TTP

The aim of the analysis is to investigate a collection of factors of known relevance for

their ability to predict time to progression. The covariates considered for the analysis

are the same as for the primary efficacy endpoint described in section 3.1 of this

Report (see Table 4).

The strategy used was to model all covariates initially, and then

to fit a reduced model, provided the predictive ability of the model was not

compromised.

Table 9 contains the individual logrank test results for the variables considered for

the model. Each factor is assessed through separate univariate Cox regression. A p-value

of less than 0.05 indicates that time to progression is associated with the

individual covariate.

According to the logrank test for equality of survivor functions, the variables that are

associated with shorter time to progression are the following baseline assessments:

number of target tumor sites, prior anthracycline therapy for breast cancer, liver

metastases, lung metastases, ECOG performance status and disease status.

Table 9. Logrank test results for the potential predictor factors

Covariates df p>Chi-square

Age 1 0.39

Number of target tumor sites 1 0.017

Adjuvant Anthracycline Therapy 1 0.0055

Liver metastases 1 0.031

Lung metastases 1 0.0015

ECOG performance status 1 0.0002

Progesterone status 1 0.55

Disease status 1 0.0006

After fitting the initial full model with all the covariates included the following results

were obtained:

Table 10. Initial Cox regression results

Factor                           Lower category     Higher category          Hazard ratio*   p>|z|   Lower CI   Upper CI
Treatment                        A                  B                        1.19            0.37    0.81       1.75
Age                              <=50               >50                      1.46            0.068   0.97       2.19
Number of tumor sites            1                  >=2                      1.04            0.88    0.64       1.70
Adjuvant Anthracycline Therapy   no                 yes                      1.55            0.029   1.05       2.29
Liver metastases                 no                 yes                      1.17            0.45    0.78       1.77
Lung metastases                  no                 yes                      1.19            0.43    0.77       1.84
ECOG performance status          fully active       restricted in activity   2.02            0.001   1.36       3.01
Progesterone status              negative           positive                 1.22            0.33    0.82       1.83
Disease status                   locally advanced   metastatic               2.86            0.030   1.11       7.40

* Hazard ratios >1 mean that patients in the lower category have a reduced risk of progression compared with those in the higher category

According to the partial likelihood ratio test for the overall significance (G=36.66,

df=9, p<0.0001), we reject the null hypothesis, and conclude that overall the model is

significant. This means that one or more covariates in this model are significant

predictors of time to progression.

Looking at the individual Wald tests given in the output, the categorical variables age,

prior adjuvant anthracycline therapy, baseline ECOG performance status and

disease status are the ones contributing to the model at a 0.1 significance level.

I then fitted the reduced model, and the test for the significance of the variables

removed from the model (G=3.53, df=5, p=0.62) yields the conclusion that number of

target sites, liver or lung metastases and progesterone status are not significant

predictors of time to progression.

Interpretation of results

The factors: prior anthracycline therapy for breast cancer, ECOG performance status

and disease status at baseline are all significant determinants of time to progression.

The following table presents the hazard ratios and confidence intervals of the

factors contributing to the reduced model.

Table 11. Reduced model Cox regression results

Factor                           Lower category     Higher category          Hazard ratio*   p>|z|   Lower CI   Upper CI
Age                              <=50               >50                      1.36            0.10    0.94       1.96
Adjuvant Anthracycline Therapy   no                 yes                      1.53            0.022   1.06       2.20
ECOG performance status          fully active       restricted in activity   1.82            0.001   1.26       2.62
Disease status                   locally advanced   metastatic               2.69            0.013   1.23       5.88

* Hazard ratios >1 mean that patients in the lower category have a reduced risk of progression compared with those in the higher category

The hazard ratio derived from the Cox model must be interpreted with caution. It

does not translate directly into information about the duration of time until disease

progression. It may be used for statistical hypothesis testing and as an

indication of the amount of benefit (e.g. a decrease in the hazard of progression),

but other measures must also be applied to understand the full importance of the

study.

Median time to progression is also a useful parameter for interpreting effects [8]. The

following table lists the median time to progression in months for the prognostic

factors.

Table 12. Median time to disease progression

Factor Lower category* Higher category* Median ratio

Age 15.2 12.9 1.18

Adjuvant Anthracycline Therapy 18.6 11.6 1.60

ECOG performance status 17.3 10.6 1.63

Disease status 38.4 12.9 2.98

* Categories of covariates are listed in Table 4.

Though the age category variable is not a statistically significant predictor, from the

above table we can see that women aged 50 or under progress later, with

median time to progression 15.2 months for younger and 12.9 months for older

women.

Women who did not have prior anthracycline therapy for breast cancer have longer

median time to progression: the median duration for women in this category is 18.6

months which is significantly different from 11.6 months for women having prior

anthracycline therapy.

ECOG performance status is the strongest predictor in the model

according to the Cox regression: the median time to progression is 17.3 months for

women with performance status 0 and 10.6 months for women with performance status 1 or

more.

Disease status has the largest hazard ratio according to the Cox regression results

as reported in Table 11, but with a fairly wide confidence interval. The median time to

progression for the locally advanced cancer patients at baseline assessment is 38.4

months compared to 12.9 months for the women with a metastatic breast cancer at

the start of the clinical trial.

As can be seen from comparing the hazard ratio estimates in Table 11 with the

median ratio estimates in Table 12, the hazard ratio estimates for our data are

consistent with median ratios, and hence they are a reliable measure of the

association of the prognostic factors with the time to disease progression.
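The comparison can be made explicit by computing the median ratios from Table 12 and setting them beside the hazard ratios from Table 11. Under an exponential model the ratio of medians equals the ratio of hazards (higher versus lower category), so this is only a rough consistency check rather than a formal test. The dictionary names are hypothetical.

```python
# Medians (months) from Table 12 and hazard ratios from Table 11.
medians = {
    "Age": (15.2, 12.9),
    "Adjuvant Anthracycline Therapy": (18.6, 11.6),
    "ECOG performance status": (17.3, 10.6),
    "Disease status": (38.4, 12.9),
}
hazard_ratios = {
    "Age": 1.36,
    "Adjuvant Anthracycline Therapy": 1.53,
    "ECOG performance status": 1.82,
    "Disease status": 2.69,
}

# Ratio of the lower-category median to the higher-category median.
median_ratios = {name: round(low / high, 2) for name, (low, high) in medians.items()}
for name, ratio in median_ratios.items():
    print(f"{name}: median ratio {ratio}, hazard ratio {hazard_ratios[name]}")
```

The computed ratios reproduce the last column of Table 12, and each points in the same direction as the corresponding hazard ratio.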

The Accelerated failure time (AFT) model is an alternative to the PH model for the

analysis of time-to-event data.

As opposed to the PH model, which expresses treatment differences in terms of the

risk of the event of interest at any given time, the AFT model expresses treatment

differences in terms of a direct effect on time. In other words, in the AFT approach the

survival probability in group 1 at time t is equal to the survival probability in group 2 at

some multiple of time.

To assess whether the AFT model is suitable for the data we can plot the percentiles

of the KM estimated survivor function from one treatment group against the other. If

the model is appropriate, this would approximate to a straight line through the origin.
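The idea behind this check can be illustrated with a toy example: if event times in one group are a constant multiple of those in the other, every percentile is scaled by the same factor, so the percentile pairs lie on a straight line through the origin. The exponential distributions, medians and acceleration factor below are hypothetical choices for illustration, not estimates from the trial.

```python
import math


def exponential_quantile(p, median):
    """Time by which a fraction p has had the event, for an exponential model."""
    rate = math.log(2) / median
    return -math.log(1 - p) / rate


# Hypothetical medians (months) chosen for illustration only.
acceleration = 1.5
median_b = 12.0
median_a = acceleration * median_b

probs = [0.1, 0.25, 0.5, 0.75]
pairs = [(exponential_quantile(p, median_b), exponential_quantile(p, median_a))
         for p in probs]
# Slope of the line from the origin through each percentile pair.
slopes = [qa / qb for qb, qa in pairs]
print(slopes)
```

All slopes equal the acceleration factor, which is the pattern a Q-Q plot would show when the AFT model holds.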

According to the regression results, the slope of the fitted straight line is 1.57,

providing an unadjusted estimate of the multiplicative factor of 0.97 and indicating that

the probability of remaining progression free for a patient in treatment group A at time t is equal

to the corresponding probability in group B at time 0.97 t.

However, since the Quantile-Quantile (QQ) plot in Figure 4 does not approximate

well enough to a straight line through the origin, the AFT model might not be suitable for

these data, and hence the results obtained might not provide a reliable estimate of

treatment differences. For this reason, and also because of time constraints, I did not

explore this approach further during my project work.

Figure 4. Quantile-Quantile plot for TTP

The accelerated failure time model is particularly useful when the event rate is

high, and as such might be reconsidered for the next reporting event at the

end of the follow-up period.

4. Report summary

Although the clinical trial did not show a statistically significant difference

in overall tumor response in favor of the experimental treatment regimen, it

showed a high (above 70%) overall tumor response rate in both treatment arms.

Time to progression, measured from the start of treatment to the time of disease

progression as defined by the RECIST criteria, was shown to be significantly longer in

the experimental treatment arm.

Further analyses investigated the effects of various possible baseline prognostic

factors on the overall response and on time to disease progression.

The logistic regression of overall response on several baseline characteristics showed that younger women with a smaller number of target tumor sites at baseline have a better chance of responding to treatment. It also showed that women categorized as having metastatic breast cancer at the start of the study have a better overall response than women with locally advanced breast cancer. This latter finding is rather unexpected and raises further questions about the response criteria defined by RECIST.

The proportional hazards model applied to the analysis of time to progression showed an association between shorter time to progression and age under 50, no prior adjuvant anthracycline therapy, fully active ECOG performance status and locally advanced breast cancer at baseline.

This randomized clinical trial will continue to collect follow-up data on the same study population, and it will be interesting to see the overall survival results in order to draw further conclusions.


5. References

1. Statistical Analysis Plan for protocol MO16419, Roche Products Pty Ltd, 18th April 2006; 16-17

2. Clinical Study Protocol, Protocol number MO16419D, Roche Products Pty Ltd, 26th April 2005; 75

3. SAS/STAT User’s Guide Version 8, Cary, NC: SAS Institute Inc., 1999, Volume 2; 1975-1988

4. Bland JM, Altman DG, The logrank test, BMJ 2004; 328(7447): 1073

5. Are hormone receptors present?, breastcancer.org, http://www.breastcancer.org/dia_pict_hormone.html

6. Bradburn MJ, Clark TG, Love SB, Altman DG, Survival Analysis Part III: Multivariate data analysis - choosing a model and assessing its adequacy and fit, British Journal of Cancer 2003; 89: 605-611

7. Patel K, Kay R, Rowell L, Comparing proportional hazards and accelerated failure time models: an application in influenza, Pharmaceutical Statistics 2006; 5: 213-224

8. Spruance SL, Reid JE, Grace M, Samore M, Hazard Ratio in Clinical Trials, Antimicrobial Agents and Chemotherapy, Aug 2004; 2787-2792

Student: Etel Mikes ID: 0155584 19 WPP – Project Report II

You might also like

- Expert Judgment in Project Management: Narrowing the Theory-Practice GapFrom EverandExpert Judgment in Project Management: Narrowing the Theory-Practice GapNo ratings yet

- Major Oroject On Management Information SystemDocument48 pagesMajor Oroject On Management Information SystemAbhimanyuGulatiNo ratings yet

- Clinical Practicum PaperDocument26 pagesClinical Practicum Paperncory79100% (1)

- THS 102 - Module 1 - Purpose of ResearchDocument6 pagesTHS 102 - Module 1 - Purpose of ResearchKabagis TVNo ratings yet

- FP MD 7100-SyllabusDocument5 pagesFP MD 7100-SyllabusyoungatheistNo ratings yet

- 0684 Autoimune DiseasesDocument43 pages0684 Autoimune Diseasesjs14camaraNo ratings yet

- SP ThesisDocument4 pagesSP Thesislyjtpnxff100% (2)

- Writing Research Protocol - Formulation of Health Research Protocol - A Step by Step DescriptionDocument6 pagesWriting Research Protocol - Formulation of Health Research Protocol - A Step by Step DescriptionCelso Gonçalves100% (1)

- Analytical PerspectiveDocument26 pagesAnalytical PerspectiveKarl Patrick SiegaNo ratings yet

- Local Media9155218125481198705Document60 pagesLocal Media9155218125481198705Erah Kim GomezNo ratings yet

- Master Thesis Pilot StudyDocument5 pagesMaster Thesis Pilot Studyjacquelinedonovanevansville100% (2)

- Writing Chapter 4 Research PaperDocument6 pagesWriting Chapter 4 Research Paperaflbrtdar100% (1)

- PRint FinalDocument50 pagesPRint FinalSwapnil Sontakke JainNo ratings yet

- #Design and Implementation of Computerized Medical Duties Scheduling System - Inimax HubDocument77 pages#Design and Implementation of Computerized Medical Duties Scheduling System - Inimax HubclarusNo ratings yet

- Kannur Digree Syllabus and Scheme For BioinformaticsDocument35 pagesKannur Digree Syllabus and Scheme For BioinformaticsJinu MadhavanNo ratings yet

- L01 IntroductionDocument12 pagesL01 Introductionlolpoll771No ratings yet

- Writing Chapter 4 DissertationDocument4 pagesWriting Chapter 4 DissertationCanSomeoneWriteMyPaperForMeSingapore100% (1)

- Sustainability in Project Management:: Eight Principles in PracticeDocument110 pagesSustainability in Project Management:: Eight Principles in PracticeGreyce MaasNo ratings yet

- Food Chemistry Research Plan GuideDocument3 pagesFood Chemistry Research Plan GuideSamantha WNo ratings yet

- PROPOSAL - SSL101cDocument7 pagesPROPOSAL - SSL101cphuclacs180358No ratings yet

- Sample Dmin ThesisDocument4 pagesSample Dmin Thesisafcnenabv100% (2)

- Bioe 4640Document4 pagesBioe 4640REXTERYXNo ratings yet

- Research Methods in Management - 12Document14 pagesResearch Methods in Management - 12Trairong SwatdikunNo ratings yet

- Module 1 CAP101Document7 pagesModule 1 CAP101cambaliza22No ratings yet

- Ndejje Univeristy Student Health Record System PDFDocument44 pagesNdejje Univeristy Student Health Record System PDFManyok Chol DavidNo ratings yet

- Prediction of Project Performance-FluorDocument159 pagesPrediction of Project Performance-FluorCüneyt Gökhan Tosun100% (4)

- A Study on the Challenges in UAE Healthcare Organization ةسارد لوح تاراملإاب ةيحصلا ةياعرلا تاسسؤمب عيراشملا ةرادإ تايدحتDocument56 pagesA Study on the Challenges in UAE Healthcare Organization ةسارد لوح تاراملإاب ةيحصلا ةياعرلا تاسسؤمب عيراشملا ةرادإ تايدحتARINDAM GHOSHNo ratings yet

- CASE STUDY PreziDocument16 pagesCASE STUDY PreziTrisha Mae TrinidadNo ratings yet

- Completing the CU Ethics Approval Checklist for EHR Implementation ProjectDocument5 pagesCompleting the CU Ethics Approval Checklist for EHR Implementation ProjectNishNo ratings yet

- Lesson 1 - Revisiting The Research ProposalDocument8 pagesLesson 1 - Revisiting The Research Proposalangel annNo ratings yet

- Dissertation ThemenwahlDocument4 pagesDissertation ThemenwahlCollegePaperWritingServicesLittleRock100% (1)

- d3d671f1 Fda2 4baa 946e 5c777d1903d2 Brochure Startfolder August 2015Document41 pagesd3d671f1 Fda2 4baa 946e 5c777d1903d2 Brochure Startfolder August 2015Zia KangNo ratings yet

- ICT583 Unit Guide 2022Document18 pagesICT583 Unit Guide 2022Irshad BasheerNo ratings yet

- GTS 368 Study Guide 2021Document36 pagesGTS 368 Study Guide 2021TylerNo ratings yet

- PHD Thesis Results SectionDocument6 pagesPHD Thesis Results SectionCrystal Sanchez100% (2)

- Research Paper Chapter 4 ExampleDocument8 pagesResearch Paper Chapter 4 Exampleaflefvsva100% (1)

- Syllabus Therm Proj EngDocument9 pagesSyllabus Therm Proj EngRiya guptaNo ratings yet

- Biomedical Science Dissertation StructureDocument4 pagesBiomedical Science Dissertation StructureWriteMyPaperForMeCheapAlbuquerque100% (1)

- EML3301L Syllabus and Course OverviewDocument5 pagesEML3301L Syllabus and Course OverviewangelNo ratings yet

- Project Work BioetchnologyDocument10 pagesProject Work BioetchnologyYachna YachnaNo ratings yet

- PHD Thesis Q MethodologyDocument4 pagesPHD Thesis Q MethodologyHelpWithWritingAPaperSiouxFalls100% (1)

- NURS 5192 SyllabusDocument8 pagesNURS 5192 SyllabusdawnNo ratings yet

- Hosptalmanagementsystem 200902070112Document28 pagesHosptalmanagementsystem 200902070112sk khanNo ratings yet

- ResearchDocument10 pagesResearchUtsav BaralNo ratings yet

- Computer Science Dissertation MethodologyDocument7 pagesComputer Science Dissertation MethodologyWriteMyPaperApaStyleLasVegas100% (1)

- Sample Thesis Chapter 3 For Information TechnologyDocument4 pagesSample Thesis Chapter 3 For Information Technologystephanieclarkolathe100% (2)

- The Research Problem Is The Start of Bringing To Light and Introducing The Problem That The Research Will Conclude With An AnswerDocument3 pagesThe Research Problem Is The Start of Bringing To Light and Introducing The Problem That The Research Will Conclude With An AnswerAlnow AnabNo ratings yet

- Survey of Labs in AustraliaDocument77 pagesSurvey of Labs in Australiaeduman9949No ratings yet

- 1 PilotStudyDocument4 pages1 PilotStudyPriyanka SheoranNo ratings yet

- List of PHD Thesis in ManagementDocument8 pagesList of PHD Thesis in Managementjessicamyerseugene100% (2)

- 7007BMS CW1 Lab Report SEPT Starts 22-23 SLS Assessment Brief Academic Year 2022 - 23 CWDocument12 pages7007BMS CW1 Lab Report SEPT Starts 22-23 SLS Assessment Brief Academic Year 2022 - 23 CWfaizaNo ratings yet

- Program 2879636751 BhIxhQYk7V RemovedDocument15 pagesProgram 2879636751 BhIxhQYk7V RemovedAnonymous 6bsT9wKGuvNo ratings yet

- BC ProposalDocument18 pagesBC ProposalAschalew AyeleNo ratings yet

- List of All My Research Classes - Ucsd LluDocument5 pagesList of All My Research Classes - Ucsd Lluapi-150223943No ratings yet

- Syllabus Therm Proj EngDocument9 pagesSyllabus Therm Proj EngMohd ZaidNo ratings yet

- APCS_Thesis-proposalDocument18 pagesAPCS_Thesis-proposalTâm TrầnNo ratings yet

- Bijl2b - Protocol Template Retrospectieve StudieDocument7 pagesBijl2b - Protocol Template Retrospectieve Studie主邮箱鲁汶学联No ratings yet

- Full Text 01Document62 pagesFull Text 01Raydoon SadeqNo ratings yet

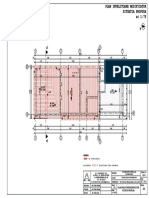

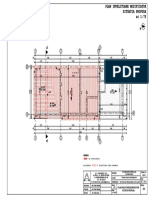

- 12 Plan Invelitoare Propus PDFDocument1 page12 Plan Invelitoare Propus PDFkatika01No ratings yet

- 9 Plan Invelitoare Modifiator Propus PDFDocument1 page9 Plan Invelitoare Modifiator Propus PDFkatika01No ratings yet

- 9 Plan Invelitoare Modifiator Propus PDFDocument1 page9 Plan Invelitoare Modifiator Propus PDFkatika01No ratings yet

- 4 Plan Invelitoare Existent PDFDocument1 page4 Plan Invelitoare Existent PDFkatika01No ratings yet

- 8 Plan Invelitoare Modificator Existent PDFDocument1 page8 Plan Invelitoare Modificator Existent PDFkatika01No ratings yet

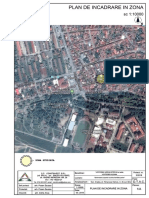

- Plan de incadrare in zona 1:10000 pentru schimbare acoperis locuintaDocument1 pagePlan de incadrare in zona 1:10000 pentru schimbare acoperis locuintakatika01No ratings yet